Predicting Age and Gender with Python and OpenCV

This article is reposted from: 深度学习与计算机视觉

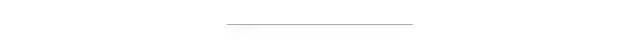

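Age and gender are two key facial attributes and play a fundamental role in social interaction. Estimating them from a single face image is therefore an important task in intelligent applications such as access control, human-computer interaction, law enforcement, marketing intelligence, and visual surveillance.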

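Requirements:

pip install opencv-python (provides the cv2 module imported in the code)

pip install numpy

pip install pafy

pip install youtube_dl (more about youtube-dl: https://rg3.github.io/youtube-dl/)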

import pafy

# Fetch the metadata of a YouTube video and print a few fields
url = 'https://www.youtube.com/watch?v=c07IsbSNqfI&feature=youtu.be'
vPafy = pafy.new(url)
print(vPafy.title)
print(vPafy.rating)
print(vPafy.viewcount)
print(vPafy.author)
print(vPafy.length)
print(vPafy.description)

Testing file uploads with Postman (multipart/form-data)
4.87096786499
11478
Valentin Despa
1688
➡️➡️➡️ 📢 Check my online course on Postman. Get it for only $10 (limited supply):
https://www.udemy.com/postman-the-complete-guide/?couponCode=YOUTUBE10
I will show you how to debug an upload script and demonstrate it with a tool that can make requests encoded as "multipart/form-data" so that you can send also a file.
After this, we will go even further and write tests and begin automating the process.
Here is the Git repository containing the files used for this tutorial:
https://github.com/vdespa/postman-testing-file-uploads

Steps:
1. Get the video URL from YouTube.
2. Detect faces with a Haar cascade.
3. Recognize gender with a CNN.
4. Recognize age with a CNN.

Article link: https://medium.com/analytics-vidhya/how-to-build-a-face-detection-model-in-python-8dc9cecadfe9

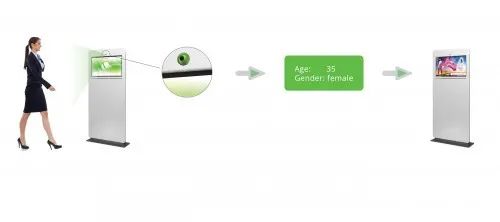

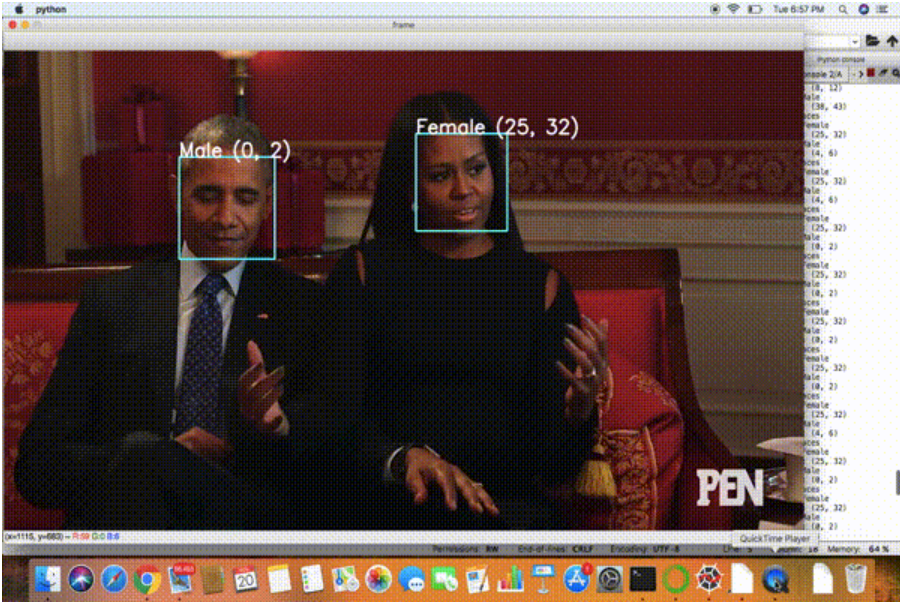

Gender recognition with Fisherfaces is quite popular, and some of you may have tried it or read about it. In this example, however, I take a different approach. In 2015, two Israeli researchers, Gil Levi and Tal Hassner, introduced a CNN model for this task, and this example uses the models they trained. We will use OpenCV's dnn package, where dnn stands for "deep neural network".

import cv2
import numpy as np
import pafy

# URL of the video to predict age and gender on
url = 'https://www.youtube.com/watch?v=c07IsbSNqfI&feature=youtu.be'
vPafy = pafy.new(url)
play = vPafy.getbest(preftype="mp4")

cap = cv2.VideoCapture(play.url)
cap.set(3, 480)  # set width of the frame
cap.set(4, 640)  # set height of the frame

MODEL_MEAN_VALUES = (78.4263377603, 87.7689143744, 114.895847746)
age_list = ['(0, 2)', '(4, 6)', '(8, 12)', '(15, 20)', '(25, 32)', '(38, 43)', '(48, 53)', '(60, 100)']
gender_list = ['Male', 'Female']

def load_caffe_models():
    age_net = cv2.dnn.readNetFromCaffe('deploy_age.prototxt', 'age_net.caffemodel')
    gender_net = cv2.dnn.readNetFromCaffe('deploy_gender.prototxt', 'gender_net.caffemodel')
    return (age_net, gender_net)

def video_detector(age_net, gender_net):
    font = cv2.FONT_HERSHEY_SIMPLEX
    # Load the Haar cascade once instead of on every frame
    face_cascade = cv2.CascadeClassifier('haarcascade_frontalface_alt.xml')
    while True:
        ret, image = cap.read()
        if not ret:  # stop when no frame could be read
            break
        gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
        faces = face_cascade.detectMultiScale(gray, 1.1, 5)
        if len(faces) > 0:
            print("Found {} faces".format(len(faces)))
        for (x, y, w, h) in faces:
            cv2.rectangle(image, (x, y), (x+w, y+h), (255, 255, 0), 2)
            # Get the face: rows are indexed by y, columns by x
            face_img = image[y:y+h, x:x+w].copy()
            blob = cv2.dnn.blobFromImage(face_img, 1, (227, 227), MODEL_MEAN_VALUES, swapRB=False)
            # Predict gender
            gender_net.setInput(blob)
            gender_preds = gender_net.forward()
            gender = gender_list[gender_preds[0].argmax()]
            print("Gender : " + gender)
            # Predict age
            age_net.setInput(blob)
            age_preds = age_net.forward()
            age = age_list[age_preds[0].argmax()]
            print("Age Range: " + age)
            overlay_text = "%s %s" % (gender, age)
            cv2.putText(image, overlay_text, (x, y), font, 1, (255, 255, 255), 2, cv2.LINE_AA)
        cv2.imshow('frame', image)
        # 0xFF is a hexadecimal constant which is 11111111 in binary.
        if cv2.waitKey(1) & 0xFF == ord('q'):
            break
    cap.release()
    cv2.destroyAllWindows()

if __name__ == "__main__":
    age_net, gender_net = load_caffe_models()
    video_detector(age_net, gender_net)

import cv2
import numpy as np
import pafy

url = 'https://www.youtube.com/watch?v=c07IsbSNqfI&feature=youtu.be'
vPafy = pafy.new(url)
play = vPafy.getbest(preftype="mp4")

cap = cv2.VideoCapture(0) #if you are using webcam

cap = cv2.VideoCapture(play.url)

cap.set(3, 480)  # set width of the frame
cap.set(4, 640)  # set height of the frame

MODEL_MEAN_VALUES = (78.4263377603, 87.7689143744, 114.895847746)
age_list = ['(0, 2)', '(4, 6)', '(8, 12)', '(15, 20)', '(25, 32)', '(38, 43)', '(48, 53)', '(60, 100)']
gender_list = ['Male', 'Female']

def load_caffe_models():
    age_net = cv2.dnn.readNetFromCaffe('deploy_age.prototxt', 'age_net.caffemodel')
    gender_net = cv2.dnn.readNetFromCaffe('deploy_gender.prototxt', 'gender_net.caffemodel')
    return (age_net, gender_net)

if __name__ == "__main__":
    age_net, gender_net = load_caffe_models()
    video_detector(age_net, gender_net)

ret, image = cap.read()

gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

face_cascade = cv2.CascadeClassifier('haarcascade_frontalface_alt.xml')

detectMultiScale(image, scaleFactor, minNeighbors)

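image: the first input is the grayscale image.
scaleFactor: how much the image is shrunk at each step of the image pyramid (1.1 means about 10% per step). Searching at several scales compensates for a face looking larger than another merely because it is closer to the camera.
minNeighbors: the detector slides a window over the image, and this parameter sets how many overlapping detections must be found around a candidate window before it is declared a face.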
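To make the effect of scaleFactor concrete, here is a minimal sketch that lists the scales a sliding-window detector would visit. It is a simplified illustration, not OpenCV's actual implementation; the function name `pyramid_scales` and the 24-pixel window size are assumptions made for the example.

```python
def pyramid_scales(image_size, window_size=24, scale_factor=1.1):
    """List the shrink factors a sliding-window detector would search at.

    Hypothetical helper: image_size and window_size are side lengths in pixels.
    """
    if scale_factor <= 1.0:
        raise ValueError("scale_factor must be greater than 1")
    scales = []
    scale = 1.0
    # Keep shrinking until the image is smaller than the detection window.
    while image_size / scale >= window_size:
        scales.append(scale)
        scale *= scale_factor
    return scales


coarse = pyramid_scales(480, scale_factor=1.3)
fine = pyramid_scales(480, scale_factor=1.1)
print(len(coarse), len(fine))
```

A value closer to 1 searches more scales, which tends to find more faces at the cost of more computation per frame.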

faces = face_cascade.detectMultiScale(gray, 1.1, 5)

for (x, y, w, h) in faces:
    cv2.rectangle(image, (x, y), (x+w, y+h), (255, 255, 0), 2)
    # Get the face: rows are indexed by y, columns by x
    face_img = image[y:y+h, x:x+w].copy()
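Because OpenCV images are NumPy arrays indexed as [row, column], a face box (x, y, w, h) is cropped with the y range first and the x range second. The sketch below shows this on a blank frame; the helper `crop_face` and the clamping to the image bounds are assumptions added for illustration and are not part of the original code.

```python
import numpy as np


def crop_face(image, box):
    """Crop an (x, y, w, h) box from an image, clamped to the image bounds."""
    x, y, w, h = box
    img_h, img_w = image.shape[:2]
    x0, y0 = max(0, x), max(0, y)
    x1, y1 = min(img_w, x + w), min(img_h, y + h)
    # Rows (y) come first, columns (x) second.
    return image[y0:y1, x0:x1].copy()


frame = np.zeros((480, 640, 3), dtype=np.uint8)  # blank 640x480 frame
face = crop_face(frame, (100, 50, 80, 120))
print(face.shape)  # (120, 80, 3)
```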

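OpenCV's cv2.dnn.blobFromImage() performs the preprocessing the network expects: mean subtraction, scaling, and optionally channel swapping.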

blob = cv2.dnn.blobFromImage(image, scalefactor=1.0, size=size, mean=mean, swapRB=True)

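image: the input image to preprocess before passing it through the deep neural network for classification.
scalefactor: after mean subtraction, the image can optionally be scaled by a factor. The default is 1.0 (no scaling). Because the mean-subtracted channels are multiplied by this value, pass 1/σ if you want to divide by σ.
size: the spatial size the convolutional network expects. For most current state-of-the-art networks this is 224×224, 227×227, or 299×299.
mean: the mean subtraction values, either a 3-tuple of per-channel means or a single value that is subtracted from every channel. When swapRB=True, give the tuple in (R, G, B) order.
swapRB: OpenCV stores images in BGR channel order, whereas mean values are often given in RGB order. Setting swapRB=True swaps the R and B channels of the image to resolve the mismatch. In current OpenCV releases the default is swapRB=False, so pass True explicitly when the swap is needed.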
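The arithmetic described above can be sketched in plain NumPy. This is an illustrative approximation, not OpenCV's implementation: the helper `to_blob` is hypothetical, it assumes the image already has the target size (no resizing or cropping), and it only mirrors the mean subtraction, scaling, channel swap, and layout change.

```python
import numpy as np


def to_blob(image_bgr, scalefactor=1.0, mean=(0.0, 0.0, 0.0), swap_rb=False):
    """Hypothetical helper mirroring the arithmetic of blobFromImage (no resize)."""
    img = image_bgr.astype(np.float32)
    if swap_rb:
        img = img[:, :, ::-1]  # BGR -> RGB
    # Subtract the per-channel mean, then multiply by the scale factor.
    img = (img - np.asarray(mean, dtype=np.float32)) * scalefactor
    # HWC -> CHW, then add a batch dimension to get NCHW.
    return img.transpose(2, 0, 1)[np.newaxis, ...]


# A 2x2 image where every pixel is B=10, G=20, R=30.
image = np.full((2, 2, 3), (10, 20, 30), dtype=np.uint8)
blob = to_blob(image, mean=(1, 2, 3))
swapped = to_blob(image, mean=(1, 2, 3), swap_rb=True)
print(blob.shape)  # (1, 3, 2, 2)
print(blob[0, :, 0, 0], swapped[0, :, 0, 0])
```

With swap_rb=False the mean tuple is applied to the channels in their stored (B, G, R) order; with swap_rb=True it is applied in (R, G, B) order.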

blob = cv2.dnn.blobFromImage(face_img, 1, (227, 227), MODEL_MEAN_VALUES, swapRB=False)

# Predict gender
gender_net.setInput(blob)
gender_preds = gender_net.forward()
gender = gender_list[gender_preds[0].argmax()]

# Predict age
age_net.setInput(blob)
age_preds = age_net.forward()
age = age_list[age_preds[0].argmax()]
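The label lookup above takes the index of the highest score in the first row of the network output. The sketch below reproduces that step on a made-up score array so it runs without the model files; the helper `top_label` and the scores are assumptions for illustration, and the output is assumed to have one row per input image, as the indexing `preds[0]` in the code implies.

```python
import numpy as np

gender_list = ['Male', 'Female']


def top_label(preds, labels):
    """Return the label with the highest score and that score."""
    scores = preds[0]  # scores for the first (only) image in the batch
    idx = int(scores.argmax())
    return labels[idx], float(scores[idx])


# Made-up scores standing in for gender_net.forward()
fake_preds = np.array([[0.2, 0.8]], dtype=np.float32)
label, confidence = top_label(fake_preds, gender_list)
print(label, round(confidence, 2))
```

Returning the score alongside the label makes it possible to skip or flag low-confidence predictions before drawing them on the frame.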

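Besides the image, cv2.putText() takes:
the text to write
the position coordinates (the bottom-left corner where the text starts)
the font type (see the cv2.putText() documentation for supported fonts)
the font scale (sets the font size)
the usual options such as color, thickness, and line type; cv2.LINE_AA is recommended for a better look.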

overlay_text = "%s %s" % (gender, age)
cv2.putText(image, overlay_text, (x, y), font, 1, (255, 255, 255), 2, cv2.LINE_AA)
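Since the position passed to cv2.putText() is the bottom-left corner of the text, a label anchored at a face box near the top of the frame can end up partly outside the image. One possible way to handle this is sketched below; the helper `label_origin` and its margin values are hypothetical and not part of the original code.

```python
def label_origin(x, y, margin=10, min_y=20):
    """Bottom-left corner for a label drawn just above a box whose top-left is (x, y).

    Hypothetical helper: min_y keeps the text baseline far enough from the
    top edge for the glyphs to stay visible.
    """
    return (x, max(y - margin, min_y))


print(label_origin(100, 50))  # (100, 40)
print(label_origin(100, 5))   # (100, 20)
```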

cv2.imshow('frame', image)

if cv2.waitKey(1) & 0xFF == ord('q'):
    break

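The return value of cv2.waitKey(1) is ANDed with 0xFF to discard everything above the lowest 8 bits, and the result is compared with the ASCII code of the letter q. A match means the user pressed the q key to quit.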
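The masking can be tried without a window or a camera. In this small sketch the raw key codes are made-up values: 0x100071 stands for a platform that sets extra high bits on top of the ASCII code of q, and -1 is what cv2.waitKey() returns when no key was pressed. The helper name `is_quit_key` is an assumption for the example.

```python
def is_quit_key(raw_key, quit_char='q'):
    """Keep only the lowest 8 bits of the key code and compare with the quit key."""
    # In Python, & binds tighter than ==, so this is (raw_key & 0xFF) == ord(...).
    return raw_key & 0xFF == ord(quit_char)


print(is_quit_key(0x100071))  # True: low byte is 0x71, the ASCII code of 'q'
print(is_quit_key(ord('a')))  # False
print(is_quit_key(-1))        # False: no key pressed
```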
