Five PyTorch Parallel Training Methods Every Grad Student Should Know (Single Machine, Multiple GPUs)

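Introduction

Using PyTorch, the author wrote single-machine, multi-GPU usage examples on ImageNet for several acceleration libraries, ready for readers to reuse.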

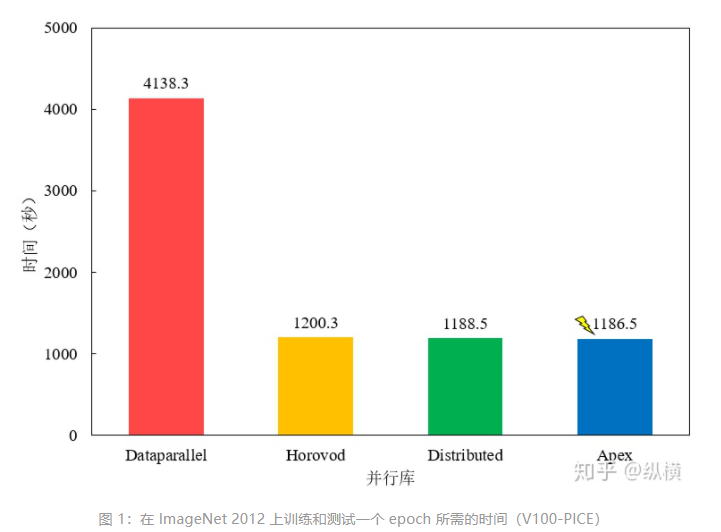

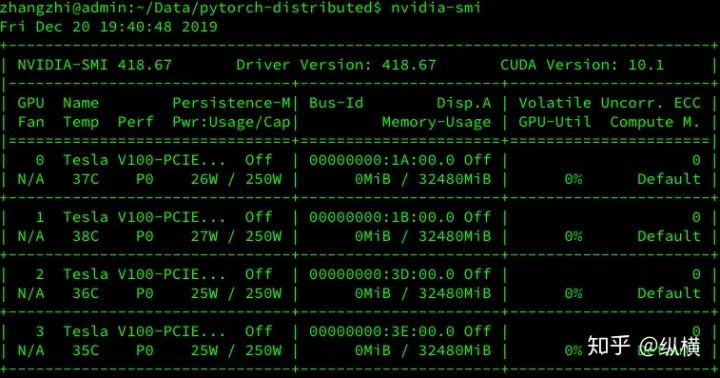

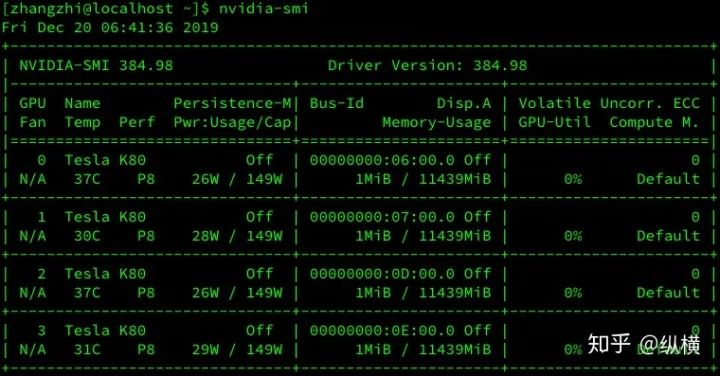

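It's Friday again, a fine day for slacking off: the machines are learning and the humans are bored. Before opening Bilibili to "study", I looked at my half-idle GPUs and decided to write something to feed them~ So I took 4 cards each from V100-PCIe / V100 / K80 to test which distributed training library is fastest! Now the leftover GPU memory finally gets used up, and I'm my advisor's diligent student once more (what a clever little thing I am)!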

Take-Away

I wrote usage examples on ImageNet (single machine, multiple GPUs) for several acceleration libraries in PyTorch. If you need them, treat them as a quickstart and copy the relevant parts into your own project (GitHub links below):

1. Simple and convenient: nn.DataParallel

https://github.com/tczhangzhi/pytorch-distributed/blob/master/dataparallel.py

2. Accelerating parallel training with torch.distributed

https://github.com/tczhangzhi/pytorch-distributed/blob/master/distributed.py

3. Replacing the launcher with torch.multiprocessing

https://github.com/tczhangzhi/pytorch-distributed/blob/master/multiprocessing_distributed.py

4. Further acceleration with Apex

https://github.com/tczhangzhi/pytorch-distributed/blob/master/apex_distributed.py

5. An elegant implementation with Horovod

https://github.com/tczhangzhi/pytorch-distributed/blob/master/horovod_distributed.py

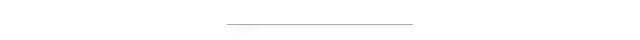

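Here I record run-time tests on ImageNet using 4 Tesla V100-PCIe cards. Apex gave the best speedup, but the gap to Horovod/Distributed is small, so for everyday use the built-in Distributed is fine. DataParallel is slower and not recommended. (Results on V100/K80 will be added later; other experiments got interleaved, so the tests were interrupted.)

Below is a brief record of how each library does distributed training, to serve as the code's README (what a clever little thing I am)~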

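Simple and convenient: nn.DataParallel

DataParallel, driven by a single process, loads the model and data onto multiple GPUs, controls how data flows between them, and coordinates the model replicas on the different GPUs for parallel training (the finer-grained primitives include scatter, gather and so on).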
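To make the scatter/gather idea concrete, here is a minimal pure-Python sketch. It is not PyTorch's implementation: `scatter_batch` and `gather_outputs` are hypothetical helpers, lists stand in for tensors, and the chunk sizes follow the ceil-based splitting that, as far as I know, `torch.chunk` uses along the batch dimension.

```python
import math


def scatter_batch(batch, num_devices):
    """Split a batch into at most `num_devices` contiguous chunks along dim 0."""
    if not batch:
        return []
    chunk_size = math.ceil(len(batch) / num_devices)
    return [batch[i:i + chunk_size] for i in range(0, len(batch), chunk_size)]


def gather_outputs(chunks):
    """Concatenate per-device outputs back into one batch, preserving order."""
    return [item for chunk in chunks for item in chunk]


batch = list(range(10))                                   # stand-in for 10 samples
chunks = scatter_batch(batch, 4)                          # one chunk per GPU
outputs = [[x * 2 for x in chunk] for chunk in chunks]    # fake per-GPU forward pass
result = gather_outputs(outputs)                          # collected on one device
print([len(c) for c in chunks])
```

Note that the last chunk can be smaller than the others, so the GPUs do not always receive the same amount of work.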

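DataParallel is very easy to use: wrap the model with DataParallel and set a few parameters. You need to specify which GPUs take part in training, device_ids=gpus, and which GPU the outputs are gathered on, output_device=gpus[0]. DataParallel then automatically splits the data and loads it onto the corresponding GPUs, replicates the model to them, runs the forward pass, and gathers the gradients during the backward pass: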

    model = nn.DataParallel(model.cuda(), device_ids=gpus, output_device=gpus[0])

Note that both the model and the data must be loaded onto the GPU first; only then can the DataParallel module process them, otherwise an error is raised:

    # model.cuda() is required here
    model = nn.DataParallel(model.cuda(), device_ids=gpus, output_device=gpus[0])

    for epoch in range(100):
        for batch_idx, (images, target) in enumerate(train_loader):
            # images and target must be moved to the GPU as well
            images = images.cuda(non_blocking=True)
            target = target.cuda(non_blocking=True)
            ...
            output = model(images)
            loss = criterion(output, target)
            ...
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

    # main.py

    import torch
    import torch.nn as nn
    import torch.optim as optim

    gpus = [0, 1, 2, 3]
    torch.cuda.set_device('cuda:{}'.format(gpus[0]))

    train_dataset = ...
    train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=...)

    model = ...
    # Move the model to the GPU before wrapping it
    model = nn.DataParallel(model.cuda(), device_ids=gpus, output_device=gpus[0])

    optimizer = optim.SGD(model.parameters(), lr=...)

    for epoch in range(100):
        for batch_idx, (images, target) in enumerate(train_loader):
            images = images.cuda(non_blocking=True)
            target = target.cuda(non_blocking=True)
            ...
            output = model(images)
            loss = criterion(output, target)
            ...
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

Run it with:

    python main.py

Accelerating parallel training with torch.distributed

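Since PyTorch 1.0, the common distributed primitives have finally been wrapped officially, with support for all-reduce, broadcast, send, receive and so on. CPU communication goes through backends such as Gloo or MPI, and GPU communication through NCCL. The PyTorch team has also pointed to DistributedDataParallel as the fix for DataParallel's slowness and unbalanced GPU load, and it is quite mature by now~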
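Why all-reduce is enough to keep the replicas in sync can be checked with a little arithmetic. The sketch below is a pure-Python illustration, not torch.distributed code: `mse_grad` and `all_reduce_mean` are hypothetical helpers, and the toy model is a single weight `w` fitted with a mean-squared-error loss. With equal-sized shards, averaging the per-rank gradients gives the same value as the gradient over the full batch.

```python
import math


def mse_grad(w, xs, ys):
    """Gradient of mean((w * x - y) ** 2) with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def all_reduce_mean(values):
    """Simulated all-reduce: every rank ends up holding the mean of all values."""
    mean = sum(values) / len(values)
    return [mean] * len(values)


w = 0.5
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.0 * x for x in xs]
world_size = 4

# Each rank computes a gradient on its own equal-sized, disjoint shard.
local_grads = [mse_grad(w, xs[r::world_size], ys[r::world_size])
               for r in range(world_size)]
synced_grads = all_reduce_mean(local_grads)   # what every rank holds afterwards
full_batch_grad = mse_grad(w, xs, ys)         # single-process reference

print(all(math.isclose(g, full_batch_grad) for g in synced_grads))
```

Because every rank applies the same averaged gradient, the model replicas stay identical after each optimizer step without any parameter server.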

    # 1. The launcher passes each process its index (the GPU index) as --local_rank
    parser = argparse.ArgumentParser()
    parser.add_argument('--local_rank', default=-1, type=int,
                        help='node rank for distributed training')
    args = parser.parse_args()
    print(args.local_rank)

    # 2. Choose the backend used for communication between GPUs
    dist.init_process_group(backend='nccl')

    # 3. Partition the dataset so that each process loads its own shard
    train_sampler = torch.utils.data.distributed.DistributedSampler(train_dataset)
    train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=..., sampler=train_sampler)

    # 4. Bind this process to its GPU and move the model there
    torch.cuda.set_device(args.local_rank)
    model.cuda()

    # 5. Wrap the model with DistributedDataParallel
    model = torch.nn.parallel.DistributedDataParallel(model, device_ids=[args.local_rank])

    # 6. Train as usual, moving each batch to the current GPU
    for epoch in range(100):
        for batch_idx, (images, target) in enumerate(train_loader):
            images = images.cuda(non_blocking=True)
            target = target.cuda(non_blocking=True)
            ...
            output = model(images)
            loss = criterion(output, target)
            ...
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()
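The DistributedSampler used above decides which samples each process sees. As a rough sketch of the idea (my own simplification, not the library source): with shuffling off, the index list is padded by wrapping around until it divides evenly, and each rank then takes every `num_replicas`-th index. `shard_indices` is a hypothetical helper and assumes the dataset has at least `num_replicas` samples.

```python
import math


def shard_indices(dataset_len, num_replicas, rank):
    """Indices seen by `rank` under a pad-then-stride split (no shuffling)."""
    num_samples = math.ceil(dataset_len / num_replicas)   # samples per rank
    total_size = num_samples * num_replicas
    indices = list(range(dataset_len))
    # Pad by wrapping around so every rank gets the same number of samples.
    indices += indices[:total_size - dataset_len]
    return indices[rank:total_size:num_replicas]


shards = [shard_indices(10, 4, rank) for rank in range(4)]
for rank, shard in enumerate(shards):
    print(rank, shard)
```

A consequence of the padding is that a few samples are seen twice per epoch when the dataset size is not a multiple of the number of processes.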

    # main.py
    import argparse

    import torch
    import torch.distributed as dist
    import torch.optim as optim

    parser = argparse.ArgumentParser()
    parser.add_argument('--local_rank', default=-1, type=int,
                        help='node rank for distributed training')
    args = parser.parse_args()

    dist.init_process_group(backend='nccl')
    torch.cuda.set_device(args.local_rank)

    train_dataset = ...
    train_sampler = torch.utils.data.distributed.DistributedSampler(train_dataset)
    train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=..., sampler=train_sampler)

    model = ...
    # The model must be on this process's GPU before it is wrapped
    model.cuda()
    model = torch.nn.parallel.DistributedDataParallel(model, device_ids=[args.local_rank])

    optimizer = optim.SGD(model.parameters(), lr=...)

    for epoch in range(100):
        for batch_idx, (images, target) in enumerate(train_loader):
            images = images.cuda(non_blocking=True)
            target = target.cuda(non_blocking=True)
            ...
            output = model(images)
            loss = criterion(output, target)
            ...
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

Launch it with torch.distributed.launch:

    CUDA_VISIBLE_DEVICES=0,1,2,3 python -m torch.distributed.launch --nproc_per_node=4 main.py

Replacing the launcher with torch.multiprocessing

Some readers may be more familiar with torch.multiprocessing. You can also use it to manage the processes by hand, which sidesteps some quirks in how torch.distributed.launch starts and exits processes automatically~
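The key convention to remember is that `mp.spawn(fn, nprocs=N, args=(...))` calls `fn(i, *args)` once per process, with `i` running from 0 to N-1. The sketch below imitates only that calling convention: `fake_spawn` is a hypothetical stand-in that uses threads instead of processes and returns the workers' results (the real `mp.spawn` does not), and the `node_rank` argument is my own addition to show how a global rank would be derived.

```python
from concurrent.futures import ThreadPoolExecutor


def fake_spawn(fn, nprocs, args=()):
    """Stand-in for mp.spawn: call fn(i, *args) for i in 0..nprocs-1."""
    with ThreadPoolExecutor(max_workers=nprocs) as pool:
        futures = [pool.submit(fn, i, *args) for i in range(nprocs)]
        return [f.result() for f in futures]


def main_worker(gpu, ngpus_per_node, node_rank):
    # On a single machine node_rank is 0, so the global rank equals the GPU index.
    rank = node_rank * ngpus_per_node + gpu
    return {"gpu": gpu, "rank": rank, "world_size": ngpus_per_node}


results = fake_spawn(main_worker, nprocs=4, args=(4, 0))
print(results)
```

This is why the worker function takes the process index as its first parameter and can use it directly as both the GPU index and the rank on a single machine.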

    import torch.multiprocessing as mp

    # mp.spawn runs main_worker(i, 4, myargs) in each of the 4 processes, i = 0..3
    mp.spawn(main_worker, nprocs=4, args=(4, myargs))

    # The first argument is the process index, used here as both GPU index and rank
    def main_worker(gpu, ngpus_per_node, args):
        dist.init_process_group(backend='nccl', init_method='tcp://127.0.0.1:23456', world_size=4, rank=gpu)
        torch.cuda.set_device(gpu)

        train_dataset = ...
        train_sampler = torch.utils.data.distributed.DistributedSampler(train_dataset)
        train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=..., sampler=train_sampler)

        model = ...
        model.cuda()
        model = torch.nn.parallel.DistributedDataParallel(model, device_ids=[gpu])

        optimizer = optim.SGD(model.parameters(), lr=...)

        for epoch in range(100):
            for batch_idx, (images, target) in enumerate(train_loader):
                images = images.cuda(non_blocking=True)
                target = target.cuda(non_blocking=True)
                ...
                output = model(images)
                loss = criterion(output, target)
                ...
                optimizer.zero_grad()
                loss.backward()
                optimizer.step()

    # Without the launcher, init_method, world_size and rank must be given explicitly
    dist.init_process_group(backend='nccl', init_method='tcp://127.0.0.1:23456', world_size=4, rank=gpu)

    # main.py

    import torch
    import torch.distributed as dist
    import torch.multiprocessing as mp
    import torch.optim as optim

    def main_worker(gpu, ngpus_per_node, args):
        # gpu is the process index supplied by mp.spawn
        dist.init_process_group(backend='nccl', init_method='tcp://127.0.0.1:23456', world_size=4, rank=gpu)
        torch.cuda.set_device(gpu)

        train_dataset = ...
        train_sampler = torch.utils.data.distributed.DistributedSampler(train_dataset)
        train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=..., sampler=train_sampler)

        model = ...
        model.cuda()
        model = torch.nn.parallel.DistributedDataParallel(model, device_ids=[gpu])

        optimizer = optim.SGD(model.parameters(), lr=...)

        for epoch in range(100):
            for batch_idx, (images, target) in enumerate(train_loader):
                images = images.cuda(non_blocking=True)
                target = target.cuda(non_blocking=True)
                ...
                output = model(images)
                loss = criterion(output, target)
                ...
                optimizer.zero_grad()
                loss.backward()
                optimizer.step()

    if __name__ == '__main__':
        myargs = ...  # your own arguments, forwarded to every worker
        mp.spawn(main_worker, nprocs=4, args=(4, myargs))

Run it with:

    python main.py

Further acceleration with Apex

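Apex is NVIDIA's open-source library for mixed-precision and distributed training. It wraps the mixed-precision training flow so that changing two or three lines of configuration is enough to enable it, which substantially reduces GPU memory usage and saves compute time. Apex also provides a wrapper for distributed training, optimized for NVIDIA's NCCL communication library.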
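Mixed-precision training scales the loss before the backward pass, and a tiny stdlib experiment shows why that matters. This sketch is not Apex code: `to_fp16` is a hypothetical helper that rounds a Python float to half precision via `struct`, and the gradient value and scale factor are picked only for illustration.

```python
import struct


def to_fp16(x):
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("e", struct.pack("e", x))[0]


grad = 1e-8           # a small gradient value, chosen for illustration
loss_scale = 1024.0   # illustrative scale factor

unscaled = to_fp16(grad)               # too small for fp16: flushed to zero
scaled = to_fp16(grad * loss_scale)    # scaled first, so it survives in fp16
recovered = scaled / loss_scale        # unscaled again in higher precision

print(unscaled, recovered)
```

Scaling the loss scales every gradient by the same factor, so small gradients stay representable in fp16 and can be divided back before the optimizer step.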

    from apex import amp

    # Wrap the model and optimizer for mixed-precision training
    model, optimizer = amp.initialize(model, optimizer)

    from apex.parallel import DistributedDataParallel

    # Apex's DistributedDataParallel takes no device_ids
    model = DistributedDataParallel(model)
    # # torch.distributed
    # model = torch.nn.parallel.DistributedDataParallel(model, device_ids=[args.local_rank])
    # model = torch.nn.parallel.DistributedDataParallel(model, device_ids=[args.local_rank], output_device=args.local_rank)

    # Scale the loss before the backward pass
    with amp.scale_loss(loss, optimizer) as scaled_loss:
        scaled_loss.backward()

    # main.py

    import argparse

    import torch
    import torch.distributed as dist
    import torch.optim as optim
    from apex import amp
    from apex.parallel import DistributedDataParallel

    parser = argparse.ArgumentParser()
    parser.add_argument('--local_rank', default=-1, type=int,
                        help='node rank for distributed training')
    args = parser.parse_args()

    dist.init_process_group(backend='nccl')
    torch.cuda.set_device(args.local_rank)

    train_dataset = ...
    train_sampler = torch.utils.data.distributed.DistributedSampler(train_dataset)
    train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=..., sampler=train_sampler)

    model = ...
    model.cuda()
    optimizer = optim.SGD(model.parameters(), lr=...)

    # amp.initialize needs an existing optimizer and a model already on the GPU
    model, optimizer = amp.initialize(model, optimizer)
    # Apex's DistributedDataParallel takes no device_ids; it uses the current device
    model = DistributedDataParallel(model)

    for epoch in range(100):
        for batch_idx, (images, target) in enumerate(train_loader):
            images = images.cuda(non_blocking=True)
            target = target.cuda(non_blocking=True)
            ...
            output = model(images)
            loss = criterion(output, target)
            optimizer.zero_grad()
            with amp.scale_loss(loss, optimizer) as scaled_loss:
                scaled_loss.backward()
            optimizer.step()

Launch it with torch.distributed.launch:

    CUDA_VISIBLE_DEVICES=0,1,2,3 python -m torch.distributed.launch --nproc_per_node=4 main.py

An elegant implementation with Horovod

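Horovod is Uber's open-source deep learning tool. Its design draws on the strengths of Facebook's "Training ImageNet In 1 Hour" and Baidu's "Ring Allreduce", and it integrates painlessly with deep learning frameworks such as PyTorch and TensorFlow for parallel training.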
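For readers who have not met ring all-reduce before, the following pure-Python simulation sketches the idea. It is not Horovod's implementation: `ring_allreduce` is a hypothetical function, lists stand in for gradient tensors, and it assumes the vector length is divisible by the number of workers. Each worker only ever sends to its right-hand neighbour, first accumulating partial sums chunk by chunk and then circulating the finished chunks.

```python
def ring_allreduce(vectors):
    """Sum equal-length vectors across workers arranged in a ring.

    vectors[i] is worker i's data; its length must be divisible by the
    number of workers. Returns every worker's buffer after the exchange.
    """
    n = len(vectors)
    size = len(vectors[0]) // n          # elements per chunk
    bufs = [list(v) for v in vectors]

    def chunk(buf, c):
        return buf[c * size:(c + 1) * size]

    # Phase 1, scatter-reduce: after n - 1 steps worker i holds the
    # complete sum of chunk (i + 1) % n.
    for step in range(n - 1):
        msgs = [(i, (i - step) % n) for i in range(n)]
        payloads = [chunk(bufs[i], c) for i, c in msgs]   # snapshot before writing
        for (src, c), data in zip(msgs, payloads):
            dst = (src + 1) % n
            for k, value in enumerate(data):
                bufs[dst][c * size + k] += value

    # Phase 2, all-gather: completed chunks travel once around the ring.
    for step in range(n - 1):
        msgs = [(i, (i + 1 - step) % n) for i in range(n)]
        payloads = [chunk(bufs[i], c) for i, c in msgs]
        for (src, c), data in zip(msgs, payloads):
            dst = (src + 1) % n
            bufs[dst][c * size:(c + 1) * size] = data
    return bufs


workers = [[1, 2, 3, 4], [10, 20, 30, 40], [100, 200, 300, 400], [1000, 2000, 3000, 4000]]
reduced = ring_allreduce(workers)
print(reduced[0])
```

In each step every worker sends only one chunk, so the data sent per worker stays roughly constant as workers are added, which is the property that makes the ring layout attractive.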

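    import horovod.torch as hvd

    # Initialize Horovod first, then read this process's local index
    hvd.init()
    hvd.local_rank()

    # Partition the dataset; with Horovod, num_replicas and rank are passed explicitly
    train_sampler = torch.utils.data.distributed.DistributedSampler(
        train_dataset, num_replicas=hvd.size(), rank=hvd.rank())
    train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=..., sampler=train_sampler)

    # Broadcast the initial parameters from rank 0 to all processes
    hvd.broadcast_parameters(model.state_dict(), root_rank=0)

    # Wrap the optimizer; gradients are compressed to fp16 for communication
    optimizer = hvd.DistributedOptimizer(optimizer, named_parameters=model.named_parameters(), compression=hvd.Compression.fp16)

    # Bind this process to its GPU and train as usual
    torch.cuda.set_device(hvd.local_rank())

    for epoch in range(100):
        for batch_idx, (images, target) in enumerate(train_loader):
            images = images.cuda(non_blocking=True)
            target = target.cuda(non_blocking=True)
            ...
            output = model(images)
            loss = criterion(output, target)
            ...
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

    # main.py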

    import torch
    import torch.optim as optim
    import horovod.torch as hvd

    hvd.init()
    torch.cuda.set_device(hvd.local_rank())

    train_dataset = ...
    train_sampler = torch.utils.data.distributed.DistributedSampler(
        train_dataset, num_replicas=hvd.size(), rank=hvd.rank())
    train_loader = torch.utils.data.DataLoader(train_dataset, batch_size=..., sampler=train_sampler)

    model = ...
    model.cuda()

    optimizer = optim.SGD(model.parameters(), lr=...)
    # Gradients are averaged across processes inside optimizer.step()
    optimizer = hvd.DistributedOptimizer(optimizer, named_parameters=model.named_parameters())

    # Start every process from rank 0's parameters
    hvd.broadcast_parameters(model.state_dict(), root_rank=0)

    for epoch in range(100):
        for batch_idx, (images, target) in enumerate(train_loader):
            images = images.cuda(non_blocking=True)
            target = target.cuda(non_blocking=True)
            ...
            output = model(images)
            loss = criterion(output, target)
            ...
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

Launch it with horovodrun:

    CUDA_VISIBLE_DEVICES=0,1,2,3 horovodrun -np 4 -H localhost:4 --verbose python main.py
