Some Object Detection Tricks


This article is reposted from: 视学算法

The source code is in mmdet/datasets/extra_aug.py, which includes image augmentation methods such as RandomCrop, brightness, contrast, saturation, and ExtraAugmentation.

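Augmentations are added in train_pipeline or test_pipeline (usually only training is augmented and testing is not). For example, with the RandomFlip augmentation, flip_ratio is the probability of a random flip: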

    train_pipeline = [
        dict(type='LoadImageFromFile'),
        dict(type='LoadAnnotations', with_bbox=True),
        dict(type='Resize', img_scale=(1333, 800), keep_ratio=True),
        dict(type='RandomFlip', flip_ratio=0.5),
        dict(type='Normalize', **img_norm_cfg),
        dict(type='Pad', size_divisor=32),
        dict(type='DefaultFormatBundle'),
        dict(type='Collect', keys=['img', 'gt_bboxes', 'gt_labels']),
    ]
    test_pipeline = [
        dict(type='LoadImageFromFile'),
        dict(
            type='MultiScaleFlipAug',
            img_scale=(1333, 800),
            flip=False,
            transforms=[
                dict(type='Resize', keep_ratio=True),
                dict(type='RandomFlip'),
                dict(type='Normalize', **img_norm_cfg),
                dict(type='Pad', size_divisor=32),
                dict(type='ImageToTensor', keys=['img']),
                dict(type='Collect', keys=['img']),
            ])
    ]
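To make the role of flip_ratio concrete, here is a minimal sketch of what a random horizontal flip does to box coordinates. The function `random_flip` and its arguments are hypothetical names for illustration, not the mmdet implementation, and the sketch ignores the one-pixel offset that some implementations apply.

```python
import random


def random_flip(img_width, bboxes, flip_ratio=0.5, rng=random):
    """Horizontally flip boxes with probability flip_ratio.

    bboxes are (x1, y1, x2, y2) tuples in pixels. Only the coordinates are
    handled here; the image pixels would be mirrored in the same branch.
    Returns the (possibly flipped) boxes and a flag telling whether a flip
    happened.
    """
    if rng.random() >= flip_ratio:
        return bboxes, False
    flipped = [(img_width - x2, y1, img_width - x1, y2)
               for (x1, y1, x2, y2) in bboxes]
    return flipped, True


boxes = [(10, 20, 110, 220)]
print(random_flip(640, boxes, flip_ratio=0.5))
```

With flip_ratio=0.5, roughly half of the training images are mirrored, and the boxes have to be mirrored with them so that annotations stay aligned.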

The source code is in mmdet/datasets/custom.py; the augmentation-related code is:

    def pre_pipeline(self, results):
        results['img_prefix'] = self.img_prefix
        results['seg_prefix'] = self.seg_prefix
        results['proposal_file'] = self.proposal_file
        results['bbox_fields'] = []
        results['mask_fields'] = []

    train_pipeline = [
        dict(type='LoadImageFromFile'),
        dict(type='LoadAnnotations', with_bbox=True),
        dict(type='Resize', img_scale=(1333, 800), keep_ratio=True),  # multiple scales can be used here: [(), ()]
        dict(type='RandomFlip', flip_ratio=0.5),
        dict(type='Normalize', **img_norm_cfg),
        dict(type='Pad', size_divisor=32),
        dict(type='DefaultFormatBundle'),
        dict(type='Collect', keys=['img', 'gt_bboxes', 'gt_labels']),
    ]
    test_pipeline = [
        dict(type='LoadImageFromFile'),
        dict(
            type='MultiScaleFlipAug',
            img_scale=(1333, 800),
            flip=False,
            transforms=[
                dict(type='Resize', keep_ratio=True),
                dict(type='RandomFlip'),
                dict(type='Normalize', **img_norm_cfg),
                dict(type='Pad', size_divisor=32),
                dict(type='ImageToTensor', keys=['img']),
                dict(type='Collect', keys=['img']),
            ])
    ]
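The multi-scale option mentioned in the comment above can be pictured as choosing one candidate scale per image and then resizing with keep_ratio=True. The sketch below is an assumption-laden illustration: `pick_scale` and `rescale_size` are hypothetical helpers, and the candidate scales are made up rather than taken from the original config.

```python
import random


def rescale_size(orig_w, orig_h, scale):
    """New (w, h) when the aspect ratio is kept.

    The long side is limited by max(scale) and the short side by min(scale);
    the smaller of the two scaling factors wins.
    """
    max_long, max_short = max(scale), min(scale)
    factor = min(max_long / max(orig_w, orig_h),
                 max_short / min(orig_w, orig_h))
    return int(orig_w * factor + 0.5), int(orig_h * factor + 0.5)


def pick_scale(img_scales, rng=random):
    """Choose one candidate scale at random for the current image."""
    return rng.choice(img_scales)


candidate_scales = [(1333, 640), (1333, 800)]  # illustrative values only
scale = pick_scale(candidate_scales)
print(scale, rescale_size(1920, 1080, scale))
```

Training on several scales exposes the detector to objects at different apparent sizes; at test time a single fixed scale, as in test_pipeline, keeps results reproducible.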

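The box voting threshold.

The minimum number of times a box must appear across the different inputs before it is allowed in the output.

The score threshold: when a box's score falls below this threshold, the box is deleted.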
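The three parameters above can be read as the knobs of a merging step over detections coming from several augmented inputs. The following is only a sketch under that reading: `box_voting`, `vote_iou_thr`, `min_votes`, and `score_thr` are hypothetical names and default values, and the score-weighted averaging is one common choice rather than the method used in the original article.

```python
def iou(a, b):
    """IoU of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def box_voting(dets, vote_iou_thr=0.7, min_votes=2, score_thr=0.3):
    """Merge detections from several inputs.

    dets: list of (box, score, source_id), one source_id per augmented input.
    Returns a list of (merged_box, score) pairs.
    """
    # Score threshold: drop low-scoring boxes first.
    dets = sorted((d for d in dets if d[1] >= score_thr), key=lambda d: -d[1])
    used = [False] * len(dets)
    merged = []
    for i, (box, score, _) in enumerate(dets):
        if used[i]:
            continue
        # Box voting threshold: group boxes that overlap the current one.
        group = [j for j in range(i, len(dets))
                 if not used[j] and iou(box, dets[j][0]) >= vote_iou_thr]
        for j in group:
            used[j] = True
        # Minimum appearances: require support from enough distinct inputs.
        if len({dets[j][2] for j in group}) < min_votes:
            continue
        total = sum(dets[j][1] for j in group)
        voted = tuple(sum(dets[j][0][k] * dets[j][1] for j in group) / total
                      for k in range(4))
        merged.append((voted, score))
    return merged


dets = [((10, 10, 50, 50), 0.9, 0), ((12, 10, 52, 50), 0.6, 1),
        ((200, 200, 240, 240), 0.8, 0), ((11, 11, 51, 51), 0.1, 2)]
print(box_voting(dets))
```

In this toy input the first two boxes support each other and are merged, the isolated box is dropped for lack of votes, and the last box is removed by the score threshold.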

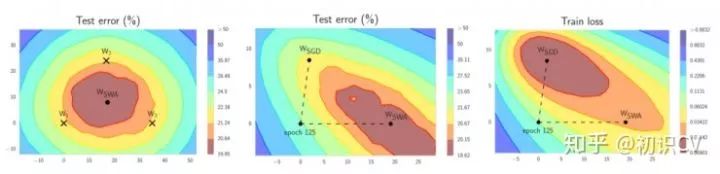

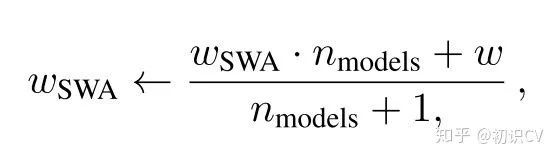

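The first model stores the running average of the model weights (w_SWA). After training ends, it is the final model used for prediction.

The second model (w) travels through weight space, exploring it under a cyclical learning-rate schedule.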
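The interplay between the two sets of weights can be sketched in a few lines of plain Python. Everything here is illustrative: weights are flat lists of floats, `grad_fn` is a hypothetical gradient function, and the cosine-shaped cycle with `lr_max` and `lr_min` is an assumed schedule, not one taken from the article.

```python
import math


def cyclical_lr(step, cycle_len, lr_max=0.02, lr_min=0.002):
    """Cosine decay from lr_max towards lr_min, restarted every cycle_len steps."""
    t = (step % cycle_len) / cycle_len
    return lr_min + 0.5 * (lr_max - lr_min) * (1 + math.cos(math.pi * t))


def update_swa(w_swa, w, n_averaged):
    """Fold the current weights w into the mean of n_averaged earlier snapshots."""
    return [(a * n_averaged + b) / (n_averaged + 1) for a, b in zip(w_swa, w)]


def train_with_swa(w, grad_fn, num_steps, cycle_len):
    """Plain SGD on w, plus a running average w_swa taken once per cycle."""
    w_swa, n_averaged = list(w), 0
    for step in range(num_steps):
        lr = cyclical_lr(step, cycle_len)
        w = [wi - lr * gi for wi, gi in zip(w, grad_fn(w))]
        if (step + 1) % cycle_len == 0:
            # End of a cycle, where the learning rate is near its minimum.
            w_swa = update_swa(w_swa, w, n_averaged)
            n_averaged += 1
    return w, w_swa


# Toy problem: minimise x^2, whose gradient is 2x.
w, w_swa = train_with_swa([1.0], lambda ws: [2 * x for x in ws],
                          num_steps=20, cycle_len=5)
print(w, w_swa)
```

Only w receives gradient updates; w_SWA is never trained directly and simply accumulates snapshots of w.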

    rcnn=[
        dict(
            assigner=dict(
                type='MaxIoUAssigner',
                pos_iou_thr=0.4,  # changed
                neg_iou_thr=0.4,
                min_pos_iou=0.4,
                ignore_iof_thr=-1),
            sampler=dict(
                type='OHEMSampler',
                num=512,
                pos_fraction=0.25,
                neg_pos_ub=-1,
                add_gt_as_proposals=True),
            pos_weight=-1,
            debug=False),
        dict(
            assigner=dict(
                type='MaxIoUAssigner',
                pos_iou_thr=0.5,
                neg_iou_thr=0.5,
                min_pos_iou=0.5,
                ignore_iof_thr=-1),
            sampler=dict(
                type='OHEMSampler',  # handles hard vs. easy examples and also the positive/negative ratio problem
                num=512,
                pos_fraction=0.25,
                neg_pos_ub=-1,
                add_gt_as_proposals=True),
            pos_weight=-1,
            debug=False),
        dict(
            assigner=dict(
                type='MaxIoUAssigner',
                pos_iou_thr=0.6,
                neg_iou_thr=0.6,
                min_pos_iou=0.6,
                ignore_iof_thr=-1),
            sampler=dict(
                type='OHEMSampler',
                num=512,
                pos_fraction=0.25,
                neg_pos_ub=-1,
                add_gt_as_proposals=True),
            pos_weight=-1,
            debug=False)
    ],
    stage_loss_weights=[1, 0.5, 0.25])
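The idea behind an OHEM-style sampler is to keep the RoIs with the highest current loss instead of sampling at random. A minimal sketch follows, reusing the num and pos_fraction values from the config above; `ohem_sample` is a hypothetical helper and is not the mmdet OHEMSampler code.

```python
def ohem_sample(pos_losses, neg_losses, num=512, pos_fraction=0.25):
    """Return indices of the highest-loss positives and negatives.

    pos_losses / neg_losses hold the current per-RoI loss of the candidate
    positive and negative RoIs. At most num * pos_fraction positives are
    kept; negatives fill the remaining budget.
    """
    def hardest(losses, k):
        order = sorted(range(len(losses)), key=lambda i: losses[i],
                       reverse=True)
        return order[:k]

    num_pos = min(int(num * pos_fraction), len(pos_losses))
    num_neg = min(num - num_pos, len(neg_losses))
    return hardest(pos_losses, num_pos), hardest(neg_losses, num_neg)


pos_idx, neg_idx = ohem_sample([0.1, 0.9, 0.5], [0.2, 0.05, 0.7, 0.3],
                               num=4, pos_fraction=0.5)
print(pos_idx, neg_idx)
```

Because the budget is split by pos_fraction before the hardest examples are picked, the positive/negative ratio stays controlled while easy examples are left out.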

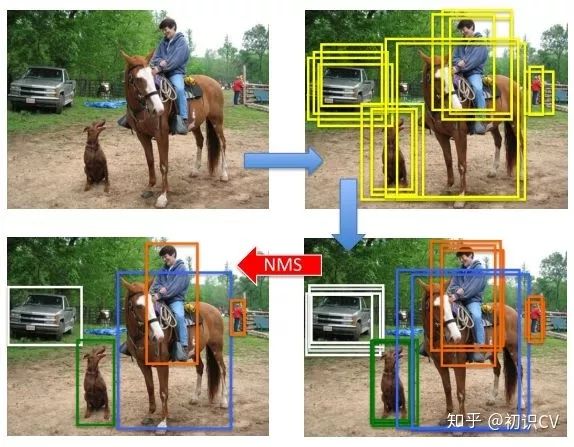

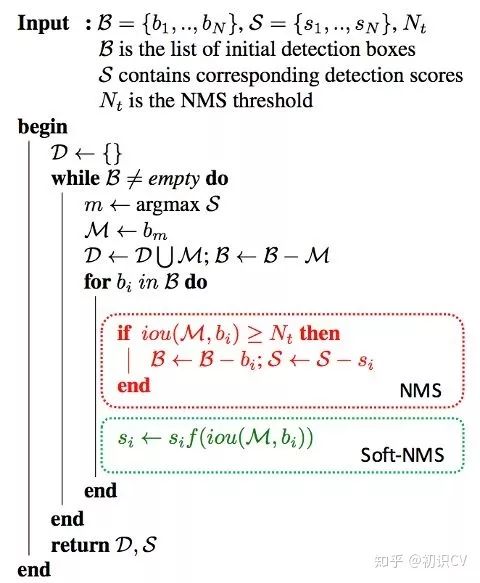

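The function in the formula above is the softening function, usually taken to be linear or Gaussian; the latter works slightly better. Along with this gain, Soft-NMS also introduces some hyperparameters, which have to be tuned by trial for each dataset to find the best configuration.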
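As a rough illustration of the two softening functions, the sketch below decays the scores of overlapping boxes instead of deleting them. The names `iou_thr` and `min_score` follow the soft_nms example shown in the config comments later in this article; `sigma` and the function `soft_nms` itself are assumptions made for this sketch.

```python
import math


def iou(a, b):
    """IoU of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def soft_nms(boxes, scores, method='gaussian', iou_thr=0.5, sigma=0.5,
             min_score=0.05):
    """Return (index, decayed_score) pairs in the order boxes are selected."""
    scores = list(scores)
    remaining = list(range(len(boxes)))
    keep = []
    while remaining:
        best = max(remaining, key=lambda i: scores[i])
        remaining.remove(best)
        keep.append((best, scores[best]))
        survivors = []
        for i in remaining:
            overlap = iou(boxes[best], boxes[i])
            if method == 'linear':
                # Linear: decay only boxes overlapping more than iou_thr.
                weight = 1.0 - overlap if overlap > iou_thr else 1.0
            else:
                # Gaussian: smooth decay for every overlapping box.
                weight = math.exp(-(overlap ** 2) / sigma)
            scores[i] *= weight
            if scores[i] >= min_score:
                survivors.append(i)
        remaining = survivors
    return keep


boxes = [(0, 0, 10, 10), (1, 0, 11, 10), (50, 50, 60, 60)]
print(soft_nms(boxes, [0.9, 0.8, 0.7], method='linear'))
print(soft_nms(boxes, [0.9, 0.8, 0.7], method='gaussian'))
```

In both variants the heavily overlapping second box survives with a reduced score, whereas hard NMS at the same threshold would discard it.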

    test_cfg = dict(
        rpn=dict(
            nms_across_levels=False,
            nms_pre=1000,
            nms_post=1000,
            max_num=1000,
            nms_thr=0.7,
            min_bbox_size=0),
        rcnn=dict(
            score_thr=0.05, nms=dict(type='nms', iou_thr=0.5), max_per_img=20))  # soft_nms can be used here instead

Better priors (YOLOv2): use clustering to collect statistics on the sizes and aspect ratios of the annotated boxes in the data, so that the anchor box generation settings can be chosen better.
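A small sketch of the clustering idea: k-means over annotated box widths and heights, using 1 - IoU as the distance so that large boxes do not dominate. The function names, the toy data, and the fixed seed are assumptions made for illustration.

```python
import random


def wh_iou(a, b):
    """IoU of two (w, h) boxes aligned at a common corner."""
    inter = min(a[0], b[0]) * min(a[1], b[1])
    return inter / (a[0] * a[1] + b[0] * b[1] - inter)


def kmeans_anchors(box_whs, k, iters=50, seed=0):
    """Cluster (w, h) pairs; the nearest center is the one with highest IoU."""
    rng = random.Random(seed)
    centers = rng.sample(box_whs, k)
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for wh in box_whs:
            nearest = max(range(k), key=lambda c: wh_iou(wh, centers[c]))
            clusters[nearest].append(wh)
        new_centers = [
            (sum(w for w, _ in cl) / len(cl), sum(h for _, h in cl) / len(cl))
            if cl else centers[c]
            for c, cl in enumerate(clusters)
        ]
        if new_centers == centers:
            break
        centers = new_centers
    # Sort by area so the output order is stable.
    return sorted(centers, key=lambda wh: wh[0] * wh[1])


# Toy annotation sizes: three small tall boxes and three large wide boxes.
whs = [(10, 20), (12, 22), (11, 19), (100, 50), (110, 55), (95, 48)]
for w, h in kmeans_anchors(whs, k=2):
    print(round(w, 1), round(h, 1), 'aspect ratio w/h =', round(w / h, 2))
```

The resulting cluster centers suggest which sizes and aspect ratios to feed into settings such as anchor_scales and anchor_ratios.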

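Better pre-trained models: the backbone of a detection model is usually initialized from a model trained on ImageNet (typically ImageNet-1k); pre-training the backbone on a larger dataset (ImageNet-5k) also helps accuracy.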

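Hyperparameter tuning: some work has also found that adjusting the IoU threshold in NMS (from 0.3 to 0.5) improves accuracy, but there is no reference for the best configuration yet.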

1. Code walkthrough by section

1.1 faster_rcnn_r50_fpn_1x.py:

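    # model settings
    model = dict(
        type='FasterRCNN',  # model type
        pretrained='modelzoo://resnet50',  # pre-trained model: ImageNet ResNet-50
        backbone=dict(
            type='ResNet',  # backbone type
            depth=50,  # network depth
            num_stages=4,  # number of ResNet stages
            out_indices=(0, 1, 2, 3),  # indices of the stages whose outputs are used
            frozen_stages=1,  # number of frozen stages, i.e. stages whose parameters are not updated; -1 means all stages are updated
            style='pytorch'),  # network style: with 'pytorch' the stride-2 layer is the 3x3 conv; with 'caffe' it is the first 1x1 conv
        neck=dict(
            type='FPN',  # neck type
            in_channels=[256, 512, 1024, 2048],  # channels of each input stage
            out_channels=256,  # channels of the output feature maps
            num_outs=5),  # number of output feature maps
        rpn_head=dict(
            type='RPNHead',  # RPN type
            in_channels=256,  # input channels of the RPN
            feat_channels=256,  # channels of the feature layer
            anchor_scales=[8],  # anchor baselen, baselen = sqrt(w*h), where w and h are the anchor width and height
            anchor_ratios=[0.5, 1.0, 2.0],  # anchor aspect ratios
            anchor_strides=[4, 8, 16, 32, 64],  # anchor stride on each feature level (relative to the input image)
            target_means=[.0, .0, .0, .0],  # means
            target_stds=[1.0, 1.0, 1.0, 1.0],  # standard deviations
            use_sigmoid_cls=True),  # whether to classify with sigmoid; if False, softmax is used
        bbox_roi_extractor=dict(
            type='SingleRoIExtractor',  # RoIExtractor type
            roi_layer=dict(type='RoIAlign', out_size=7, sample_num=2),  # RoI layer settings: type RoIAlign, output size 7, 2 samples
            out_channels=256,  # output channels
            featmap_strides=[4, 8, 16, 32]),  # strides of the feature maps
        bbox_head=dict(
            type='SharedFCBBoxHead',  # type of the fully connected head
            num_fcs=2,  # number of fully connected layers
            in_channels=256,  # input channels
            fc_out_channels=1024,  # output channels
            roi_feat_size=7,  # RoI feature size
            num_classes=81,  # number of classes + 1; the +1 is the background class
            target_means=[0., 0., 0., 0.],  # means
            target_stds=[0.1, 0.1, 0.2, 0.2],  # standard deviations
            reg_class_agnostic=False))  # whether to predict class-agnostically: the box is output considering only whether it is foreground, and is classified afterwards from its class scores in the network, so one box can correspond to several classes
    # model training and testing settings
    train_cfg = dict(
        rpn=dict(
            assigner=dict(
                type='MaxIoUAssigner',  # positive/negative assignment for the RPN
                pos_iou_thr=0.7,  # IoU threshold for positives
                neg_iou_thr=0.3,  # IoU threshold for negatives
                min_pos_iou=0.3,  # minimum IoU for a positive. If the highest IoU among the anchors assigned to a ground truth is below 0.3, all of those anchors are ignored; otherwise the anchor with the highest IoU is kept
                ignore_iof_thr=-1),  # threshold for ignoring bboxes, used when the ground truth contains boxes to be ignored; -1 means none are ignored
            sampler=dict(
                type='RandomSampler',  # type of the positive/negative sampler
                num=256,  # number of positives and negatives to sample
                pos_fraction=0.5,  # fraction of positives
                neg_pos_ub=-1,  # upper bound on the negative-to-positive ratio; negatives beyond it are dropped, -1 means no limit
                add_gt_as_proposals=False),  # whether to add the ground truth to the proposals as positives
            allowed_border=0,  # number of pixels a box is allowed to extend beyond the border
            pos_weight=-1,  # weight of positives; -1 keeps the original weight
            smoothl1_beta=1 / 9.0,  # smooth L1 coefficient
            debug=False),  # debug mode
        rcnn=dict(
            assigner=dict(
                type='MaxIoUAssigner',  # positive/negative assignment for the R-CNN
                pos_iou_thr=0.5,  # IoU threshold for positives
                neg_iou_thr=0.5,  # IoU threshold for negatives
                min_pos_iou=0.5,  # minimum IoU for a positive. If the highest IoU among the boxes assigned to a ground truth is below this value, all of them are ignored; otherwise the box with the highest IoU is kept
                ignore_iof_thr=-1),  # threshold for ignoring bboxes, used when the ground truth contains boxes to be ignored; -1 means none are ignored
            sampler=dict(
                type='RandomSampler',  # type of the positive/negative sampler
                num=512,  # number of positives and negatives to sample
                pos_fraction=0.25,  # fraction of positives
                neg_pos_ub=-1,  # upper bound on the negative-to-positive ratio; negatives beyond it are dropped, -1 means no limit
                add_gt_as_proposals=True),  # whether to add the ground truth to the proposals as positives
            pos_weight=-1,  # weight of positives; -1 keeps the original weight
            debug=False))  # debug mode
    test_cfg = dict(
        rpn=dict(  # RPN settings at inference time
            nms_across_levels=False,  # whether to run NMS across all FPN levels
            nms_pre=2000,  # number of top-scoring proposals kept before NMS
            nms_post=2000,  # number of top-scoring proposals kept after NMS
            max_num=2000,  # number of proposals kept after post-processing
            nms_thr=0.7,  # NMS threshold
            min_bbox_size=0),  # minimum bbox size
        rcnn=dict(
            score_thr=0.05, nms=dict(type='nms', iou_thr=0.5), max_per_img=100)  # max_per_img is the number of detected bboxes finally output
        # soft-nms is also supported for rcnn testing
        # e.g., nms=dict(type='soft_nms', iou_thr=0.5, min_score=0.05)  # soft_nms parameters
    )
    # dataset settings
    dataset_type = 'CocoDataset'  # dataset type
    data_root = 'data/coco/'  # dataset root directory
    img_norm_cfg = dict(
        mean=[123.675, 116.28, 103.53], std=[58.395, 57.12, 57.375], to_rgb=True)  # input normalization: subtract the mean and divide by std; to_rgb converts BGR to RGB
    data = dict(
        imgs_per_gpu=2,  # number of images processed per GPU
        workers_per_gpu=2,  # number of data-loading workers per GPU
        train=dict(
            type=dataset_type,  # dataset type
            ann_file=data_root + 'annotations/instances_train2017.json',  # path of the annotation file
            img_prefix=data_root + 'train2017/',  # path of the images
            img_scale=(1333, 800),  # input image size: long side at most 1333, short side at most 800
            img_norm_cfg=img_norm_cfg,  # image normalization settings
            size_divisor=32,  # smallest size unit when resizing; 32 means every image ends up with sides that are multiples of 32
            flip_ratio=0.5,  # probability of a random horizontal flip
            with_mask=False,  # whether to load masks for training
            with_crowd=True,  # whether to include difficult samples in training
            with_label=True),  # whether to load labels for training
        val=dict(
            type=dataset_type,  # same as above
            ann_file=data_root + 'annotations/instances_val2017.json',  # same as above
            img_prefix=data_root + 'val2017/',  # same as above
            img_scale=(1333, 800),  # same as above
            img_norm_cfg=img_norm_cfg,  # same as above
            size_divisor=32,  # same as above
            flip_ratio=0,  # same as above
            with_mask=False,  # same as above
            with_crowd=True,  # same as above
            with_label=True),  # same as above
        test=dict(
            type=dataset_type,  # same as above
            ann_file=data_root + 'annotations/instances_val2017.json',  # same as above
            img_prefix=data_root + 'val2017/',  # same as above
            img_scale=(1333, 800),  # same as above
            img_norm_cfg=img_norm_cfg,  # same as above
            size_divisor=32,  # same as above
            flip_ratio=0,  # same as above
            with_mask=False,  # same as above
            with_label=False,  # same as above
            test_mode=True))  # same as above
    # optimizer
    optimizer = dict(type='SGD', lr=0.02, momentum=0.9, weight_decay=0.0001)  # optimizer settings: lr is the learning rate, momentum the momentum factor, weight_decay the weight decay factor
    optimizer_config = dict(grad_clip=dict(max_norm=35, norm_type=2))  # gradient clipping settings
    # learning policy
    lr_config = dict(
        policy='step',  # schedule policy
        warmup='linear',  # how the learning rate is increased at the start; 'linear' increases it linearly
        warmup_iters=500,  # the learning rate is increased gradually over the first 500 iterations
        warmup_ratio=1.0 / 3,  # starting learning rate, as a fraction of lr
        step=[8, 11])  # lower the learning rate at epochs 8 and 11
    checkpoint_config = dict(interval=1)  # save a checkpoint every epoch
    # yapf:disable
    log_config = dict(
        interval=50,  # log every 50 batches
        hooks=[
            dict(type='TextLoggerHook'),  # style of the console output
            # dict(type='TensorboardLoggerHook')
        ])
    # yapf:enable
    # runtime settings
    total_epochs = 12  # maximum number of epochs
    dist_params = dict(backend='nccl')  # distributed training settings
    log_level = 'INFO'  # verbosity level of the output
    work_dir = './work_dirs/faster_rcnn_r50_fpn_1x'  # directory where logs and checkpoints are stored
    load_from = None  # path of the model to load; None means loading from the pre-trained model
    resume_from = None  # path of the checkpoint to resume training from
    workflow = [('train', 1)]  # workflow: training only, one epoch per cycle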
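To see how the lr_config values above interact, here is a minimal sketch of a step schedule with linear warmup. It is written from the parameter meanings given in the comments; the decay factor `gamma=0.1` and the 0-based epoch index are assumptions, and `learning_rate` is a hypothetical helper rather than the library's hook.

```python
def learning_rate(epoch, cur_iter, base_lr=0.02, steps=(8, 11), gamma=0.1,
                  warmup_iters=500, warmup_ratio=1.0 / 3):
    """Step decay by epoch, with linear warmup over the first iterations.

    epoch is the 0-based epoch index, cur_iter the global iteration count.
    """
    # Multiply by gamma once for every step boundary already passed.
    lr = base_lr * gamma ** sum(epoch >= s for s in steps)
    if cur_iter < warmup_iters:
        # Start at warmup_ratio * lr and grow linearly to the full lr.
        k = (1 - cur_iter / warmup_iters) * (1 - warmup_ratio)
        lr *= 1 - k
    return lr


for epoch, it in [(0, 0), (0, 250), (0, 500), (8, 60000), (11, 82000)]:
    print(epoch, it, learning_rate(epoch, it))
```

With the values from the config, training starts at one third of 0.02, reaches 0.02 after 500 iterations, and is reduced at the two step epochs.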

1.2 cascade_rcnn_r50_fpn_1x.py

    # model settings
    model = dict(
        type='CascadeRCNN',
        num_stages=3,  # number of R-CNN stages; 1 in Faster R-CNN
        pretrained='modelzoo://resnet50',
        backbone=dict(
            type='ResNet',
            depth=50,
            num_stages=4,
            out_indices=(0, 1, 2, 3),
            frozen_stages=1,
            style='pytorch'),
        neck=dict(
            type='FPN',
            in_channels=[256, 512, 1024, 2048],
            out_channels=256,
            num_outs=5),
        rpn_head=dict(
            type='RPNHead',
            in_channels=256,
            feat_channels=256,
            anchor_scales=[8],
            anchor_ratios=[0.5, 1.0, 2.0],
            anchor_strides=[4, 8, 16, 32, 64],
            target_means=[.0, .0, .0, .0],
            target_stds=[1.0, 1.0, 1.0, 1.0],
            use_sigmoid_cls=True),
        bbox_roi_extractor=dict(
            type='SingleRoIExtractor',
            roi_layer=dict(type='RoIAlign', out_size=7, sample_num=2),
            out_channels=256,
            featmap_strides=[4, 8, 16, 32]),
        bbox_head=[
            dict(
                type='SharedFCBBoxHead',
                num_fcs=2,
                in_channels=256,
                fc_out_channels=1024,
                roi_feat_size=7,
                num_classes=81,
                target_means=[0., 0., 0., 0.],
                target_stds=[0.1, 0.1, 0.2, 0.2],
                reg_class_agnostic=True),
            dict(
                type='SharedFCBBoxHead',
                num_fcs=2,
                in_channels=256,
                fc_out_channels=1024,
                roi_feat_size=7,
                num_classes=81,
                target_means=[0., 0., 0., 0.],
                target_stds=[0.05, 0.05, 0.1, 0.1],
                reg_class_agnostic=True),
            dict(
                type='SharedFCBBoxHead',
                num_fcs=2,
                in_channels=256,
                fc_out_channels=1024,
                roi_feat_size=7,
                num_classes=81,
                target_means=[0., 0., 0., 0.],
                target_stds=[0.033, 0.033, 0.067, 0.067],
                reg_class_agnostic=True)
        ])
    # model training and testing settings
    train_cfg = dict(
        rpn=dict(
            assigner=dict(
                type='MaxIoUAssigner',
                pos_iou_thr=0.7,
                neg_iou_thr=0.3,
                min_pos_iou=0.3,
                ignore_iof_thr=-1),
            sampler=dict(
                type='RandomSampler',
                num=256,
                pos_fraction=0.5,
                neg_pos_ub=-1,
                add_gt_as_proposals=False),
            allowed_border=0,
            pos_weight=-1,
            smoothl1_beta=1 / 9.0,
            debug=False),
        rcnn=[  # note: there are 3 R-CNN modules here, matching the number of R-CNN stages set at the top
            dict(
                assigner=dict(
                    type='MaxIoUAssigner',
                    pos_iou_thr=0.5,
                    neg_iou_thr=0.5,
                    min_pos_iou=0.5,
                    ignore_iof_thr=-1),
                sampler=dict(
                    type='RandomSampler',
                    num=512,
                    pos_fraction=0.25,
                    neg_pos_ub=-1,
                    add_gt_as_proposals=True),
                pos_weight=-1,
                debug=False),
            dict(
                assigner=dict(
                    type='MaxIoUAssigner',
                    pos_iou_thr=0.6,
                    neg_iou_thr=0.6,
                    min_pos_iou=0.6,
                    ignore_iof_thr=-1),
                sampler=dict(
                    type='RandomSampler',
                    num=512,
                    pos_fraction=0.25,
                    neg_pos_ub=-1,
                    add_gt_as_proposals=True),
                pos_weight=-1,
                debug=False),
            dict(
                assigner=dict(
                    type='MaxIoUAssigner',
                    pos_iou_thr=0.7,
                    neg_iou_thr=0.7,
                    min_pos_iou=0.7,
                    ignore_iof_thr=-1),
                sampler=dict(
                    type='RandomSampler',
                    num=512,
                    pos_fraction=0.25,
                    neg_pos_ub=-1,
                    add_gt_as_proposals=True),
                pos_weight=-1,
                debug=False)
        ],
        stage_loss_weights=[1, 0.5, 0.25])  # loss weights of the 3 R-CNN stages
    test_cfg = dict(
        rpn=dict(
            nms_across_levels=False,
            nms_pre=2000,
            nms_post=2000,
            max_num=2000,
            nms_thr=0.7,
            min_bbox_size=0),
        rcnn=dict(
            score_thr=0.05, nms=dict(type='nms', iou_thr=0.5), max_per_img=100),
        keep_all_stages=False)  # whether to keep the results of all stages
    # dataset settings
    dataset_type = 'CocoDataset'
    data_root = 'data/coco/'
    img_norm_cfg = dict(
        mean=[123.675, 116.28, 103.53], std=[58.395, 57.12, 57.375], to_rgb=True)
    data = dict(
        imgs_per_gpu=2,
        workers_per_gpu=2,
        train=dict(
            type=dataset_type,
            ann_file=data_root + 'annotations/instances_train2017.json',
            img_prefix=data_root + 'train2017/',
            img_scale=(1333, 800),
            img_norm_cfg=img_norm_cfg,
            size_divisor=32,
            flip_ratio=0.5,
            with_mask=False,
            with_crowd=True,
            with_label=True),
        val=dict(
            type=dataset_type,
            ann_file=data_root + 'annotations/instances_val2017.json',
            img_prefix=data_root + 'val2017/',
            img_scale=(1333, 800),
            img_norm_cfg=img_norm_cfg,
            size_divisor=32,
            flip_ratio=0,
            with_mask=False,
            with_crowd=True,
            with_label=True),
        test=dict(
            type=dataset_type,
            ann_file=data_root + 'annotations/instances_val2017.json',
            img_prefix=data_root + 'val2017/',
            img_scale=(1333, 800),
            img_norm_cfg=img_norm_cfg,
            size_divisor=32,
            flip_ratio=0,
            with_mask=False,
            with_label=False,
            test_mode=True))
    # optimizer
    optimizer = dict(type='SGD', lr=0.02, momentum=0.9, weight_decay=0.0001)
    optimizer_config = dict(grad_clip=dict(max_norm=35, norm_type=2))
    # learning policy
    lr_config = dict(
        policy='step',
        warmup='linear',
        warmup_iters=500,
        warmup_ratio=1.0 / 3,
        step=[8, 11])
    checkpoint_config = dict(interval=1)
    # yapf:disable
    log_config = dict(
        interval=50,
        hooks=[
            dict(type='TextLoggerHook'),
            # dict(type='TensorboardLoggerHook')
        ])
    # yapf:enable
    # runtime settings
    total_epochs = 12
    dist_params = dict(backend='nccl')
    log_level = 'INFO'
    work_dir = './work_dirs/cascade_rcnn_r50_fpn_1x'
    load_from = None
    resume_from = None
    workflow = [('train', 1)]
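The three R-CNN stages differ mainly in their IoU thresholds and in how much their loss counts. The sketch below labels a fixed set of RoIs under each stage's thresholds and combines per-stage losses with stage_loss_weights. It is a simplification: `assign_labels` and `total_loss` are hypothetical helpers, the min_pos_iou rule is left out, and in a real cascade each stage sees boxes refined by the previous one, so the IoUs themselves change between stages.

```python
def assign_labels(max_ious, pos_iou_thr, neg_iou_thr):
    """Label each RoI from its best IoU with any ground-truth box.

    1 = positive, 0 = negative, -1 = ignored (between the two thresholds).
    """
    labels = []
    for v in max_ious:
        if v >= pos_iou_thr:
            labels.append(1)
        elif v < neg_iou_thr:
            labels.append(0)
        else:
            labels.append(-1)
    return labels


stage_thresholds = [0.5, 0.6, 0.7]   # pos_iou_thr = neg_iou_thr per stage
stage_loss_weights = [1, 0.5, 0.25]


def total_loss(stage_losses, weights=stage_loss_weights):
    """Weighted sum of the per-stage losses."""
    return sum(w * l for w, l in zip(weights, stage_losses))


max_ious = [0.45, 0.55, 0.65, 0.75]  # illustrative best IoU of four RoIs
for thr in stage_thresholds:
    print(thr, assign_labels(max_ious, thr, thr))
print(total_loss([1.0, 1.0, 1.0]))
```

Later stages accept fewer RoIs as positives, which is why they are trained on progressively better-localized boxes.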

2. Code for the tricks, cascade_rcnn_r50_fpn_1x.py:

    # fp16 settings
    fp16 = dict(loss_scale=512.)
    # model settings
    model = dict(
        type='CascadeRCNN',
        num_stages=3,
        pretrained='torchvision://resnet50',
        backbone=dict(
            type='ResNet',
            depth=50,
            num_stages=4,
            out_indices=(0, 1, 2, 3),
            frozen_stages=1,
            style='pytorch',
            # dcn=dict(  # add deformable convolution to the last three blocks
            #     modulated=False, deformable_groups=1, fallback_on_stride=False),
            # stage_with_dcn=(False, True, True, True)
        ),
        neck=dict(
            type='FPN',
            in_channels=[256, 512, 1024, 2048],
            out_channels=256,
            num_outs=5),
        rpn_head=dict(
            type='RPNHead',
            in_channels=256,
            feat_channels=256,
            anchor_scales=[8],
            anchor_ratios=[0.2, 0.5, 1.0, 2.0, 5.0],  # added 0.2 and 5; a figure will be posted in a couple of days
            anchor_strides=[4, 8, 16, 32, 64],
            target_means=[.0, .0, .0, .0],
            target_stds=[1.0, 1.0, 1.0, 1.0],
            loss_cls=dict(
                type='FocalLoss', use_sigmoid=True, loss_weight=1.0),  # loss changed to balance hard/easy examples and the positive/negative ratio
            loss_bbox=dict(type='SmoothL1Loss', beta=1.0 / 9.0, loss_weight=1.0)),
        bbox_roi_extractor=dict(
            type='SingleRoIExtractor',
            roi_layer=dict(type='RoIAlign', out_size=7, sample_num=2),
            out_channels=256,
            featmap_strides=[4, 8, 16, 32]),
        bbox_head=[
            dict(
                type='SharedFCBBoxHead',
                num_fcs=2,
                in_channels=256,
                fc_out_channels=1024,
                roi_feat_size=7,
                num_classes=11,
                target_means=[0., 0., 0., 0.],
                target_stds=[0.1, 0.1, 0.2, 0.2],
                reg_class_agnostic=True,
                loss_cls=dict(
                    type='CrossEntropyLoss', use_sigmoid=False, loss_weight=1.0),
                loss_bbox=dict(type='SmoothL1Loss', beta=1.0, loss_weight=1.0)),
            dict(
                type='SharedFCBBoxHead',
                num_fcs=2,
                in_channels=256,
                fc_out_channels=1024,
                roi_feat_size=7,
                num_classes=11,
                target_means=[0., 0., 0., 0.],
                target_stds=[0.05, 0.05, 0.1, 0.1],
                reg_class_agnostic=True,
                loss_cls=dict(
                    type='CrossEntropyLoss', use_sigmoid=False, loss_weight=1.0),
                loss_bbox=dict(type='SmoothL1Loss', beta=1.0, loss_weight=1.0)),
            dict(
                type='SharedFCBBoxHead',
                num_fcs=2,
                in_channels=256,
                fc_out_channels=1024,
                roi_feat_size=7,
                num_classes=11,
                target_means=[0., 0., 0., 0.],
                target_stds=[0.033, 0.033, 0.067, 0.067],
                reg_class_agnostic=True,
                loss_cls=dict(
                    type='CrossEntropyLoss', use_sigmoid=False, loss_weight=1.0),
                loss_bbox=dict(type='SmoothL1Loss', beta=1.0, loss_weight=1.0))
        ])
    # model training and testing settings
    train_cfg = dict(
        rpn=dict(
            assigner=dict(
                type='MaxIoUAssigner',
                pos_iou_thr=0.7,
                neg_iou_thr=0.3,
                min_pos_iou=0.3,
                ignore_iof_thr=-1),
            sampler=dict(
                type='RandomSampler',
                num=256,
                pos_fraction=0.5,
                neg_pos_ub=-1,
                add_gt_as_proposals=False),
            allowed_border=0,
            pos_weight=-1,
            debug=False),
        rpn_proposal=dict(
            nms_across_levels=False,
            nms_pre=2000,
            nms_post=2000,
            max_num=2000,
            nms_thr=0.7,
            min_bbox_size=0),
        rcnn=[
            dict(
                assigner=dict(
                    type='MaxIoUAssigner',
                    pos_iou_thr=0.4,  # changed
                    neg_iou_thr=0.4,
                    min_pos_iou=0.4,
                    ignore_iof_thr=-1),
                sampler=dict(
                    type='OHEMSampler',
                    num=512,
                    pos_fraction=0.25,
                    neg_pos_ub=-1,
                    add_gt_as_proposals=True),
                pos_weight=-1,
                debug=False),
            dict(
                assigner=dict(
                    type='MaxIoUAssigner',
                    pos_iou_thr=0.5,
                    neg_iou_thr=0.5,
                    min_pos_iou=0.5,
                    ignore_iof_thr=-1),
                sampler=dict(
                    type='OHEMSampler',  # handles hard vs. easy examples and also the positive/negative ratio problem
                    num=512,
                    pos_fraction=0.25,
                    neg_pos_ub=-1,
                    add_gt_as_proposals=True),
                pos_weight=-1,
                debug=False),
            dict(
                assigner=dict(
                    type='MaxIoUAssigner',
                    pos_iou_thr=0.6,
                    neg_iou_thr=0.6,
                    min_pos_iou=0.6,
                    ignore_iof_thr=-1),
                sampler=dict(
                    type='OHEMSampler',
                    num=512,
                    pos_fraction=0.25,
                    neg_pos_ub=-1,
                    add_gt_as_proposals=True),
                pos_weight=-1,
                debug=False)
        ],
        stage_loss_weights=[1, 0.5, 0.25])
    test_cfg = dict(
        rpn=dict(
            nms_across_levels=False,
            nms_pre=1000,
            nms_post=1000,
            max_num=1000,
            nms_thr=0.7,
            min_bbox_size=0),
        rcnn=dict(
            score_thr=0.05, nms=dict(type='nms', iou_thr=0.5), max_per_img=20))  # soft_nms can be used here instead
    # dataset settings
    dataset_type = 'CocoDataset'
    data_root = '../../data/chongqing1_round1_train1_20191223/'
    img_norm_cfg = dict(
        mean=[123.675, 116.28, 103.53], std=[58.395, 57.12, 57.375], to_rgb=True)
    train_pipeline = [
        dict(type='LoadImageFromFile'),
        dict(type='LoadAnnotations', with_bbox=True),
        dict(type='Resize', img_scale=(492,658), keep_ratio=True),  # multiple scales can be used here: [(), ()]
        dict(type='RandomFlip', flip_ratio=0.5),
        dict(type='Normalize', **img_norm_cfg),
        dict(type='Pad', size_divisor=32),
        dict(type='DefaultFormatBundle'),
        dict(type='Collect', keys=['img', 'gt_bboxes', 'gt_labels']),
    ]
    test_pipeline = [
        dict(type='LoadImageFromFile'),
        dict(
            type='MultiScaleFlipAug',
            img_scale=(492,658),
            flip=False,
            transforms=[
                dict(type='Resize', keep_ratio=True),
                dict(type='RandomFlip'),
                dict(type='Normalize', **img_norm_cfg),
                dict(type='Pad', size_divisor=32),
                dict(type='ImageToTensor', keys=['img']),
                dict(type='Collect', keys=['img']),
            ])
    ]
    data = dict(
        imgs_per_gpu=8,  # the batch size is changed here: the number of images each GPU processes at a time
        workers_per_gpu=2,
        train=dict(
            type=dataset_type,
            ann_file=data_root + 'fixed_annotations.json',  # replace with your own JSON file
            img_prefix=data_root + 'images/',  # images directory
            pipeline=train_pipeline),
        val=dict(
            type=dataset_type,
            ann_file=data_root + 'fixed_annotations.json',
            img_prefix=data_root + 'images/',
            pipeline=test_pipeline),
        test=dict(
            type=dataset_type,
            ann_file=data_root + 'fixed_annotations.json',
            img_prefix=data_root + 'images/',
            pipeline=test_pipeline))
    # optimizer
    optimizer = dict(type='SGD', lr=0.001, momentum=0.9, weight_decay=0.0001)  # lr = 0.00125*batch_size; it must not be too large, or the gradients explode
    optimizer_config = dict(grad_clip=dict(max_norm=35, norm_type=2))
    # learning policy
    lr_config = dict(
        policy='step',
        warmup='linear',
        warmup_iters=500,
        warmup_ratio=1.0 / 3,
        step=[6, 12, 19])
    checkpoint_config = dict(interval=1)
    # yapf:disable
    log_config = dict(
        interval=64,
        hooks=[
            dict(type='TextLoggerHook'),  # style of the console output
            # dict(type='TensorboardLoggerHook')  # requires tensorflow and tensorboard to be installed
        ])
    # yapf:enable
    # runtime settings
    total_epochs = 20
    dist_params = dict(backend='nccl')
    log_level = 'INFO'
    work_dir = '../work_dirs/cascade_rcnn_r50_fpn_1x'  # log directory
    load_from = '../work_dirs/cascade_rcnn_r50_fpn_1x/latest.pth'  # checkpoint file to load
    # load_from = '../work_dirs/cascade_rcnn_r50_fpn_1x/cascade_rcnn_r50_coco_pretrained_weights_classes_11.pth'
    resume_from = None
    workflow = [('train', 1)]
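The config above swaps the RPN classification loss for FocalLoss to balance hard and easy examples. As a rough sketch of why that helps, here is the sigmoid focal loss for a single prediction. The values `gamma=2.0` and `alpha=0.25` are assumed defaults that the config does not set explicitly, and `sigmoid_focal_loss` is a standalone illustration rather than the library's implementation.

```python
import math


def sigmoid_focal_loss(logit, target, gamma=2.0, alpha=0.25):
    """Focal loss for one binary prediction; target is 0 or 1.

    (1 - p_t) ** gamma shrinks the loss of well-classified examples,
    and alpha balances positives against negatives.
    """
    p = 1.0 / (1.0 + math.exp(-logit))
    p_t = p if target == 1 else 1.0 - p
    alpha_t = alpha if target == 1 else 1.0 - alpha
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


easy_negative = sigmoid_focal_loss(-4.0, 0)  # confidently predicted background
hard_negative = sigmoid_focal_loss(2.0, 0)   # background scored as foreground
print(easy_negative, hard_negative)
```

The many easy background anchors contribute almost nothing, so the gradient is dominated by the few hard ones. With gamma=0 and alpha=0.5 the expression reduces to half of the ordinary cross-entropy.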
