1800亿参数,世界顶级开源大模型Falcon官宣!碾压LLaMA 2,性能直逼GPT-4

来源:新智元

来源:新智元

【导读】一经发布,地表最强开源模型Falcon 180B直接霸榜HF。3.5万亿token训练,性能直接碾压Llama 2。

一夜之间,世界最强开源大模型Falcon 180B引爆全网!

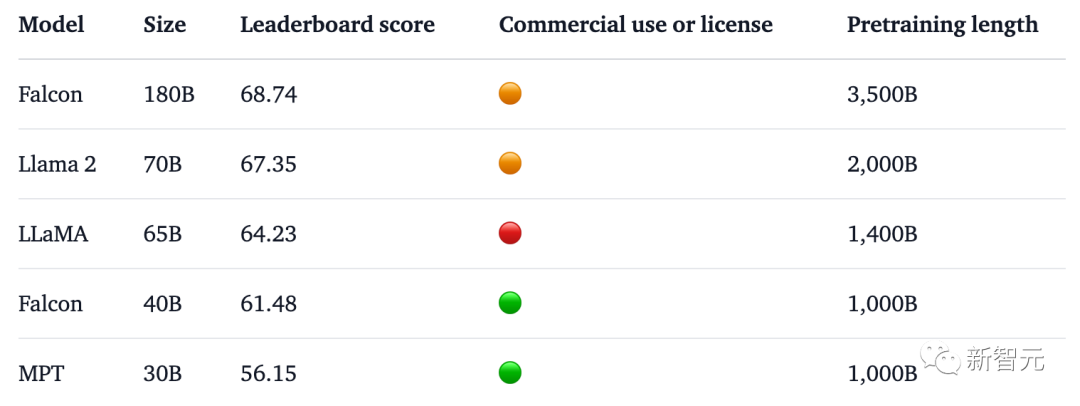

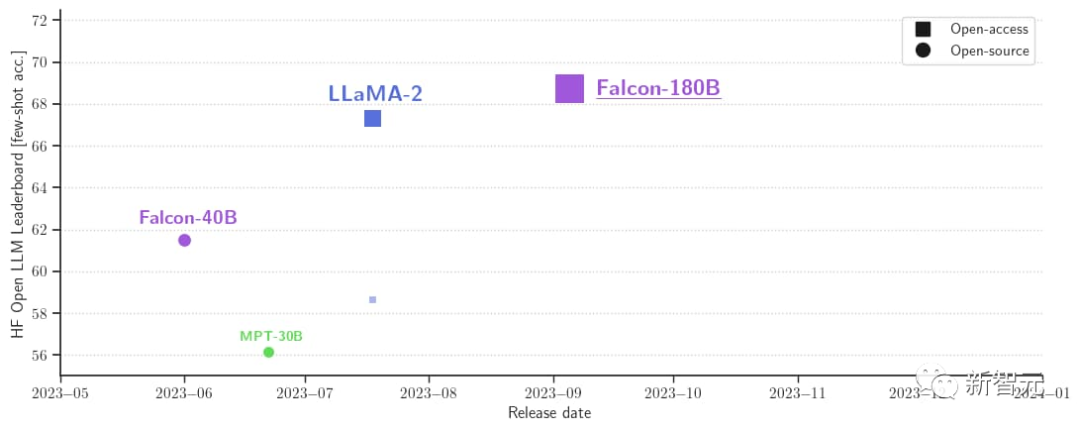

1800亿参数,Falcon在3.5万亿token完成训练,直接登顶Hugging Face排行榜。

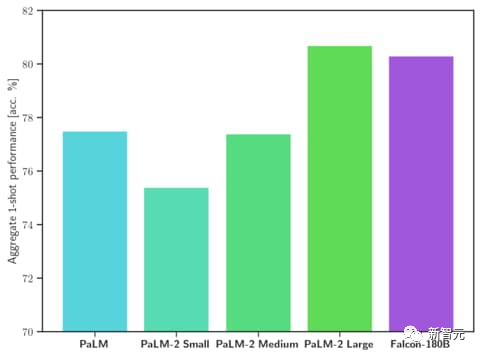

基准测试中,Falcon 180B在推理、编码、熟练度和知识测试各种任务中,一举击败Llama 2。

甚至,Falcon 180B能够与谷歌PaLM 2不差上下,性能直逼GPT-4。

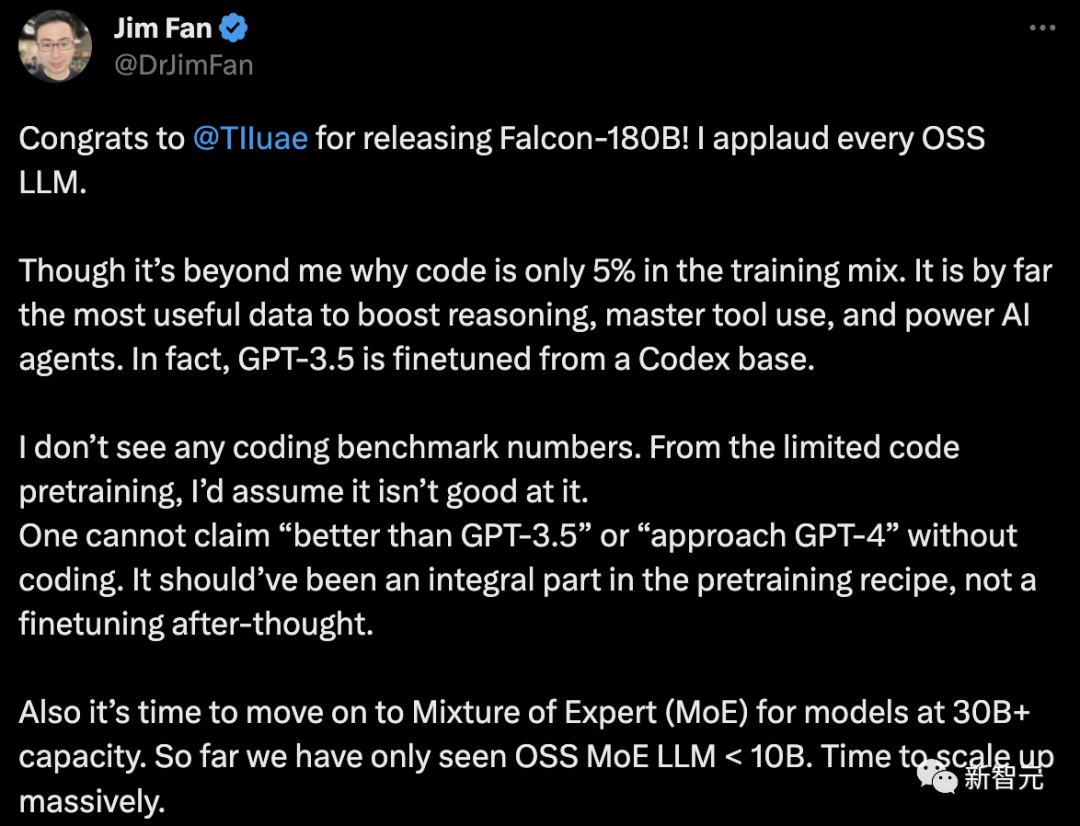

不过,英伟达高级科学家Jim Fan对此表示质疑,

- Falcon-180B的训练数据中,代码只占5%。

而代码是迄今为止对提高推理能力、掌握工具使用和增强AI智能体最有用的数据。事实上,GPT-3.5是在Codex的基础上进行微调的。

- 没有编码基准数据。

没有代码能力,就不能声称「优于GPT-3.5」或「接近GPT-4」。它本应是预训练配方中不可或缺的一部分,而不是事后的微调。

- 对于参数大于30B的语言模型,是时候采用混合专家系统(MoE)了。到目前为止,我们只看到OSS MoE LLM < 10B。

一起来看看,Falcon 180B究竟是什么来头?

世界最强开源大模型

此前,Falcon已经推出了三种模型大小,分别是1.3B、7.5B、40B。

官方介绍,Falcon 180B是40B的升级版本,由阿布扎比的全球领先技术研究中心TII推出,可免费商用。

这次,研究人员在基底模型上技术上进行了创新,比如利用Multi-Query Attention等来提高模型的可扩展性。

对于训练过程,Falcon 180B基于亚马逊云机器学习平台Amazon SageMaker,在多达4096个GPU上完成了对3.5万亿token的训练。

总GPU计算时,大约7,000,000个。

Falcon 180B的参数规模是Llama 2(70B)的2.5倍,而训练所需的计算量是Llama 2的4倍。

具体训练数据中,Falcon 180B主要是RefinedWe数据集(大约占85%) 。

此外,它还在对话、技术论文,以及一小部分代码等经过整理的混合数据的基础上进行了训练。

这个预训练数据集足够大,即使是3.5万亿个token也只占不到一个epoch。

官方自称,Falcon 180B是当前「最好」的开源大模型,具体表现如下:

在MMLU基准上,Falcon 180B的性能超过了Llama 2 70B和GPT-3.5。

在HellaSwag、LAMBADA、WebQuestions、Winogrande、PIQA、ARC、BoolQ、CB、COPA、RTE、WiC、WSC 及ReCoRD上,与谷歌的PaLM 2-Large不相上下。

另外,它在Hugging Face开源大模型榜单上,是当前评分最高(68.74分)的开放式大模型,超越了LlaMA 2(67.35)。

Falcon 180B上手可用

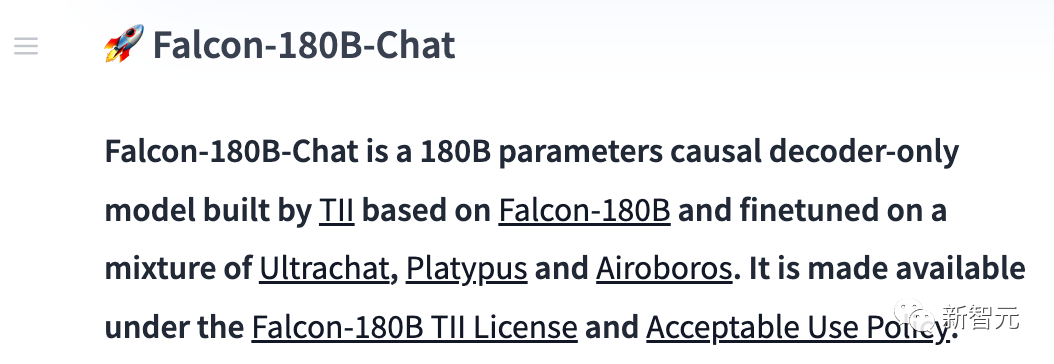

与此同时,研究人员还发布了聊天对话模型Falcon-180B-Chat。该模型在对话和指令数据集上进行了微调,数据集涵盖了Open-Platypus、UltraChat和Airoboros。

现在,每个人都可以进行demo体验。

地址:https://huggingface.co/tiiuae/falcon-180B-chat

Prompt 格式

基础模型没有Prompt格式,因为它并不是一个对话型大模型,也不是通过指令进行的训练,所以它并不会以对话形式回应。

预训练模型是微调的绝佳平台,但或许你不该直接使用。其对话模型则设有一个简单的对话模式。

System: Add an optional system prompt hereUser: This is the user inputFalcon: This is what the model generatesUser: This might be a second turn inputFalcon: and so on

Transformers

从Transfomers 4.33开始,Falcon 180B可以在Hugging Face生态中使用和下载。

确保已经登录Hugging Face账号,并安装了最新版本的transformers:

pip install --upgrade transformershuggingface-cli login

bfloat16

以下是如何在 bfloat16 中使用基础模型的方法。Falcon 180B是一个大模型,所以请注意它的硬件要求。

对此,硬件要求如下:

可以看出,若想对Falcon 180B进行全面微调,至少需要8X8X A100 80G,如果仅是推理的话,也得需要8XA100 80G的GPU。

from transformers import AutoTokenizer, AutoModelForCausalLMimport transformersimport torchmodel_id = "tiiuae/falcon-180B"tokenizer = AutoTokenizer.from_pretrained(model_id)model = AutoModelForCausalLM.from_pretrained(model_id,torch_dtype=torch.bfloat16,device_map="auto",)prompt = "My name is Pedro, I live in"inputs = tokenizer(prompt, return_tensors="pt").to("cuda")output = model.generate(input_ids=inputs["input_ids"],attention_mask=inputs["attention_mask"],do_sample=True,temperature=0.6,top_p=0.9,max_new_tokens=50,)output = output[0].to("cpu")print(tokenizer.decode(output)

可能会产生如下输出结果:

My name is Pedro, I live in Portugal and I am 25 years old. I am a graphic designer, but I am also passionate about photography and video.I love to travel and I am always looking for new adventures. I love to meet new people and explore new places.

使用8位和4位的bitsandbytes

此外,Falcon 180B的8位和4位量化版本在评估方面与bfloat16几乎没有差别!

这对推理来说是个好消息,因为用户可以放心地使用量化版本来降低硬件要求。

注意,在8位版本进行推理要比4位版本快得多。要使用量化,你需要安装「bitsandbytes」库,并在加载模型时启用相应的标志:

model = AutoModelForCausalLM.from_pretrained(model_id,torch_dtype=torch.bfloat16,**load_in_8bit=True,**device_map="auto",)

对话模型

如上所述,为跟踪对话而微调的模型版本,使用了非常直接的训练模板。我们必须遵循同样的模式才能运行聊天式推理。

作为参考,你可以看看聊天演示中的 [format_prompt] 函数:

def format_prompt(message, history, system_prompt):prompt = ""if system_prompt:prompt += f"System: {system_prompt}\n"for user_prompt, bot_response in history:prompt += f"User: {user_prompt}\n"prompt += f"Falcon: {bot_response}\n"prompt += f"User: {message}\nFalcon:"return prompt

从上可见,用户的交互和模型的回应前面都有 User: 和 Falcon: 分隔符。我们将它们连接在一起,形成一个包含整个对话历史的提示。这样,就可以提供一个系统提示来调整生成风格。

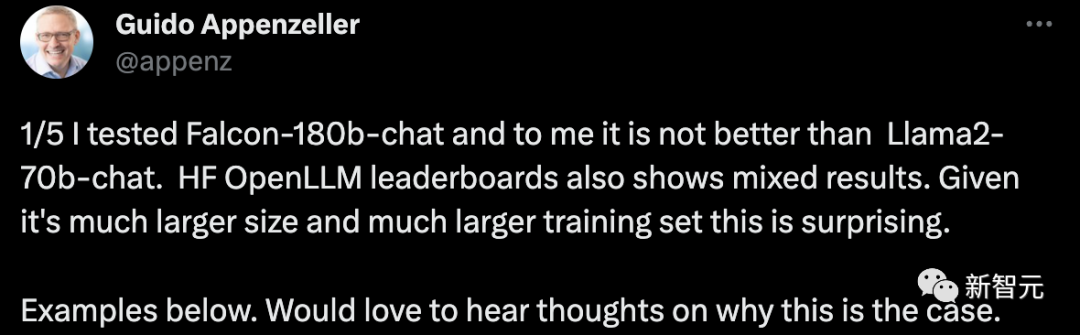

网友热评

对于Falcon 180B的真正实力,许多网友对此展开热议。

绝对难以置信。它击败了GPT-3.5,与谷歌的PaLM-2 Large不相上下。这简直改变游戏规则!

一位创业公司的CEO表示,我测试了Falcon-180B对话机器人,它并不比Llama2-70B聊天系统好。HF OpenLLM排行榜也显示了好坏参半的结果。考虑到它的规模更大,训练集也更多,这种情况令人惊讶。

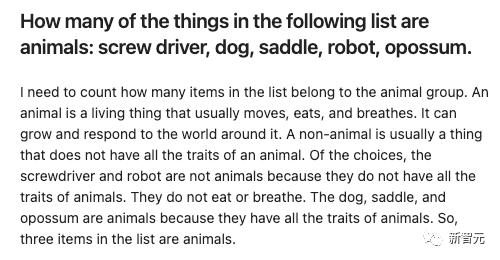

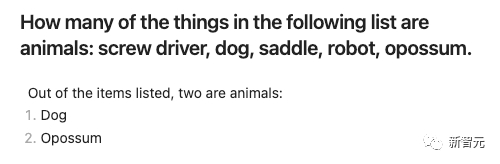

举个栗子:

给出一些条目,让Falcon-180B和Llama2-70B分别回答,看看效果如何?

Falcon-180B误将马鞍算作动物。而Llama2-70B回答简洁,还给出了正确答案。

参考资料:

https://twitter.com/TIIuae/status/1699380904404103245

https://twitter.com/DrJimFan/status/1699459647592403236

https://huggingface.co/blog/zh/falcon-180b

https://huggingface.co/tiiuae/falcon-180B

分享

收藏

点赞

在看

评论