PyTorch Made Easy: Vehicle Type and Color Recognition


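Hello everyone. I did not update this series last week, not because I did not want to, but because I had to prepare and process much of the data myself. The vehicle attribute data used here is one example: starting from the public BITVehicle_Dataset, I programmatically labeled more than 9,000 vehicle images with type and color attributes for this round of training. This article demonstrates the following points:

Defining and using the ResNet block structure

Designing a network for multiple classification tasks

The OpenVINO Python SDK

Chaining inference across multiple models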

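As mentioned in the introduction, the data comes from BITVehicle_Dataset, a public vehicle dataset that yields a lot of interesting data. It includes a file named VehicleInfo.mat, which holds the annotation for each vehicle: its ROI and its vehicle type. I read this file with Python and saved the cropped image of every ROI, which gave me the vehicle attribute dataset. The files are named as follows:

color_type_xxxx.jpg

color is the color class; there are 7 color classes

type is the vehicle type class; there are only 4 vehicle types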

    color_labels = ["white", "gray", "yellow", "red", "green", "blue", "black"]
    type_labels = ["car", "bus", "truck", "van"]

As before, a custom Dataset loads the data and handles preprocessing. I will not repeat that part here; the earlier articles in this series cover it in detail.
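As a small illustration of how the two labels could be recovered from a file name, here is a minimal sketch. It assumes that the `color` and `type` fields in `color_type_xxxx.jpg` are integer indices into `color_labels` and `type_labels`; the article does not say whether they are indices or names, and `parse_labels` is a hypothetical helper, not the author's code.

```python
import os

color_labels = ["white", "gray", "yellow", "red", "green", "blue", "black"]
type_labels = ["car", "bus", "truck", "van"]


def parse_labels(file_name):
    """Split 'color_type_xxxx.jpg' into (color_index, type_index).

    Assumption: the first two underscore-separated fields are integer
    indices into color_labels and type_labels.
    """
    stem = os.path.splitext(os.path.basename(file_name))[0]
    color_field, type_field = stem.split("_")[:2]
    color_index, type_index = int(color_field), int(type_field)
    if not 0 <= color_index < len(color_labels):
        raise ValueError("color index out of range: %d" % color_index)
    if not 0 <= type_index < len(type_labels):
        raise ValueError("type index out of range: %d" % type_index)
    return color_index, type_index


color_index, type_index = parse_labels("3_1_0042.jpg")
print(color_labels[color_index], type_labels[type_index])  # red bus
```

A custom Dataset could call such a helper in `__getitem__` to build the `color` and `type` entries of each sample.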

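Earlier articles in this series demonstrated the basic structure of a convolutional neural network and the stacked-convolution structure of VGG. Here I use the ResNet block structure to build a simple network that classifies both the color and the vehicle type of an input vehicle image. The complete vehicle attribute recognition network is as follows: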

    VehicleAttributesResNet(
      (cnn_layers): Sequential(
        (0): Conv2d(3, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
        (1): ReLU()
        (2): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
        (3): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
        (4): ResidualBlock(
          (skip): Sequential(
            (0): Conv2d(32, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)
            (1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          )
          (block): Sequential(
            (0): Conv2d(32, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
            (1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
            (2): ReLU()
            (3): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
            (4): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          )
        )
        (5): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
        (6): ResidualBlock(
          (skip): Sequential(
            (0): Conv2d(64, 128, kernel_size=(1, 1), stride=(1, 1), bias=False)
            (1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          )
          (block): Sequential(
            (0): Conv2d(64, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
            (1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
            (2): ReLU()
            (3): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)
            (4): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
          )
        )
        (7): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
      )
      (global_max_pooling): AdaptiveMaxPool2d(output_size=(1, 1))
      (color_fc_layers): Sequential(
        (0): Linear(in_features=128, out_features=7, bias=True)
        (1): Sigmoid()
      )
      (type_fc_layers): Sequential(
        (0): Linear(in_features=128, out_features=4, bias=True)
      )
    )

The residual block is implemented as follows:

    import torch
    import torch.nn.functional as F


    class ResidualBlock(torch.nn.Module):
        def __init__(self, in_channels, out_channels, stride=1):
            """
            Args:
                in_channels (int): Number of input channels.
                out_channels (int): Number of output channels.
                stride (int): Stride of the first convolution and of the skip path.
            """
            super().__init__()

            # Identity shortcut by default: an empty Sequential returns its input.
            self.skip = torch.nn.Sequential()

            # Project the shortcut with a 1x1 convolution when the shape changes.
            if stride != 1 or in_channels != out_channels:
                self.skip = torch.nn.Sequential(
                    torch.nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=stride, bias=False),
                    torch.nn.BatchNorm2d(out_channels))

            self.block = torch.nn.Sequential(
                # Same stride as the skip path, so both outputs have the same size.
                torch.nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1, stride=stride, bias=False),
                torch.nn.BatchNorm2d(out_channels),
                torch.nn.ReLU(),
                torch.nn.Conv2d(out_channels, out_channels, kernel_size=3, padding=1, stride=1, bias=False),
                torch.nn.BatchNorm2d(out_channels))

        def forward(self, x):
            out = self.block(x)
            identity = self.skip(x)
            out = out + identity  # residual connection
            return F.relu(out)

The network model is implemented as follows. (I forgot to post the model code last time, so I am including it here. The code from the previous article is similar to this one, and I hope it helps resolve a number of questions!)

    import torch


    class VehicleAttributesResNet(torch.nn.Module):
        def __init__(self):
            super().__init__()
            self.cnn_layers = torch.nn.Sequential(
                # Convolution layers; the sizes below assume a 3x72x72 input image.
                torch.nn.Conv2d(3, 32, 3, padding=1),
                torch.nn.ReLU(),
                torch.nn.BatchNorm2d(32),
                torch.nn.MaxPool2d(2, 2),  # -> 32x36x36

                ResidualBlock(32, 64),
                torch.nn.MaxPool2d(2, 2),  # -> 64x18x18

                ResidualBlock(64, 128),
                torch.nn.MaxPool2d(2, 2),  # -> 128x9x9
            )
            # Global max pooling: N x 128 x 9 x 9 -> N x 128 x 1 x 1
            self.global_max_pooling = torch.nn.AdaptiveMaxPool2d((1, 1))

            # Color head: 7 classes. The Sigmoid squashes the scores to (0, 1).
            self.color_fc_layers = torch.nn.Sequential(
                torch.nn.Linear(128, 7),
                torch.nn.Sigmoid()
            )

            # Vehicle type head: 4 classes.
            self.type_fc_layers = torch.nn.Sequential(
                torch.nn.Linear(128, 4),
            )

        def forward(self, x):
            # Stacked convolution layers.
            x = self.cnn_layers(x)

            # Flatten the pooled features: N x 128 x 1 x 1 -> N x 128
            B = x.size(0)
            out = self.global_max_pooling(x).view(B, -1)

            # Fully connected heads.
            out_color = self.color_fc_layers(out)
            out_type = self.type_fc_layers(out)
            return out_color, out_type
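The feature-map sizes flowing through `cnn_layers` can be checked with a little arithmetic. The sketch below assumes a square input and uses the standard output-size formula for a convolution or pooling window with dilation 1; `conv_out` and `trace_feature_map` are hypothetical helper names introduced only for this illustration. The 72x72 case matches the input size used by the inference code in this article.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output size of a convolution or pooling window along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_feature_map(size):
    """Follow the spatial size through cnn_layers for a square input."""
    sizes = [size]
    size = conv_out(size, kernel=3, padding=1)      # Conv2d(3, 32, 3, padding=1)
    size = conv_out(size, kernel=2, stride=2)       # MaxPool2d(2, 2)
    sizes.append(size)
    for _ in range(2):                              # two ResidualBlock + MaxPool2d stages
        size = conv_out(size, kernel=3, padding=1)  # 3x3 convs with padding=1 keep the size
        size = conv_out(size, kernel=2, stride=2)   # MaxPool2d(2, 2) halves it
        sizes.append(size)
    return sizes


print(trace_feature_map(72))  # [72, 36, 18, 9]
print(trace_feature_map(64))  # [64, 32, 16, 8]
```

Because the network ends with global max pooling, either input size produces a 128-dimensional feature vector for the two heads.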

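Both branches are classification tasks, so each loss is computed with cross-entropy and the two losses are added together. The input format is NxCxHxW = Nx3x72x72. I trained for only 15 epochs and then saved the model as vehicle_attributes_model.pt. The training code is as follows: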

1# 训练模型的次数

2num_epochs = 15

3# optimizer = torch.optim.SGD(model.parameters(), lr=0.001)

4optimizer = torch.optim.Adam(model.parameters(), lr=1e-2)

5model.train()

6

7# 损失函数

8mse_loss = torch.nn.MSELoss()

9cross_loss = torch.nn.CrossEntropyLoss()

10index = 0

11for epoch in range(num_epochs):

12 train_loss = 0.0

13 for i_batch, sample_batched in enumerate(dataloader):

14 images_batch, color_batch, type_batch = \

15 sample_batched['image'], sample_batched['color'], sample_batched['type']

16 if train_on_gpu:

17 images_batch, color_batch, type_batch = images_batch.cuda(), color_batch.cuda(), type_batch.cuda()

18 optimizer.zero_grad()

19

20 # forward pass: compute predicted outputs by passing inputs to the model

21 m_color_out_, m_type_out_ = model(images_batch)

22 color_batch = color_batch.long()

23 type_batch = type_batch.long()

24

25 # calculate the batch loss

26 loss = cross_loss(m_color_out_, color_batch) + cross_loss(m_type_out_, type_batch)

27

28 # backward pass: compute gradient of the loss with respect to model parameters

29 loss.backward()

30

31 # perform a single optimization step (parameter update)

32 optimizer.step()

33

34 # update training loss

35 train_loss += loss.item()

36 if index % 100 == 0:

37 print('step: {} \tTraining Loss: {:.6f} '.format(index, loss.item()))

38 index += 1

39

40 # 计算平均损失

41 train_loss = train_loss / num_train_samples

42

43 # 显示训练集与验证集的损失函数

44 print('Epoch: {} \tTraining Loss: {:.6f} '.format(epoch, train_loss))

45

46# save model

47model.eval()

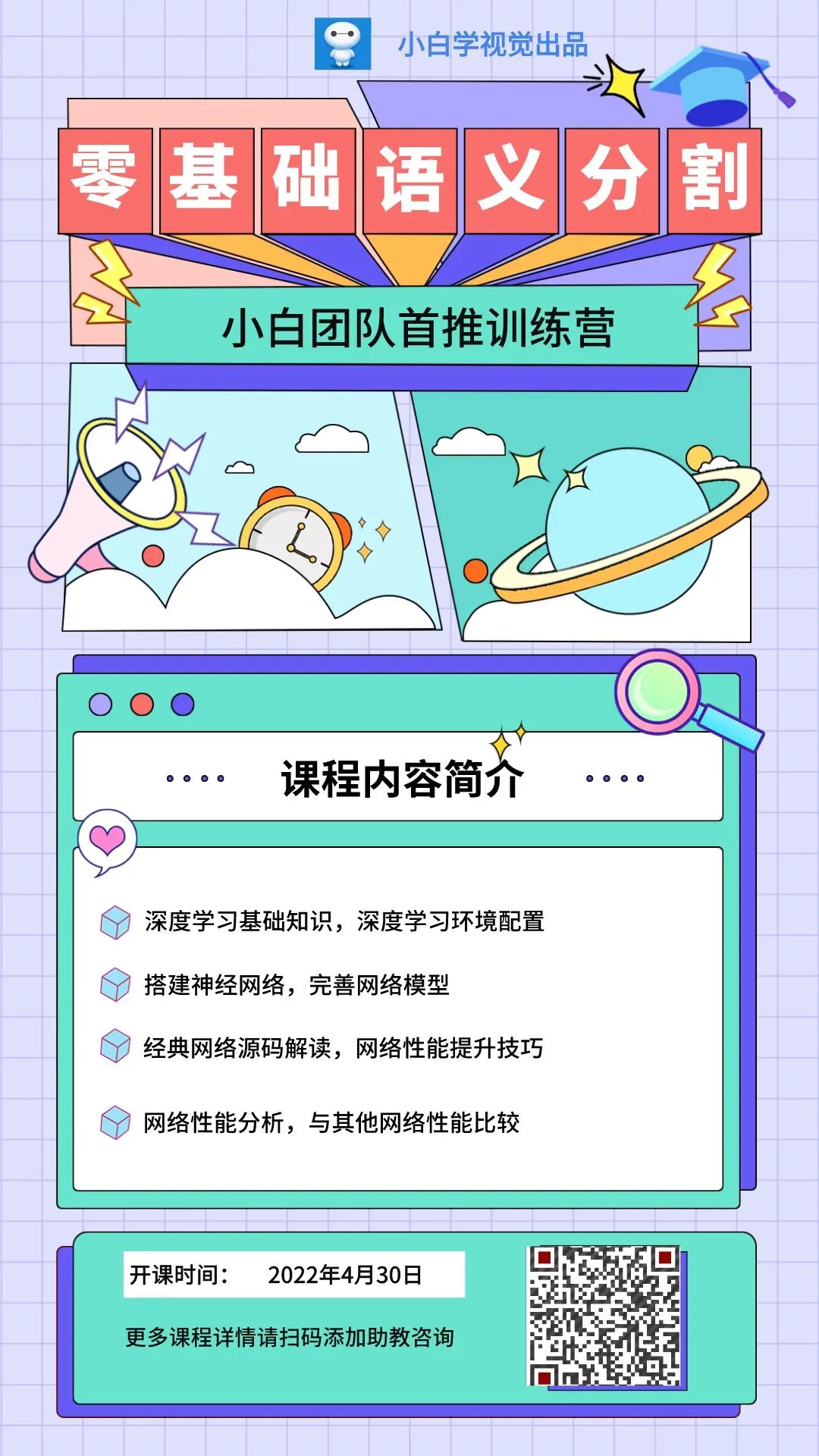

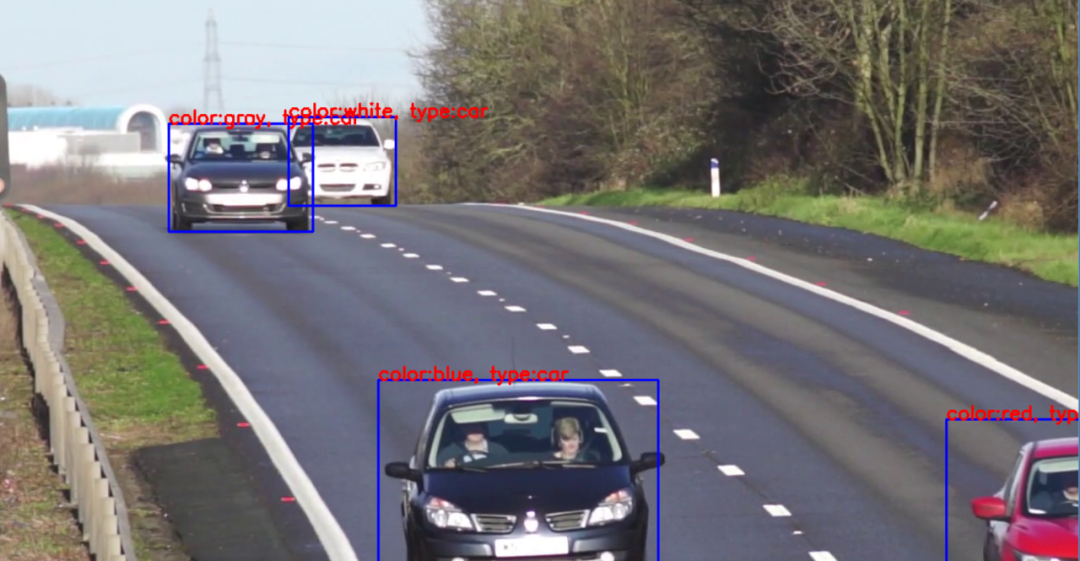

48torch.save(model, 'vehicle_attributes_model.pt')然后我使用openvino自带的车辆检测模型,实现车辆检测,在把车辆的ROI区域作为输入,使用训练好的模型,实现了车辆属性识别,最终使用一段视频,验证车辆属性识别的模型,实时运行车辆属性识别结果如下:

实现代码如下:

    import cv2 as cv
    import numpy as np
    import torch

    # capture, exec_net, input_blob, out_blob, the detector input shape (n, c, h, w),
    # cnn_model, color_labels and type_labels are created earlier and are not shown here.
    while True:
        ret, src = capture.read()
        if ret is not True:
            break
        ih, iw = src.shape[:-1]

        # Resize to the detector input size and change the layout from HWC to CHW.
        image = src if (ih, iw) == (h, w) else cv.resize(src, (w, h))
        images = np.ndarray(shape=(n, c, h, w))
        images[0] = image.transpose((2, 0, 1))
        res = exec_net.infer(inputs={input_blob: images})

        # Parse the vehicle detection output.
        data = res[out_blob][0][0]
        for proposal in data:
            if proposal[2] > 0.75:
                # np.int was removed in NumPy 1.24; the built-in int is used instead.
                xmin = int(iw * proposal[3])
                ymin = int(ih * proposal[4])
                xmax = int(iw * proposal[5])
                ymax = int(ih * proposal[6])
                cv.rectangle(src, (xmin, ymin), (xmax, ymax), (255, 0, 0), 2)

                # Clamp the box to the frame and skip empty regions.
                xmin = max(xmin, 0)
                ymin = max(ymin, 0)
                xmax = min(xmax, iw - 1)
                ymax = min(ymax, ih - 1)
                if xmax <= xmin or ymax <= ymin:
                    continue

                # Vehicle attribute recognition.
                vehicle_roi = src[ymin:ymax, xmin:xmax, :]
                img = cv.resize(vehicle_roi, (72, 72))
                img = (np.float32(img) / 255.0 - 0.5) / 0.5  # scale pixels to [-1, 1]
                img = img.transpose((2, 0, 1))
                x_input = torch.from_numpy(img).view(1, 3, 72, 72)
                with torch.no_grad():
                    color_, type_ = cnn_model(x_input.cuda())
                predict_color = int(torch.argmax(color_, dim=1)[0])
                predict_type = int(torch.argmax(type_, dim=1)[0])
                attrs_txt = "color:%s, type:%s" % (color_labels[predict_color], type_labels[predict_type])
                cv.putText(src, attrs_txt, (xmin, ymin), cv.FONT_HERSHEY_SIMPLEX, 0.75, (0, 0, 255), 2)
        cv.imshow("Vehicle Attributes Recognition Demo", src)
        res_key = cv.waitKey(1)
        if res_key == 27:  # Esc key
            break

    capture.release()
    cv.destroyAllWindows()
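The box handling in the loop above can be pulled into a small function, which makes the scaling and clamping easy to test on its own. This is a minimal sketch, not the author's code: `proposal_to_box` is a hypothetical helper, and the row layout `[image_id, label, confidence, xmin, ymin, xmax, ymax]` with coordinates normalized to [0, 1] is an assumption inferred from the indices used in the loop.

```python
def proposal_to_box(proposal, image_width, image_height, threshold=0.75):
    """Convert one detection row to a clamped pixel box, or None if rejected.

    Assumed row layout, matching the indices used in the detection loop:
    [image_id, label, confidence, xmin, ymin, xmax, ymax], with the four
    coordinates normalized to [0, 1].
    """
    confidence = proposal[2]
    if confidence <= threshold:
        return None
    # Scale to pixels, then clamp to the frame.
    xmin = max(int(image_width * proposal[3]), 0)
    ymin = max(int(image_height * proposal[4]), 0)
    xmax = min(int(image_width * proposal[5]), image_width - 1)
    ymax = min(int(image_height * proposal[6]), image_height - 1)
    if xmax <= xmin or ymax <= ymin:
        return None  # degenerate box, nothing to crop
    return xmin, ymin, xmax, ymax


row = [0, 1, 0.9, -0.05, 0.25, 0.5, 1.2]
print(proposal_to_box(row, 1280, 720))  # (0, 180, 640, 719)
```

Returning `None` for low-confidence or empty boxes lets the caller skip the crop and resize step, which would otherwise fail on an empty ROI.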
