The PyTorch Version of YOLOv4 Has Been Updated: It Supports Custom Datasets and Now Integrates Attention and MobileNet

Reposted from | 计算机视觉研究院 (Computer Vision Research Institute)

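More than five months have passed since YOLOv4 was released. The YOLO framework is written in C at its core, which is rather unfriendly to researchers who are used to Python. As a result, many YOLO reimplementations built on various deep learning frameworks have appeared online. Recently, a researcher updated a PyTorch-based YOLOv4 on GitHub.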

Nvidia GeForce RTX 2080 Ti

CUDA 10.0

cuDNN 7.0

Windows or Linux

Python 3.6

DO-Conv (https://arxiv.org/abs/2006.12030) (torch>=1.2)

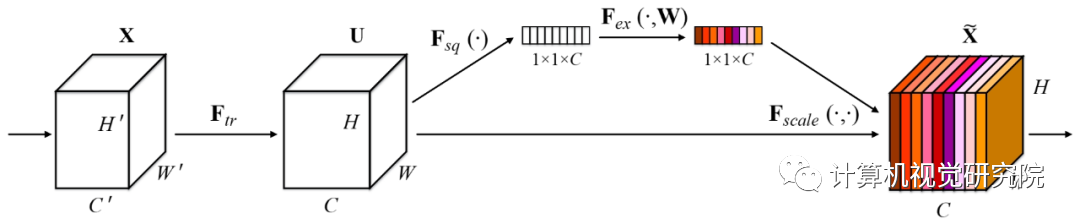

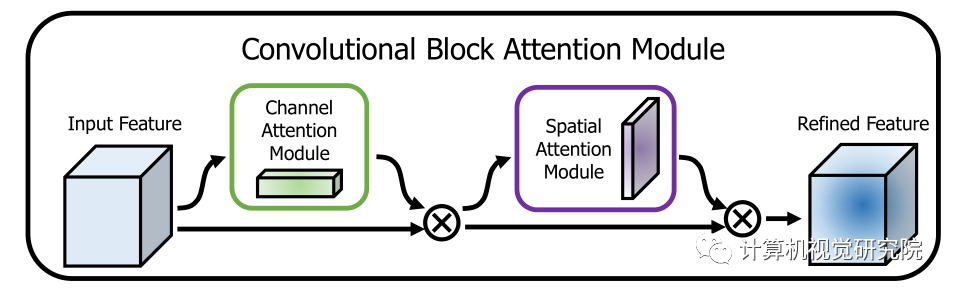

Attention

fp_16 training

Mish

Custom data

Data Augment (RandomHorizontalFlip, RandomCrop, RandomAffine, Resize)

Multi-scale Training (320 to 640)

focal loss

CIOU

Label smooth

Mixup

cosine lr
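Several entries in the list above are short formulas. As a minimal sketch, assuming the textbook definitions (the repository's own implementations may differ in detail), Mish, label smoothing, and a cosine learning-rate schedule can be written in plain Python. The function names and the default `delta` are illustrative and are not taken from the repository.

```python
import math


def mish(x: float) -> float:
    """Mish activation: x * tanh(softplus(x)), with softplus(x) = ln(1 + e^x)."""
    # Numerically stable softplus: max(x, 0) + log1p(exp(-|x|)).
    softplus = max(x, 0.0) + math.log1p(math.exp(-abs(x)))
    return x * math.tanh(softplus)


def smooth_labels(onehot, delta=0.01):
    """Label smoothing: blend a one-hot vector with the uniform distribution.

    delta is a hypothetical default chosen only for illustration.
    """
    num_classes = len(onehot)
    return [y * (1.0 - delta) + delta / num_classes for y in onehot]


def cosine_lr(step, total_steps, lr_max, lr_min=0.0):
    """Cosine annealing from lr_max at step 0 down to lr_min at total_steps."""
    progress = step / total_steps
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * progress))
```

Smoothing keeps the label vector summing to 1 while moving a little probability mass away from the true class, and the cosine schedule decays slowly at the start and end of training and fastest in the middle.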

    git clone https://github.com/argusswift/YOLOv4-PyTorch.git

    # Download the data.
    cd $HOME/data
    wget http://host.robots.ox.ac.uk/pascal/VOC/voc2012/VOCtrainval_11-May-2012.tar
    wget http://host.robots.ox.ac.uk/pascal/VOC/voc2007/VOCtrainval_06-Nov-2007.tar
    wget http://host.robots.ox.ac.uk/pascal/VOC/voc2007/VOCtest_06-Nov-2007.tar

    # Extract the data.
    tar -xvf VOCtrainval_11-May-2012.tar
    tar -xvf VOCtrainval_06-Nov-2007.tar
    tar -xvf VOCtest_06-Nov-2007.tar
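For readers who would rather script the extraction step, here is a minimal sketch using Python's standard `tarfile` module. The helper name `extract_archives` is hypothetical, and the sketch assumes the three `.tar` files named in the commands above have already been downloaded into one directory.

```python
import tarfile
from pathlib import Path

# Archive names copied from the download commands above.
VOC_ARCHIVES = [
    "VOCtrainval_11-May-2012.tar",
    "VOCtrainval_06-Nov-2007.tar",
    "VOCtest_06-Nov-2007.tar",
]


def extract_archives(data_dir, archives=VOC_ARCHIVES):
    """Extract each archive found in data_dir into data_dir.

    Returns the names of archives that were missing so the caller can
    download them again. Hypothetical helper, not part of the repository.
    """
    data_dir = Path(data_dir)
    missing = []
    for name in archives:
        archive = data_dir / name
        if not archive.is_file():
            missing.append(name)
            continue
        with tarfile.open(archive) as tar:
            tar.extractall(data_dir)
    return missing
```

Returning the missing names instead of raising lets a wrapper script retry only the downloads that failed.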

Download commands for the MSCOCO 2017 dataset:

    #step1: download the following data and annotation
    2017 Train images [118K/18GB]
    2017 Val images [5K/1GB]
    2017 Test images [41K/6GB]
    2017 Train/Val annotations [241MB]

    #step2: arrange the data to the following structure
    COCO
    ---annotations

Put the dataset into the directory and update the DATA_PATH parameter in config/yolov4_config.py.

(For the COCO dataset) use coco_to_voc.py to convert the COCO data format to the VOC data format.

Convert the data format: use utils/voc.py or utils/coco.py to convert the Pascal VOC *.xml format (or the COCO *.json format) to the *.txt format (Image_path xmin0,ymin0,xmax0,ymax0,class0 xmin1,ymin1,xmax1,ymax1,class1 ...).
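To make the *.txt layout concrete, the following sketch parses one annotation line into an image path and a list of boxes. It is not the repository's loader: the function name is hypothetical, and it assumes that the image path contains no spaces and that every box has exactly five comma-separated numeric fields.

```python
from typing import List, Tuple

Box = Tuple[int, int, int, int, int]  # xmin, ymin, xmax, ymax, class_id


def parse_annotation_line(line: str) -> Tuple[str, List[Box]]:
    """Split one annotation line into the image path and its boxes.

    Assumes a space-free path followed by zero or more
    "xmin,ymin,xmax,ymax,class" groups separated by whitespace.
    """
    parts = line.strip().split()
    if not parts:
        raise ValueError("empty annotation line")
    image_path, box_fields = parts[0], parts[1:]
    boxes: List[Box] = []
    for field in box_fields:
        values = field.split(",")
        if len(values) != 5:
            raise ValueError(f"expected 5 values per box, got {field!r}")
        # int(float(...)) also accepts coordinates written as "48.0".
        xmin, ymin, xmax, ymax, class_id = (int(float(v)) for v in values)
        boxes.append((xmin, ymin, xmax, ymax, class_id))
    return image_path, boxes


# Example with a made-up path and made-up coordinates:
path, boxes = parse_annotation_line("images/0001.jpg 10,20,110,220,3 5,6,50,60,0")
```

Keeping one image per line means a dataset can be shuffled or split with ordinary text tools before training.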

    CUDA_VISIBLE_DEVICES=0 nohup python -u train.py --weight_path weight/yolov4.weights --gpu_id 0 > nohup.log 2>&1 &

    CUDA_VISIBLE_DEVICES=0 nohup python -u train.py --weight_path weight/last.pt --gpu_id 0 > nohup.log 2>&1 &

    # for VOC dataset:
    CUDA_VISIBLE_DEVICES=0 python3 eval_voc.py --weight_path weight/best.pt --gpu_id 0 --visiual $DATA_TEST --eval --mode det

    # for COCO dataset:
    CUDA_VISIBLE_DEVICES=0 python3 eval_coco.py --weight_path weight/best.pt --gpu_id 0 --visiual $DATA_TEST --eval --mode det
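The training command above can also be launched from a Python script, which is convenient when queuing several runs. This is only a sketch: `build_train_command` and `launch` are hypothetical helpers, and the only flags used are the `--weight_path` and `--gpu_id` flags that appear in the commands above.

```python
import os
import subprocess
import sys


def build_train_command(weight_path, gpu_id=0, visible_devices="0",
                        python_exe=sys.executable):
    """Return (argv, env) mirroring the shell training command.

    visible_devices becomes CUDA_VISIBLE_DEVICES; gpu_id is passed through
    to train.py unchanged, as in the shell version.
    """
    argv = [python_exe, "-u", "train.py",
            "--weight_path", weight_path,
            "--gpu_id", str(gpu_id)]
    env = dict(os.environ, CUDA_VISIBLE_DEVICES=str(visible_devices))
    return argv, env


def launch(argv, env, log_path="nohup.log"):
    """Start training in the background, sending stdout and stderr to log_path."""
    log = open(log_path, "w")
    return subprocess.Popen(argv, env=env, stdout=log, stderr=subprocess.STDOUT)


argv, env = build_train_command("weight/yolov4.weights")
```

Building the argument list separately from launching it makes the command easy to print and check before any GPU time is spent.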

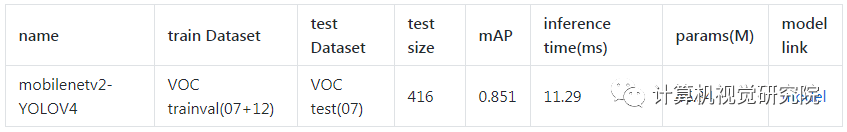

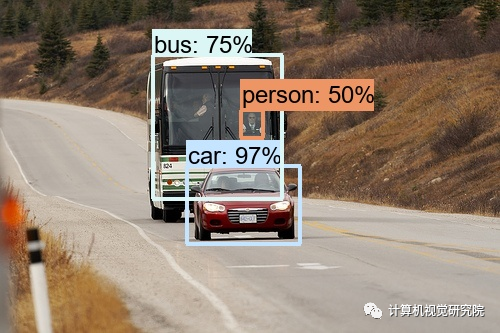

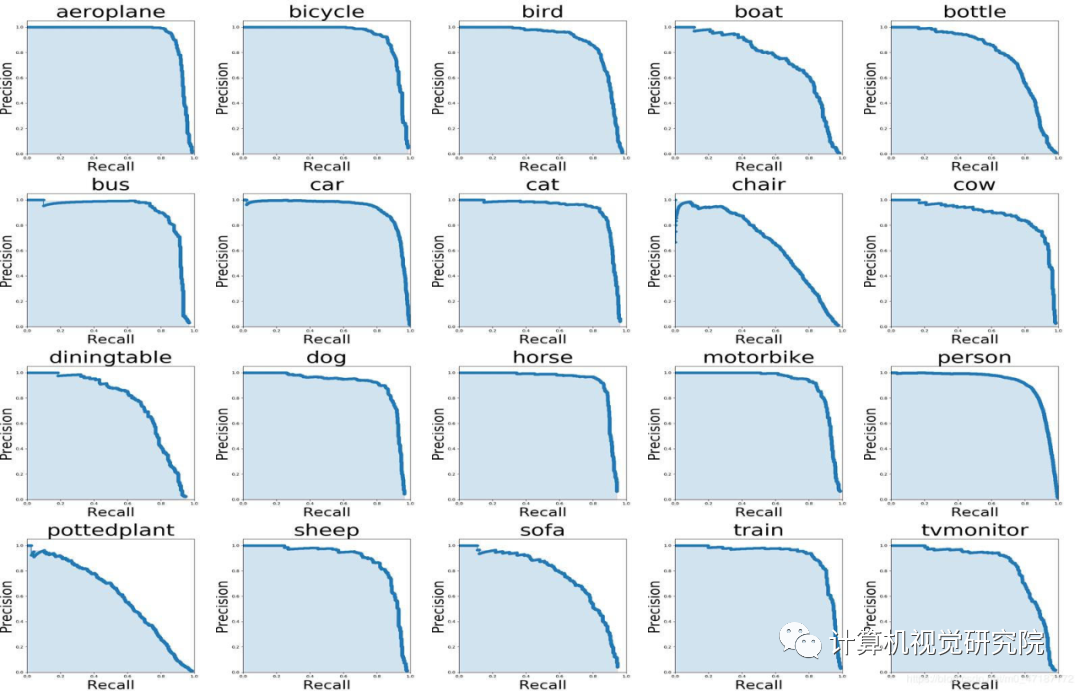

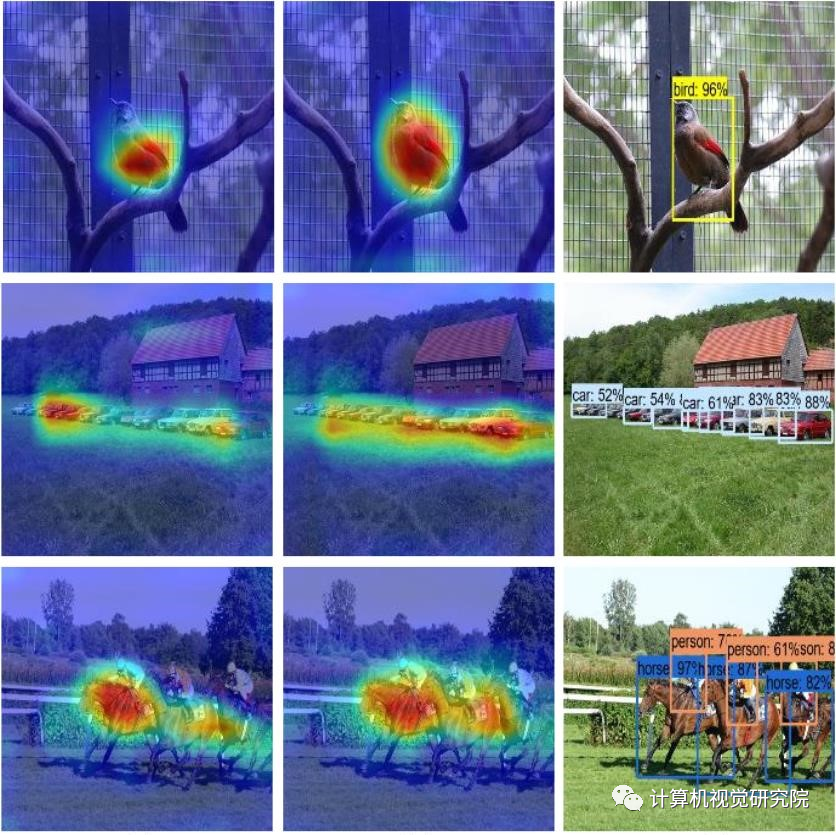

The results can be viewed in output/, as shown below:

    # for VOC dataset:
    CUDA_VISIBLE_DEVICES=0 python3 eval_voc.py --weight_path weight/best.pt --gpu_id 0 --visiual $DATA_TEST --eval -

    CUDA_VISIBLE_DEVICES=0 python3 eval_coco.py --weight_path weight/best.pt --gpu_id 0 --visiual $DATA_TEST --eval --mode val

    type=bbox
    Running per image evaluation... DONE (t=0.34s).
    Accumulating evaluation results... DONE (t=0.08s).
    Average Precision (AP) @[ IoU=0.50:0.95 | area= all | maxDets=100 ] = 0.438
    Average Precision (AP) @[ IoU=0.50 | area= all | maxDets=100 ] = 0.607
    Average Precision (AP) @[ IoU=0.75 | area= all | maxDets=100 ] = 0.469
    Average Precision (AP) @[ IoU=0.50:0.95 | area= small | maxDets=100 ] = 0.253
    Average Precision (AP) @[ IoU=0.50:0.95 | area=medium | maxDets=100 ] = 0.486
    Average Precision (AP) @[ IoU=0.50:0.95 | area= large | maxDets=100 ] = 0.567
    Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets= 1 ] = 0.342
    Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets= 10 ] = 0.571
    Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets=100 ] = 0.632
    Average Recall (AR) @[ IoU=0.50:0.95 | area= small | maxDets=100 ] = 0.458
    Average Recall (AR) @[ IoU=0.50:0.95 | area=medium | maxDets=100 ] = 0.691
    Average Recall (AR) @[ IoU=0.50:0.95 | area= large | maxDets=100 ] = 0.790
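When comparing several checkpoints, it helps to turn this printed summary into structured data. The sketch below pulls each Average Precision / Average Recall line out of the captured text with a regular expression. The function name is hypothetical, and the pattern is written against the line layout shown above, so it is an assumption that other tool versions print the same layout.

```python
import re

# Matches lines such as:
# Average Precision (AP) @[ IoU=0.50:0.95 | area= all | maxDets=100 ] = 0.438
_SUMMARY = re.compile(
    r"Average (?:Precision|Recall)\s+\((AP|AR)\)\s+@\[\s*IoU=([\d.:]+)\s*\|"
    r"\s*area=\s*(\w+)\s*\|\s*maxDets=\s*(\d+)\s*\]\s*=\s*(-?\d+\.\d+)"
)


def parse_coco_summary(text):
    """Return one dict per AP/AR line found in the evaluation output."""
    rows = []
    for m in _SUMMARY.finditer(text):
        rows.append({
            "metric": m.group(1),         # "AP" or "AR"
            "iou": m.group(2),            # e.g. "0.50:0.95" or "0.50"
            "area": m.group(3),           # all / small / medium / large
            "max_dets": int(m.group(4)),
            "value": float(m.group(5)),
        })
    return rows


sample = (
    "Average Precision (AP) @[ IoU=0.50:0.95 | area= all | maxDets=100 ] = 0.438\n"
    "Average Recall (AR) @[ IoU=0.50:0.95 | area= all | maxDets= 1 ] = 0.342\n"
)
rows = parse_coco_summary(sample)
```

Because the pattern is applied with `finditer`, it also works on output that has been captured without line breaks.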

    # for VOC dataset:
    CUDA_VISIBLE_DEVICES=0 python3 eval_voc.py --weight_path weight/best.pt --gpu_id 0 --visiual $DATA_TEST --eval

    # for COCO dataset:
    CUDA_VISIBLE_DEVICES=0 python3 eval_coco.py --weight_path weight/best.pt --gpu_id 0 --visiual $DATA_TEST --eval
