RTX 3090 Deep Learning Environment Setup Guide: PyTorch, TensorFlow, Keras

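Introduction

This article walks through how the author set up a deep learning environment on an RTX 3090 for PyTorch, TensorFlow, and Keras, including the commands and code used.

The author is a graduate student at Sun Yat-sen University, part medical student and part computer science student, and a machine learning enthusiast.

I recently bought a 3090 and found that the environment setup guides online are messy and slow to follow. So I worked out the fastest setup I could for the 3090. Additions are welcome!

Base environment (the whole process takes about 5 minutes or even less)

    Python 3.7 or 3.8
    CUDA 11.0
    cuDNN 8.0.4
    TensorFlow 2.5 (tf-nightly) or TensorFlow 1.15.4
    PyTorch 1.7
    Keras 2.3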

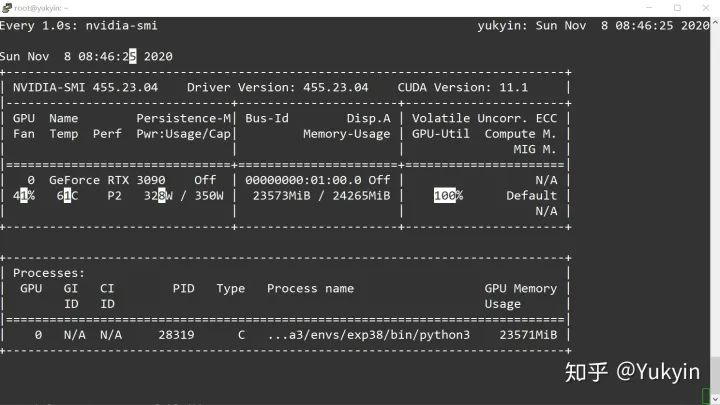

(1) Download the GPU driver from the official NVIDIA website and install it:

bash NVIDIA-Linux-x86_64-455.23.04.run

(2) Install Anaconda and switch to a mirror

    bash Anaconda3-5.2.0-Linux-x86_64.sh
    vim ~/.bashrc
    # add the following line at the end of ~/.bashrc (XXX is your own username)
    export PATH=/home/XXX/anaconda3/bin:$PATH
    source ~/.bashrc
    conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main/
    conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/free/
    conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud/conda-forge
    conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud/msys2/
    conda config --add channels https://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud/pytorch/
    conda config --set show_channel_urls yes

Afterwards, open ~/.condarc with vim and delete the defaults entry.
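Removing the defaults entry from ~/.condarc can also be scripted instead of done by hand in vim. The following is only a minimal sketch: the helper name drop_defaults_channel is hypothetical, and it assumes the file uses the usual layout in which each channel sits on its own line as "- defaults". It works on the file's text, so nothing on disk changes until you write the result back yourself.

```python
def drop_defaults_channel(condarc_text: str) -> str:
    """Return the .condarc text without any '- defaults' list entry.

    Assumption: channels are listed one per line in the form '- <channel>'.
    """
    kept = [
        line
        for line in condarc_text.splitlines()
        if line.strip() != "- defaults"
    ]
    return "\n".join(kept) + "\n"


if __name__ == "__main__":
    # Illustrative input only; a real ~/.condarc will list more channels.
    example = (
        "channels:\n"
        "  - https://mirrors.tuna.tsinghua.edu.cn/anaconda/pkgs/main/\n"
        "  - defaults\n"
        "show_channel_urls: true\n"
    )
    print(drop_defaults_channel(example))
```

To apply it for real, read ~/.condarc with pathlib.Path.read_text(), pass the text through the function, and write it back with write_text(), ideally after making a backup copy.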

(3) Create a virtual environment, usually with Python 3.7 or 3.8 (all the following steps are done inside the virtual environment)

    conda create -n exp38 python==3.8
    conda activate exp38
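Since every later step is meant to run inside the virtual environment, it can help to confirm which environment the current Python process is in. This is a small sketch, not part of the original steps: the helper name in_expected_env is hypothetical, and it relies on conda activate setting the CONDA_DEFAULT_ENV environment variable.

```python
import os
import sys


def in_expected_env(expected_name="exp38", environ=None):
    """Return True if the active conda environment has the expected name."""
    environ = os.environ if environ is None else environ
    return environ.get("CONDA_DEFAULT_ENV") == expected_name


if __name__ == "__main__":
    print("Python version:", "%d.%d" % sys.version_info[:2])
    print("Inside exp38:", in_expected_env("exp38"))
```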

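(4) Install CUDA 11.0 and PyTorch 1.7 (there is no need to run conda install cudatoolkit==11.0 separately)

    conda install pytorch torchvision cudatoolkit=11

(5) Install cuDNN 8 (because conda does not yet provide cuDNN for cudatoolkit=11)

Download cuDNN from https://developer.nvidia.com/rdp/cudnn-download, extract it, go into the cuda/lib64 directory, and copy all the files in it into the lib directory of the corresponding virtual environment (exp38).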
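The copy step can be sketched in Python as follows. This is an illustration under stated assumptions rather than the author's exact procedure: the helper name copy_cudnn_libs is hypothetical, and the destination path in the usage note assumes conda's default layout (environments under anaconda3/envs/). Symlinks are copied as symlinks, so versioned library names that are links in the source directory stay links in the destination.

```python
import shutil
from pathlib import Path


def copy_cudnn_libs(cudnn_lib_dir, env_lib_dir):
    """Copy every file in cudnn_lib_dir into env_lib_dir.

    Symlinks are recreated as symlinks instead of being followed.
    Returns the sorted list of copied file names.
    """
    src = Path(cudnn_lib_dir)
    dst = Path(env_lib_dir)
    dst.mkdir(parents=True, exist_ok=True)
    copied = []
    for item in sorted(src.iterdir()):
        if item.is_dir() and not item.is_symlink():
            continue  # skip real subdirectories, only files are expected here
        target = dst / item.name
        if target.exists() or target.is_symlink():
            target.unlink()  # replace an older copy of the same file
        shutil.copy2(item, target, follow_symlinks=False)
        copied.append(item.name)
    return copied
```

A call might look like copy_cudnn_libs("cuda/lib64", "/home/XXX/anaconda3/envs/exp38/lib"), where XXX is your username and the environment path is an assumption about where conda created exp38.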

(6) Install TF 2.5 (do not install tensorflow-gpu==2.4.0rc0; it fails with the error 'NoneType' object has no attribute 'TFE_MonitoringDeleteBuckets')

    pip install tf-nightly-gpu -i http://pypi.douban.com/simple --trusted-host pypi.douban.com
    pip install tf-nightly -i http://pypi.douban.com/simple --trusted-host pypi.douban.com

(7) Install TF 1.15.4

This step follows another author's TF 1.15.4 installation guide:

https://blog.csdn.net/wu496963386/article/details/109583045?utm_medium=distribute.wap_relevant.none-task-blog-BlogCommendFromMachineLearnPai2-2.wap_blog_relevant_pic

    pip install \
        google_pasta-0.2.0-py3-none-any.whl \
        nvidia_cublas-11.2.1.74-cp36-cp36m-linux_x86_64.whl \
        nvidia_cuda_cupti-11.1.69-cp36-cp36m-linux_x86_64.whl \
        nvidia_cuda_nvcc-11.1.74-cp36-cp36m-linux_x86_64.whl \
        nvidia_cuda_nvrtc-11.1.74-cp36-cp36m-linux_x86_64.whl \
        nvidia_cuda_runtime-11.1.74-cp36-cp36m-linux_x86_64.whl \
        nvidia_cudnn-8.0.4.30-cp36-cp36m-linux_x86_64.whl \
        nvidia_cufft-10.3.0.74-cp36-cp36m-linux_x86_64.whl \
        nvidia_curand-10.2.2.74-cp36-cp36m-linux_x86_64.whl \
        nvidia_cusolver-11.0.0.74-cp36-cp36m-linux_x86_64.whl \
        nvidia_cusparse-11.2.0.275-cp36-cp36m-linux_x86_64.whl \
        nvidia_dali_cuda110-0.26.0-1608709-py3-none-manylinux2014_x86_64.whl \
        nvidia_dali_nvtf_plugin-0.26.0+nv20.10-cp36-cp36m-linux_x86_64.whl \
        nvidia_nccl-2.7.8-cp36-cp36m-linux_x86_64.whl \
        nvidia_tensorrt-7.2.1.4-cp36-none-linux_x86_64.whl \
        tensorflow_estimator-1.15.1-py2.py3-none-any.whl \
        nvidia_tensorboard-1.15.0+nv20.10-py3-none-any.whl \
        nvidia_tensorflow-1.15.4+nv20.10-cp36-cp36m-linux_x86_64.whl \
        -i http://pypi.douban.com/simple --trusted-host pypi.douban.com
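Most of the wheel files in this command carry a cp36 tag in their filename, which in the wheel naming convention means they were built for CPython 3.6. Before running the command it may be worth checking which filenames fit the interpreter of the active environment. The sketch below is a simplified check, not a replacement for pip's own resolution: the helper name python_tag_matches is hypothetical, and it looks only at the Python tag, ignoring the ABI and platform tags.

```python
import sys


def python_tag_matches(wheel_filename, version_info=sys.version_info):
    """Check whether a wheel's Python tag fits the given interpreter version.

    Simplified: only the Python tag is inspected, not the ABI or platform tag.
    """
    stem = wheel_filename
    if stem.endswith(".whl"):
        stem = stem[: -len(".whl")]
    # Layout: name-version[-build]-pythontag-abitag-platformtag
    parts = stem.split("-")
    if len(parts) < 5:
        return False  # not a well-formed wheel filename
    python_tag = parts[-3]
    major, minor = version_info[0], version_info[1]
    accepted = {f"py{major}", f"py{major}{minor}", f"cp{major}{minor}"}
    # A tag such as 'py2.py3' lists several alternatives separated by dots.
    return any(tag in accepted for tag in python_tag.split("."))


if __name__ == "__main__":
    name = "nvidia_tensorflow-1.15.4+nv20.10-cp36-cp36m-linux_x86_64.whl"
    print(name, "fits this interpreter:", python_tag_matches(name))
```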

(8) Install Keras 2.3

pip install keras==2.3 -i http://pypi.douban.com/simple --trusted-host pypi.douban.com

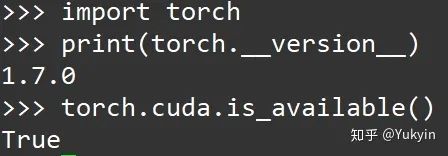

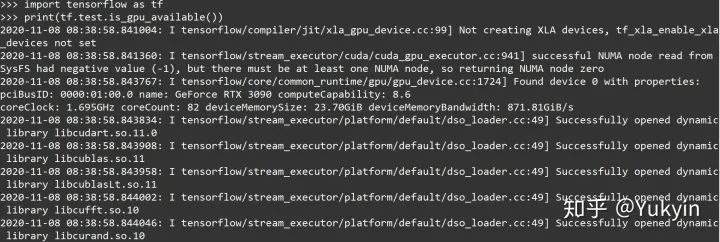

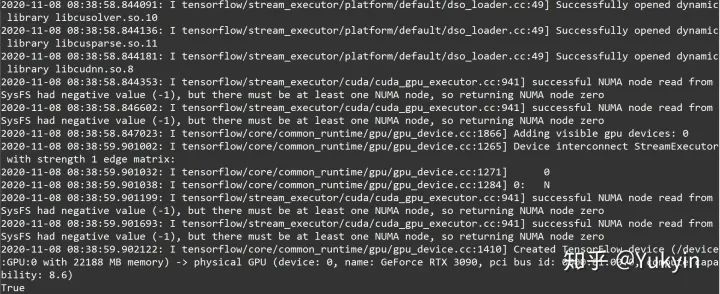

(9) Test (with CUDA 10.2 the test can also detect and use the GPU, but it seems data cannot be written to the GPU)

PyTorch
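A PyTorch check along these lines can confirm both that the GPU is visible and that data can actually be placed on it. This is a hedged sketch rather than the author's original test: the helper name check_pytorch_gpu and the report keys are illustrative, and the function returns early if PyTorch is not installed.

```python
import importlib.util


def check_pytorch_gpu():
    """Report whether PyTorch sees a GPU and can place a tensor on it."""
    report = {"installed": False, "cuda_available": False, "tensor_on_gpu": False}
    if importlib.util.find_spec("torch") is None:
        return report  # PyTorch is not installed in this environment
    import torch

    report["installed"] = True
    report["version"] = torch.__version__
    report["cuda_available"] = bool(torch.cuda.is_available())
    if report["cuda_available"]:
        report["device_name"] = torch.cuda.get_device_name(0)
        # Write data to the GPU, which is the part that matters for this step.
        x = torch.ones(2, 2, device="cuda")
        report["tensor_on_gpu"] = bool(x.is_cuda)
    return report


if __name__ == "__main__":
    print(check_pytorch_gpu())
```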

TensorFlow 2.5 or 1.15.4

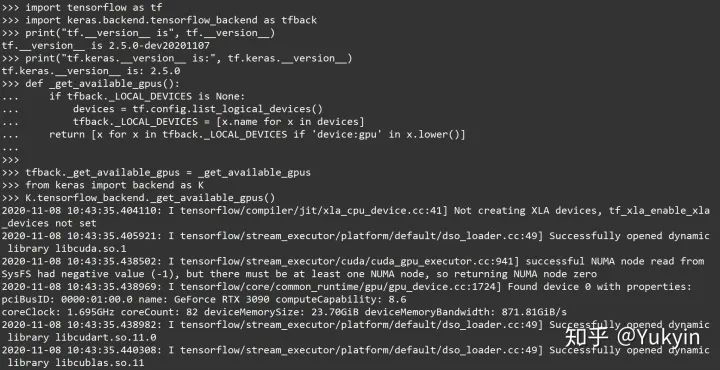

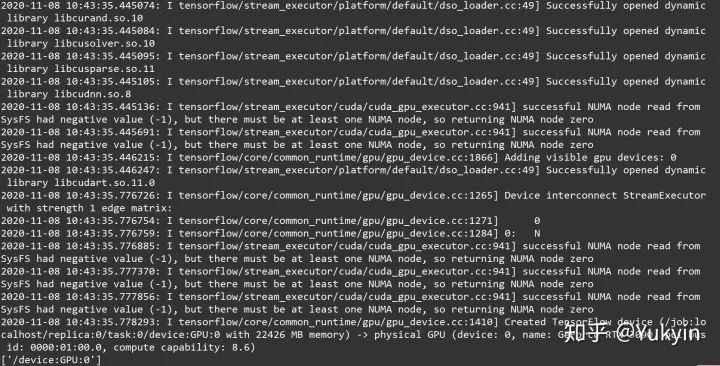
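For TensorFlow, a comparable check might look like the sketch below. It is an illustration, not the author's original test: the helper name check_tensorflow_gpu is hypothetical. It uses tf.config.list_physical_devices where that function exists (TF 2.1 and later) and falls back to tf.test.is_gpu_available for TF 1.15.

```python
import importlib.util


def check_tensorflow_gpu():
    """Report whether TensorFlow is installed and can see a GPU."""
    report = {"installed": False, "gpu_available": False}
    if importlib.util.find_spec("tensorflow") is None:
        return report  # TensorFlow is not installed in this environment
    import tensorflow as tf

    report["installed"] = True
    report["version"] = tf.__version__
    if hasattr(tf.config, "list_physical_devices"):
        # TF 2.1+ API
        report["gpu_available"] = len(tf.config.list_physical_devices("GPU")) > 0
    else:
        # TF 1.15 fallback
        report["gpu_available"] = bool(tf.test.is_gpu_available())
    return report


if __name__ == "__main__":
    print(check_tensorflow_gpu())
```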

Keras (the test requires patching part of the source, namely _get_available_gpus())

    import tensorflow as tf
    import keras.backend.tensorflow_backend as tfback

    print("tf.__version__ is", tf.__version__)
    print("tf.keras.__version__ is:", tf.keras.__version__)

    # Replacement for Keras's GPU lookup that uses the TF 2.x device-listing API.
    def _get_available_gpus():
        if tfback._LOCAL_DEVICES is None:
            devices = tf.config.list_logical_devices()
            tfback._LOCAL_DEVICES = [x.name for x in devices]
        return [x for x in tfback._LOCAL_DEVICES if 'device:gpu' in x.lower()]

    # Patch the Keras backend, then query the GPUs through it.
    tfback._get_available_gpus = _get_available_gpus

    from keras import backend as K
    K.tensorflow_backend._get_available_gpus()

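Postscript: strictly speaking the 3090 calls for CUDA 11.1, but PyTorch and TF currently only support 11.0. And honestly, there is no need to set up CUDA and cuDNN separately; handling them inside the virtual environment is enough.

Update 2021-01-02: I tested TF 1.15.4. It works in practice, and data can be written to the GPU. The article has been updated accordingly.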
