Kafka Source Code Study: KafkaApis-LEADER_AND_ISR

Author: 小兵

Source: SegmentFault (思否) community

Whenever the controller undergoes a state change, it sends a LeaderAndIsrRequest by calling sendRequestsToBrokers. This article walks through how the Kafka broker processes that request.

LEADER_AND_ISR

Overall logic flow

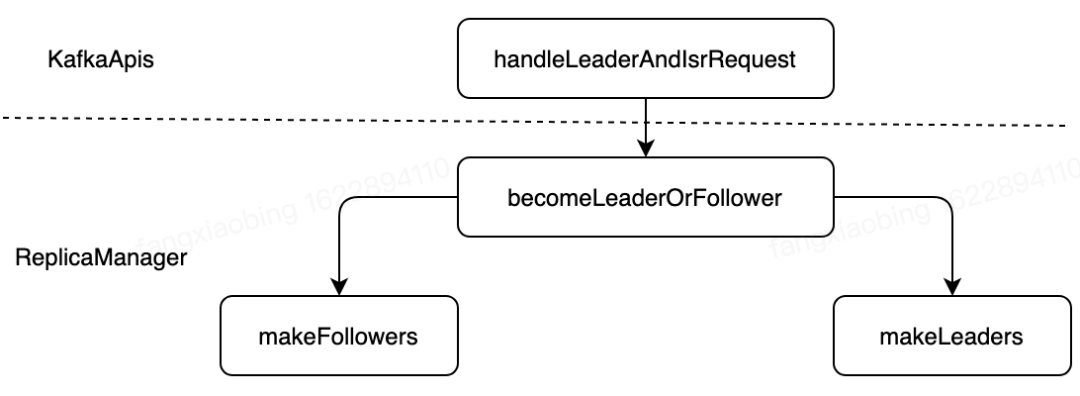

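When the server receives a LEADER_AND_ISR request, the dispatch branch case ApiKeys.LEADER_AND_ISR => handleLeaderAndIsrRequest(request) calls handleLeaderAndIsrRequest to process it. The processing flow of this method is shown in the figure above.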

Source code

handleLeaderAndIsrRequest

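The logic of handleLeaderAndIsrRequest consists of the following parts:

Build the callback onLeadershipChange, which calls back into the coordinator to handle the partitions that newly became leader or follower.

Check the authorization of the request. If the check passes, call replicaManager.becomeLeaderOrFollower(correlationId, leaderAndIsrRequest, metadataCache, onLeadershipChange) for further processing (this is the main flow of the function); otherwise, directly return the error code Errors.CLUSTER_AUTHORIZATION_FAILED.code.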

    def handleLeaderAndIsrRequest(request: RequestChannel.Request) {
      // ensureTopicExists is only for client facing requests
      // We can't have the ensureTopicExists check here since the controller sends it as an advisory to all brokers so they
      // stop serving data to clients for the topic being deleted
      val correlationId = request.header.correlationId
      val leaderAndIsrRequest = request.body.asInstanceOf[LeaderAndIsrRequest]

      try {
        def onLeadershipChange(updatedLeaders: Iterable[Partition], updatedFollowers: Iterable[Partition]) {
          // for each new leader or follower, call coordinator to handle consumer group migration.
          // this callback is invoked under the replica state change lock to ensure proper order of
          // leadership changes
          updatedLeaders.foreach { partition =>
            if (partition.topic == Topic.GroupMetadataTopicName)
              coordinator.handleGroupImmigration(partition.partitionId)
          }
          updatedFollowers.foreach { partition =>
            if (partition.topic == Topic.GroupMetadataTopicName)
              coordinator.handleGroupEmigration(partition.partitionId)
          }
        }

        val leaderAndIsrResponse =
          if (authorize(request.session, ClusterAction, Resource.ClusterResource)) {
            val result = replicaManager.becomeLeaderOrFollower(correlationId, leaderAndIsrRequest, metadataCache, onLeadershipChange)
            new LeaderAndIsrResponse(result.errorCode, result.responseMap.mapValues(new JShort(_)).asJava)
          } else {
            val result = leaderAndIsrRequest.partitionStates.asScala.keys.map((_, new JShort(Errors.CLUSTER_AUTHORIZATION_FAILED.code))).toMap
            new LeaderAndIsrResponse(Errors.CLUSTER_AUTHORIZATION_FAILED.code, result.asJava)
          }

        requestChannel.sendResponse(new Response(request, leaderAndIsrResponse))
      } catch {
        case e: KafkaStorageException =>
          fatal("Disk error during leadership change.", e)
          Runtime.getRuntime.halt(1)
      }
    }
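The two branches of the handler and the filtering done by the callback can be modelled without any Kafka classes. The following is only a minimal sketch: HandlerSketch, TopicPartition, Response, handle and groupPartitionsToMigrate are hypothetical names invented for illustration, and the delegate parameter stands in for replicaManager.becomeLeaderOrFollower. The numeric values 0 and 31 are the codes of Errors.NONE and Errors.CLUSTER_AUTHORIZATION_FAILED.

```scala
object HandlerSketch {
  // Hypothetical stand-in for Kafka's TopicPartition.
  final case class TopicPartition(topic: String, partition: Int)

  // Numeric values of Errors.NONE and Errors.CLUSTER_AUTHORIZATION_FAILED.
  val NoError: Short = 0
  val ClusterAuthorizationFailed: Short = 31

  // Name of the internal topic that stores consumer group metadata.
  val GroupMetadataTopicName = "__consumer_offsets"

  // Simplified response: a top-level error code plus one code per partition.
  final case class Response(errorCode: Short, partitionErrors: Map[TopicPartition, Short])

  // Mirrors the authorization branch: delegate when authorized, otherwise
  // mark every requested partition with the authorization error.
  def handle(authorized: Boolean,
             requested: Seq[TopicPartition],
             delegate: Seq[TopicPartition] => Response): Response =
    if (authorized) delegate(requested)
    else Response(ClusterAuthorizationFailed,
      requested.map(tp => tp -> ClusterAuthorizationFailed).toMap)

  // Mirrors onLeadershipChange: only partitions of the group metadata topic
  // matter to the coordinator. Returns (ids to load, ids to unload).
  def groupPartitionsToMigrate(newLeaders: Seq[TopicPartition],
                               newFollowers: Seq[TopicPartition]): (Seq[Int], Seq[Int]) = {
    def groupPartitions(tps: Seq[TopicPartition]): Seq[Int] =
      tps.filter(_.topic == GroupMetadataTopicName).map(_.partition)
    (groupPartitions(newLeaders), groupPartitions(newFollowers))
  }
}
```

In this model an unauthorized request never reaches the delegate, which matches the handler above: the replica state is left untouched and every partition in the request is answered with the same error code.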

becomeLeaderOrFollower

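The main work of ReplicaManager consists of the following parts; the corresponding code locations are marked by the author's annotations in the code:

Validate the epochs: a request whose controller epoch is older than the local one is rejected, and a partition is processed only if the leader epoch in the request is newer than the local leader epoch and this broker is in the partition's assigned replica list.

Call makeLeaders and makeFollowers to build the sets of partitions that newly became leader and follower (this is the main logic, described in detail in the following sections).

If this is the first request received, start the thread that periodically checkpoints the HW.

Shut down idle fetcher threads.

Invoke the callback so that the coordinator handles the new leader partitions and follower partitions.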

    def becomeLeaderOrFollower(correlationId: Int,
                               leaderAndISRRequest: LeaderAndIsrRequest,
                               metadataCache: MetadataCache,
                               onLeadershipChange: (Iterable[Partition], Iterable[Partition]) => Unit): BecomeLeaderOrFollowerResult = {
      leaderAndISRRequest.partitionStates.asScala.foreach { case (topicPartition, stateInfo) =>
        stateChangeLogger.trace("Broker %d received LeaderAndIsr request %s correlation id %d from controller %d epoch %d for partition [%s,%d]"
          .format(localBrokerId, stateInfo, correlationId,
            leaderAndISRRequest.controllerId, leaderAndISRRequest.controllerEpoch, topicPartition.topic, topicPartition.partition))
      }
      // Main logic: build the result to return
      replicaStateChangeLock synchronized {
        val responseMap = new mutable.HashMap[TopicPartition, Short]
        // If the controller epoch is stale, return Errors.STALE_CONTROLLER_EPOCH.code directly
        if (leaderAndISRRequest.controllerEpoch < controllerEpoch) {
          stateChangeLogger.warn(("Broker %d ignoring LeaderAndIsr request from controller %d with correlation id %d since " +
            "its controller epoch %d is old. Latest known controller epoch is %d").format(localBrokerId, leaderAndISRRequest.controllerId,
            correlationId, leaderAndISRRequest.controllerEpoch, controllerEpoch))
          BecomeLeaderOrFollowerResult(responseMap, Errors.STALE_CONTROLLER_EPOCH.code)
        } else {
          val controllerId = leaderAndISRRequest.controllerId
          controllerEpoch = leaderAndISRRequest.controllerEpoch

          // First check partition's leader epoch
          // Validate every partition in the request; there are three cases:
          // 1. The leader epoch in the request is newer, but this broker is not in the assigned replica list:
          //    record Errors.UNKNOWN_TOPIC_OR_PARTITION.code
          // 2. The leader epoch in the request is newer and this broker is an assigned replica: the state is accepted
          // 3. The leader epoch in the request is not newer than the local one: record Errors.STALE_CONTROLLER_EPOCH.code
          val partitionState = new mutable.HashMap[Partition, PartitionState]()
          leaderAndISRRequest.partitionStates.asScala.foreach { case (topicPartition, stateInfo) =>
            val partition = getOrCreatePartition(topicPartition)
            val partitionLeaderEpoch = partition.getLeaderEpoch
            // If the leader epoch is valid record the epoch of the controller that made the leadership decision.
            // This is useful while updating the isr to maintain the decision maker controller's epoch in the zookeeper path
            if (partitionLeaderEpoch < stateInfo.leaderEpoch) {
              if(stateInfo.replicas.contains(localBrokerId))
                partitionState.put(partition, stateInfo)
              else {
                stateChangeLogger.warn(("Broker %d ignoring LeaderAndIsr request from controller %d with correlation id %d " +
                  "epoch %d for partition [%s,%d] as itself is not in assigned replica list %s")
                  .format(localBrokerId, controllerId, correlationId, leaderAndISRRequest.controllerEpoch,
                    topicPartition.topic, topicPartition.partition, stateInfo.replicas.asScala.mkString(",")))
                responseMap.put(topicPartition, Errors.UNKNOWN_TOPIC_OR_PARTITION.code)
              }
            } else {
              // Otherwise record the error code in response
              stateChangeLogger.warn(("Broker %d ignoring LeaderAndIsr request from controller %d with correlation id %d " +
                "epoch %d for partition [%s,%d] since its associated leader epoch %d is not higher than the current leader epoch %d")
                .format(localBrokerId, controllerId, correlationId, leaderAndISRRequest.controllerEpoch,
                  topicPartition.topic, topicPartition.partition, stateInfo.leaderEpoch, partitionLeaderEpoch))
              responseMap.put(topicPartition, Errors.STALE_CONTROLLER_EPOCH.code)
            }
          }

          // Handle leader and follower replicas: build partitionsBecomeLeader and partitionsBecomeFollower
          // for the callback (processed by the coordinator)
          val partitionsTobeLeader = partitionState.filter { case (_, stateInfo) =>
            stateInfo.leader == localBrokerId
          }
          val partitionsToBeFollower = partitionState -- partitionsTobeLeader.keys

          val partitionsBecomeLeader = if (partitionsTobeLeader.nonEmpty)
            // Main call
            makeLeaders(controllerId, controllerEpoch, partitionsTobeLeader, correlationId, responseMap)
          else
            Set.empty[Partition]
          val partitionsBecomeFollower = if (partitionsToBeFollower.nonEmpty)
            // Main call
            makeFollowers(controllerId, controllerEpoch, partitionsToBeFollower, correlationId, responseMap, metadataCache)
          else
            Set.empty[Partition]

          // we initialize highwatermark thread after the first leaderisrrequest. This ensures that all the partitions
          // have been completely populated before starting the checkpointing there by avoiding weird race conditions
          // After the first request is received, start the scheduler that periodically writes the HW checkpoint
          if (!hwThreadInitialized) {
            startHighWaterMarksCheckPointThread()
            hwThreadInitialized = true
          }
          // The metadata was updated above, so shut down fetcher threads that are no longer needed
          replicaFetcherManager.shutdownIdleFetcherThreads()

          // Invoke the callback
          onLeadershipChange(partitionsBecomeLeader, partitionsBecomeFollower)
          BecomeLeaderOrFollowerResult(responseMap, Errors.NONE.code)
        }
      }
    }
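The per-partition validation in becomeLeaderOrFollower reduces to a small decision function. The sketch below is an illustrative model, not Kafka code: EpochCheckSketch, PartitionStateInfo, Decision and decide are hypothetical names, and the function assumes the request-level controller epoch check has already passed. The values 3 and 11 are the codes of Errors.UNKNOWN_TOPIC_OR_PARTITION and Errors.STALE_CONTROLLER_EPOCH.

```scala
object EpochCheckSketch {
  // Hypothetical, simplified view of one partition entry in the request.
  final case class PartitionStateInfo(leader: Int, leaderEpoch: Int, replicas: Set[Int])

  sealed trait Decision
  case object BecomeLeader extends Decision
  case object BecomeFollower extends Decision
  final case class Rejected(errorCode: Short) extends Decision

  // Numeric values of the Kafka error codes used by this check.
  val UnknownTopicOrPartition: Short = 3
  val StaleControllerEpoch: Short = 11

  // Per-partition check, applied only after the controller epoch of the
  // request was found to be at least the broker's known controller epoch.
  def decide(localBrokerId: Int, localLeaderEpoch: Int, state: PartitionStateInfo): Decision =
    if (localLeaderEpoch >= state.leaderEpoch) Rejected(StaleControllerEpoch) // leader epoch not newer
    else if (!state.replicas.contains(localBrokerId)) Rejected(UnknownTopicOrPartition) // not an assigned replica
    else if (state.leader == localBrokerId) BecomeLeader
    else BecomeFollower
}
```

Note that the comparison is on the leader epoch of each partition, and that an equal leader epoch is rejected: a repeated request carrying the same leader epoch does not trigger another state transition.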

makeLeaders

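Stop the fetcher threads for these partitions.

Update the metadata of these partitions (through partition.makeLeader).

Build the set of partitions that newly became leader.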

    private def makeLeaders(controllerId: Int,
                            epoch: Int,
                            partitionState: Map[Partition, PartitionState],
                            correlationId: Int,
                            responseMap: mutable.Map[TopicPartition, Short]): Set[Partition] = {
      // Build the result that becomeLeaderOrFollower needs to return
      for (partition <- partitionState.keys)
        responseMap.put(partition.topicPartition, Errors.NONE.code)

      val partitionsToMakeLeaders: mutable.Set[Partition] = mutable.Set()

      try {
        // First stop fetchers for all the partitions
        replicaFetcherManager.removeFetcherForPartitions(partitionState.keySet.map(_.topicPartition))
        // Update the partition information to be the leader
        // and collect the partitions that newly became leader
        partitionState.foreach{ case (partition, partitionStateInfo) =>
          if (partition.makeLeader(controllerId, partitionStateInfo, correlationId))
            partitionsToMakeLeaders += partition
          else
            stateChangeLogger.info(("Broker %d skipped the become-leader state change after marking its partition as leader with correlation id %d from " +
              "controller %d epoch %d for partition %s since it is already the leader for the partition.")
              .format(localBrokerId, correlationId, controllerId, epoch, partition.topicPartition))
        }
      } catch {
        case e: Throwable =>
          partitionState.keys.foreach { partition =>
            val errorMsg = ("Error on broker %d while processing LeaderAndIsr request correlationId %d received from controller %d" +
              " epoch %d for partition %s").format(localBrokerId, correlationId, controllerId, epoch, partition.topicPartition)
            stateChangeLogger.error(errorMsg, e)
          }
          // Re-throw the exception for it to be caught in KafkaApis
          throw e
      }

      partitionsToMakeLeaders
    }
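One detail of makeLeaders that is easy to miss: the returned set contains only the partitions for which makeLeader returned true, that is, partitions this broker was not already leading. A minimal sketch of that behaviour, with hypothetical names (NewLeaderSketch, PartitionModel) and everything except the leader bookkeeping stripped away:

```scala
object NewLeaderSketch {
  // Hypothetical partition model that only tracks who the leader is.
  final class PartitionModel(val name: String) {
    private var leaderReplicaIdOpt: Option[Int] = None

    // Returns true only when the local broker was not already the leader.
    def makeLeader(localBrokerId: Int): Boolean =
      if (leaderReplicaIdOpt.contains(localBrokerId)) false
      else {
        leaderReplicaIdOpt = Some(localBrokerId)
        true
      }
  }

  // Collects the names of the partitions that newly became leader.
  def makeLeaders(localBrokerId: Int, partitions: Seq[PartitionModel]): Set[String] =
    partitions.filter(_.makeLeader(localBrokerId)).map(_.name).toSet
}
```

Calling makeLeaders twice with the same partitions returns every partition the first time and an empty set the second time, so in this model the coordinator callback would only see genuinely new leaderships.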

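partition.makeLeader(controllerId, partitionStateInfo, correlationId) processes the partition metadata and updates the HW. It calls maybeIncrementLeaderHW, which tries to advance the HW: if another replica is not far behind the leader and its offset is larger than the previous HW, the growth of the HW is held back so that this replica gets a chance to join the ISR.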

    def makeLeader(controllerId: Int, partitionStateInfo: PartitionState, correlationId: Int): Boolean = {
      val (leaderHWIncremented, isNewLeader) = inWriteLock(leaderIsrUpdateLock) {
        val allReplicas = partitionStateInfo.replicas.asScala.map(_.toInt)
        // record the epoch of the controller that made the leadership decision. This is useful while updating the isr
        // to maintain the decision maker controller's epoch in the zookeeper path
        controllerEpoch = partitionStateInfo.controllerEpoch
        // add replicas that are new
        // Create any missing replicas and build the new ISR
        allReplicas.foreach(replica => getOrCreateReplica(replica))
        val newInSyncReplicas = partitionStateInfo.isr.asScala.map(r => getOrCreateReplica(r)).toSet
        // remove assigned replicas that have been removed by the controller
        // i.e. drop every replica that is no longer in the new assigned replica list
        (assignedReplicas.map(_.brokerId) -- allReplicas).foreach(removeReplica)
        inSyncReplicas = newInSyncReplicas
        leaderEpoch = partitionStateInfo.leaderEpoch
        zkVersion = partitionStateInfo.zkVersion
        // Whether this broker is newly becoming leader of the partition (it was not the leader before)
        val isNewLeader =
          if (leaderReplicaIdOpt.isDefined && leaderReplicaIdOpt.get == localBrokerId) {
            false
          } else {
            leaderReplicaIdOpt = Some(localBrokerId)
            true
          }
        val leaderReplica = getReplica().get
        val curLeaderLogEndOffset = leaderReplica.logEndOffset.messageOffset
        val curTimeMs = time.milliseconds
        // initialize lastCaughtUpTime of replicas as well as their lastFetchTimeMs and lastFetchLeaderLogEndOffset.
        // Initialization performed by the leader
        (assignedReplicas - leaderReplica).foreach { replica =>
          val lastCaughtUpTimeMs = if (inSyncReplicas.contains(replica)) curTimeMs else 0L
          replica.resetLastCaughtUpTime(curLeaderLogEndOffset, curTimeMs, lastCaughtUpTimeMs)
        }
        // we may need to increment high watermark since ISR could be down to 1
        if (isNewLeader) {
          // construct the high watermark metadata for the new leader replica
          leaderReplica.convertHWToLocalOffsetMetadata()
          // reset log end offset for remote replicas
          assignedReplicas.filter(_.brokerId != localBrokerId).foreach(_.updateLogReadResult(LogReadResult.UnknownLogReadResult))
        }
        // Try to advance the HW. If another replica is not far behind the leader and its offset is larger than
        // the previous HW, HW growth is held back so that the replica gets a chance to join the ISR
        (maybeIncrementLeaderHW(leaderReplica), isNewLeader)
      }
      // some delayed operations may be unblocked after HW changed
      // Once the HW has moved, some delayed requests can be completed
      if (leaderHWIncremented)
        tryCompleteDelayedRequests()
      isNewLeader
    }
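The idea behind maybeIncrementLeaderHW can be illustrated with a simplified model. This is a sketch under stated assumptions, not the method's actual body: HighWatermarkSketch, ReplicaView, candidateHighWatermark and maybeIncrement are hypothetical names, offsets are plain Long values instead of offset metadata, and maxLagMs stands in for the replica lag time limit configured on the broker. The assumption modelled here is that the candidate HW is the smallest log end offset among replicas that are either in the ISR or have caught up with the leader recently.

```scala
object HighWatermarkSketch {
  // Hypothetical, simplified replica view: log end offset and last caught-up time.
  final case class ReplicaView(brokerId: Int, logEndOffset: Long, lastCaughtUpTimeMs: Long)

  // Candidate HW: smallest log end offset among replicas that are in the ISR
  // or that caught up with the leader within the last maxLagMs milliseconds.
  // The leader is always in the ISR, so the filtered list is never empty.
  def candidateHighWatermark(replicas: Seq[ReplicaView],
                             isr: Set[Int],
                             nowMs: Long,
                             maxLagMs: Long): Long =
    replicas
      .filter(r => isr.contains(r.brokerId) || nowMs - r.lastCaughtUpTimeMs <= maxLagMs)
      .map(_.logEndOffset)
      .min

  // The HW only moves forward; returns (resulting HW, whether it was incremented).
  def maybeIncrement(currentHw: Long, candidate: Long): (Long, Boolean) =
    if (candidate > currentHw) (candidate, true) else (currentHw, false)
}
```

With a leader at offset 100, an ISR follower at 90, and a non-ISR follower at 80 that caught up recently, the candidate is 80 rather than 90. Counting the lagging replica keeps the HW lower for a while, which is what gives that replica the chance to rejoin the ISR.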

makeFollowers

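Handle the partitions that newly became follower:

Remove these partitions from the leader partition set.

Mark them as follower, which blocks producer requests.

Remove their fetcher threads.

Truncate the local logs of these partitions to the HW.

Try to complete pending delayed produce and fetch requests.

If the broker is not shutting down, start fetching data from the new leader.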

    private def makeFollowers(controllerId: Int,
                              epoch: Int,
                              partitionState: Map[Partition, PartitionState],
                              correlationId: Int,
                              responseMap: mutable.Map[TopicPartition, Short],
                              metadataCache: MetadataCache) : Set[Partition] = {
      partitionState.keys.foreach { partition =>
        stateChangeLogger.trace(("Broker %d handling LeaderAndIsr request correlationId %d from controller %d epoch %d " +
          "starting the become-follower transition for partition %s")
          .format(localBrokerId, correlationId, controllerId, epoch, partition.topicPartition))
      }

      // Build the result that becomeLeaderOrFollower needs to return
      for (partition <- partitionState.keys)
        responseMap.put(partition.topicPartition, Errors.NONE.code)

      val partitionsToMakeFollower: mutable.Set[Partition] = mutable.Set()

      try {
        // TODO: Delete leaders from LeaderAndIsrRequest
        partitionState.foreach{ case (partition, partitionStateInfo) =>
          val newLeaderBrokerId = partitionStateInfo.leader
          metadataCache.getAliveBrokers.find(_.id == newLeaderBrokerId) match {
            // Only change partition state when the leader is available
            case Some(_) =>
              // Collect the partitions that actually changed to follower
              if (partition.makeFollower(controllerId, partitionStateInfo, correlationId))
                partitionsToMakeFollower += partition
              else
                stateChangeLogger.info(("Broker %d skipped the become-follower state change after marking its partition as follower with correlation id %d from " +
                  "controller %d epoch %d for partition %s since the new leader %d is the same as the old leader")
                  .format(localBrokerId, correlationId, controllerId, partitionStateInfo.controllerEpoch,
                    partition.topicPartition, newLeaderBrokerId))
            case None =>
              // The leader broker should always be present in the metadata cache.
              // If not, we should record the error message and abort the transition process for this partition
              stateChangeLogger.error(("Broker %d received LeaderAndIsrRequest with correlation id %d from controller" +
                " %d epoch %d for partition %s but cannot become follower since the new leader %d is unavailable.")
                .format(localBrokerId, correlationId, controllerId, partitionStateInfo.controllerEpoch,
                  partition.topicPartition, newLeaderBrokerId))
              // Create the local replica even if the leader is unavailable. This is required to ensure that we include
              // the partition's high watermark in the checkpoint file (see KAFKA-1647)
              partition.getOrCreateReplica()
          }
        }

        // Remove the fetcher threads
        replicaFetcherManager.removeFetcherForPartitions(partitionsToMakeFollower.map(_.topicPartition))
        // Truncate the local logs to the HW
        logManager.truncateTo(partitionsToMakeFollower.map { partition =>
          (partition.topicPartition, partition.getOrCreateReplica().highWatermark.messageOffset)
        }.toMap)
        // The partition state changed, so try to complete delayed produce and fetch requests
        partitionsToMakeFollower.foreach { partition =>
          val topicPartitionOperationKey = new TopicPartitionOperationKey(partition.topicPartition)
          tryCompleteDelayedProduce(topicPartitionOperationKey)
          tryCompleteDelayedFetch(topicPartitionOperationKey)
        }

        if (isShuttingDown.get()) {
          partitionsToMakeFollower.foreach { partition =>
            stateChangeLogger.trace(("Broker %d skipped the adding-fetcher step of the become-follower state change with correlation id %d from " +
              "controller %d epoch %d for partition %s since it is shutting down").format(localBrokerId, correlationId,
              controllerId, epoch, partition.topicPartition))
          }
        }
        else {
          // we do not need to check if the leader exists again since this has been done at the beginning of this process
          // Reset the fetch position and add the partitions to the fetcher
          val partitionsToMakeFollowerWithLeaderAndOffset = partitionsToMakeFollower.map(partition =>
            partition.topicPartition -> BrokerAndInitialOffset(
              metadataCache.getAliveBrokers.find(_.id == partition.leaderReplicaIdOpt.get).get.getBrokerEndPoint(config.interBrokerListenerName),
              partition.getReplica().get.logEndOffset.messageOffset)).toMap
          replicaFetcherManager.addFetcherForPartitions(partitionsToMakeFollowerWithLeaderAndOffset)
        }
      } catch {
        case e: Throwable =>
          val errorMsg = ("Error on broker %d while processing LeaderAndIsr request with correlationId %d received from controller %d " +
            "epoch %d").format(localBrokerId, correlationId, controllerId, epoch)
          stateChangeLogger.error(errorMsg, e)
          // Re-throw the exception for it to be caught in KafkaApis
          throw e
      }

      partitionsToMakeFollower
    }
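The order of the truncate and fetch steps matters: the follower first truncates its log to the HW and only then picks its initial fetch offset, which is the log end offset after truncation. The sketch below illustrates this with a toy log. FollowerTransitionSketch, LocalLog and becomeFollower are hypothetical names, and the log is assumed to start at offset 0 and to hold one message per offset.

```scala
object FollowerTransitionSketch {
  // Hypothetical local log: just the offsets of the messages it holds,
  // assumed to start at offset 0.
  final case class LocalLog(offsets: Vector[Long]) {
    // Offset that the next appended message would receive.
    def logEndOffset: Long = if (offsets.isEmpty) 0L else offsets.last + 1

    // Drop every message whose offset is at or beyond targetOffset.
    def truncateTo(targetOffset: Long): LocalLog =
      LocalLog(offsets.filter(_ < targetOffset))
  }

  // Become-follower in miniature: truncate to the HW, then start fetching
  // from the log end offset of the truncated log.
  def becomeFollower(log: LocalLog, highWatermark: Long): (LocalLog, Long) = {
    val truncated = log.truncateTo(highWatermark)
    (truncated, truncated.logEndOffset)
  }
}
```

For a log holding offsets 0 to 4 and a HW of 3, the truncated log keeps offsets 0 to 2 and fetching resumes at offset 3. Messages above the HW were never committed, so discarding them and fetching them again from the new leader keeps the follower consistent with whatever the new leader holds at those offsets.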

Original link: https://fxbing.github.io/2021/06/05/kafka源码学习:KafkaApis-LEADER-AND-ISR/

The source code in this article is based on Kafka 0.10.2.
