Python 中的极端梯度增强(XGBoost)集成

极端梯度提升是随机梯度提升集成算法的高效开源实现。 如何使用scikit-learn API开发用于分类和回归的XGBoost集成。 如何探索XGBoost模型超参数对模型性能的影响。

极端梯度提升算法 XGBoost Scikit-Learn API XGBoost分类集成 XGBoost回归集成 XGBoost超参数 探索树木数量 探索树深 探索学习率 探索样品数量 探索特征数量

https://machinelearningmastery.com/gentle-introduction-gradient-boosting-algorithm-machine-learning/sudo pip install xgboost

# check xgboost version

import xgboost

print(xgboost.__version__)

1.1.1

sudo pip install xgboost==1.0.1

FutureWarning: pandas.util.testing is deprecated. Use the functions in the public API at pandas.testing instead.

https://xgboost.readthedocs.io/en/latest/build.html

make_classification()函数创建具有1,000个示例和20个输入功能的综合二进制分类问题。# test classification dataset

from sklearn.datasets import make_classification

# define dataset

X, y = make_classification(n_samples=1000, n_features=20, n_informative=15, n_redundant=5, random_state=7)

# summarize the dataset

print(X.shape, y.shape)

(1000, 20) (1000,)

# evaluate xgboost algorithm for classification

from numpy import mean

from numpy import std

from sklearn.datasets import make_classification

from sklearn.model_selection import cross_val_score

from sklearn.model_selection import RepeatedStratifiedKFold

from xgboost import XGBClassifier

# define dataset

X, y = make_classification(n_samples=1000, n_features=20, n_informative=15, n_redundant=5, random_state=7)

# define the model

model = XGBClassifier()

# evaluate the model

cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=3, random_state=1)

n_scores = cross_val_score(model, X, y, scoring='accuracy', cv=cv, n_jobs=-1)

# report performance

print('Accuracy: %.3f (%.3f)' % (mean(n_scores), std(n_scores)))

Accuracy: 0.925 (0.028)

# make predictions using xgboost for classification

from numpy import asarray

from sklearn.datasets import make_classification

from xgboost import XGBClassifier

# define dataset

X, y = make_classification(n_samples=1000, n_features=20, n_informative=15, n_redundant=5, random_state=7)

# define the model

model = XGBClassifier()

# fit the model on the whole dataset

model.fit(X, y)

# make a single prediction

row = [0.2929949,-4.21223056,-1.288332,-2.17849815,-0.64527665,2.58097719,0.28422388,-7.1827928,-1.91211104,2.73729512,0.81395695,3.96973717,-2.66939799,3.34692332,4.19791821,0.99990998,-0.30201875,-4.43170633,-2.82646737,0.44916808]

row = asarray([row])

yhat = model.predict(row)

print('Predicted Class: %d' % yhat[0])

Predicted Class: 1

make_regression()函数创建具有1000个示例和20个输入要素的综合回归问题。下面列出了完整的示例。# test regression dataset

from sklearn.datasets import make_regression

# define dataset

X, y = make_regression(n_samples=1000, n_features=20, n_informative=15, noise=0.1, random_state=7)

# summarize the dataset

print(X.shape, y.shape)

(1000, 20) (1000,)

# evaluate xgboost ensemble for regression

from numpy import mean

from numpy import std

from sklearn.datasets import make_regression

from sklearn.model_selection import cross_val_score

from sklearn.model_selection import RepeatedKFold

from xgboost import XGBRegressor

# define dataset

X, y = make_regression(n_samples=1000, n_features=20, n_informative=15, noise=0.1, random_state=7)

# define the model

model = XGBRegressor()

# evaluate the model

cv = RepeatedKFold(n_splits=10, n_repeats=3, random_state=1)

n_scores = cross_val_score(model, X, y, scoring='neg_mean_absolute_error', cv=cv, n_jobs=-1, error_score='raise')

# report performance

print('MAE: %.3f (%.3f)' % (mean(n_scores), std(n_scores)))

MAE: -76.447 (3.859)

prepare()函数对新数据进行预测。与分类一样,单行数据必须以NumPy数组格式表示为二维矩阵。下面的示例在我们的回归数据集中展示了这一点。# gradient xgboost for making predictions for regression

from numpy import asarray

from sklearn.datasets import make_regression

from xgboost import XGBRegressor

# define dataset

X, y = make_regression(n_samples=1000, n_features=20, n_informative=15, noise=0.1, random_state=7)

# define the model

model = XGBRegressor()

# fit the model on the whole dataset

model.fit(X, y)

# make a single prediction

row = [0.20543991,-0.97049844,-0.81403429,-0.23842689,-0.60704084,-0.48541492,0.53113006,2.01834338,-0.90745243,-1.85859731,-1.02334791,-0.6877744,0.60984819,-0.70630121,-1.29161497,1.32385441,1.42150747,1.26567231,2.56569098,-0.11154792]

row = asarray([row])

yhat = model.predict(row)

print('Prediction: %d' % yhat[0])

Prediction: 50

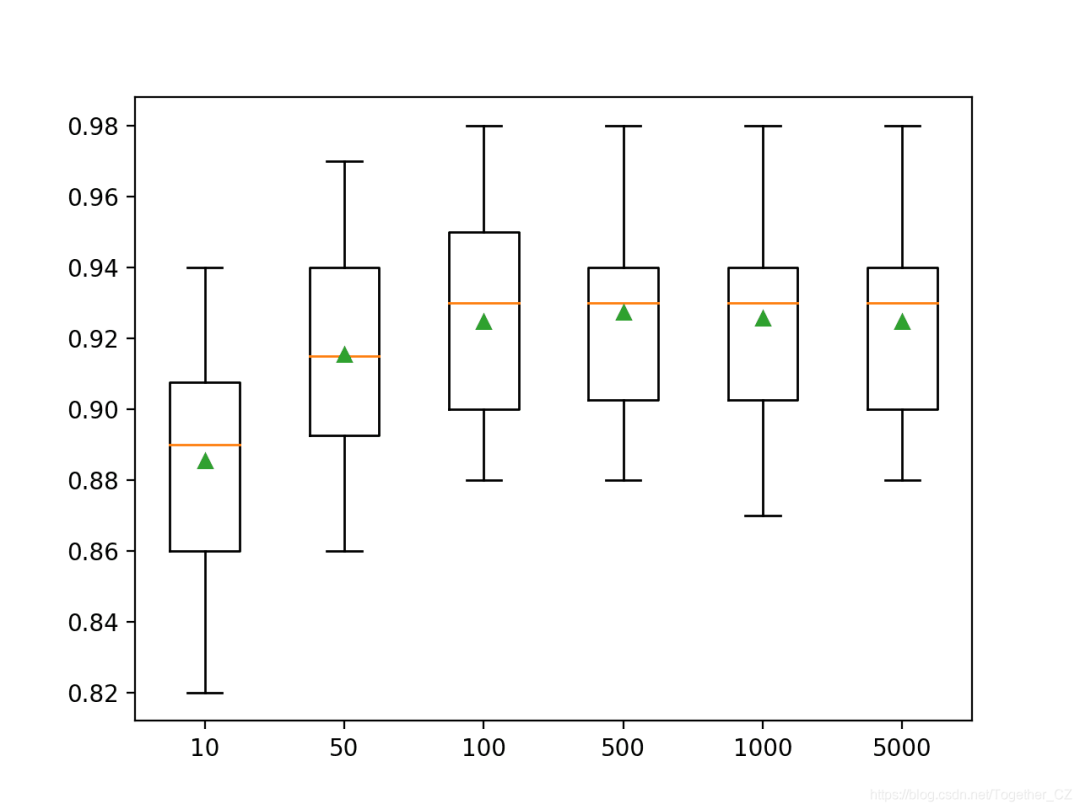

n_estimators”参数设置树的数量,默认为100。下面的示例探讨值在10到5,000之间的树木数量的影响。# explore xgboost number of trees effect on performance

from numpy import mean

from numpy import std

from sklearn.datasets import make_classification

from sklearn.model_selection import cross_val_score

from sklearn.model_selection import RepeatedStratifiedKFold

from xgboost import XGBClassifier

from matplotlib import pyplot

# get the dataset

def get_dataset():

X, y = make_classification(n_samples=1000, n_features=20, n_informative=15, n_redundant=5, random_state=7)

return X, y

# get a list of models to evaluate

def get_models():

models = dict()

trees = [10, 50, 100, 500, 1000, 5000]

for n in trees:

models[str(n)] = XGBClassifier(n_estimators=n)

return models

# evaluate a give model using cross-validation

def evaluate_model(model):

cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=3, random_state=1)

scores = cross_val_score(model, X, y, scoring='accuracy', cv=cv, n_jobs=-1)

return scores

# define dataset

X, y = get_dataset()

# get the models to evaluate

models = get_models()

# evaluate the models and store results

results, names = list(), list()

for name, model in models.items():

scores = evaluate_model(model)

results.append(scores)

names.append(name)

print('>%s %.3f (%.3f)' % (name, mean(scores), std(scores)))

# plot model performance for comparison

pyplot.boxplot(results, labels=names, showmeans=True)

pyplot.show()

>10 0.885 (0.029)

>50 0.915 (0.029)

>100 0.925 (0.028)

>500 0.927 (0.028)

>1000 0.926 (0.028)

>5000 0.925 (0.027)

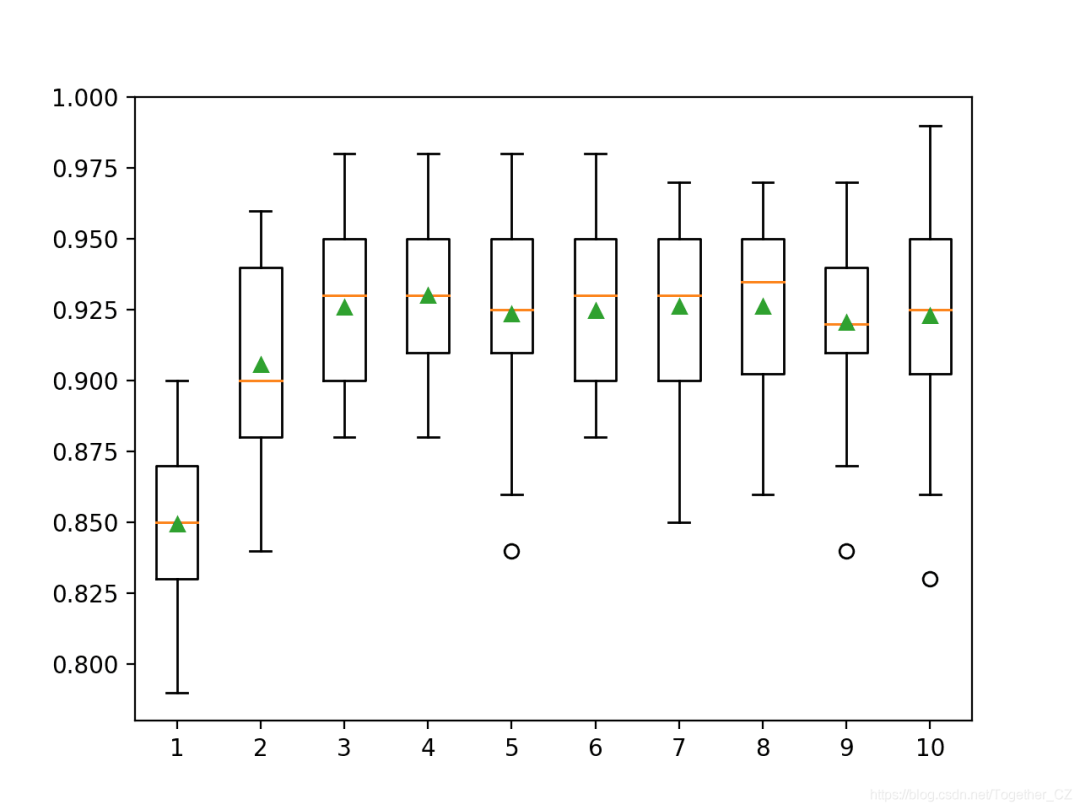

max_depth”参数控制,默认为6。下面的示例探讨了1到10之间的树深及其对模型性能的影响。# explore xgboost tree depth effect on performance

from numpy import mean

from numpy import std

from sklearn.datasets import make_classification

from sklearn.model_selection import cross_val_score

from sklearn.model_selection import RepeatedStratifiedKFold

from xgboost import XGBClassifier

from matplotlib import pyplot

# get the dataset

def get_dataset():

X, y = make_classification(n_samples=1000, n_features=20, n_informative=15, n_redundant=5, random_state=7)

return X, y

# get a list of models to evaluate

def get_models():

models = dict()

for i in range(1,11):

models[str(i)] = XGBClassifier(max_depth=i)

return models

# evaluate a give model using cross-validation

def evaluate_model(model):

cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=3, random_state=1)

scores = cross_val_score(model, X, y, scoring='accuracy', cv=cv, n_jobs=-1)

return scores

# define dataset

X, y = get_dataset()

# get the models to evaluate

models = get_models()

# evaluate the models and store results

results, names = list(), list()

for name, model in models.items():

scores = evaluate_model(model)

results.append(scores)

names.append(name)

print('>%s %.3f (%.3f)' % (name, mean(scores), std(scores)))

# plot model performance for comparison

pyplot.boxplot(results, labels=names, showmeans=True)

pyplot.show()

>1 0.849 (0.028)

>2 0.906 (0.032)

>3 0.926 (0.027)

>4 0.930 (0.027)

>5 0.924 (0.031)

>6 0.925 (0.028)

>7 0.926 (0.030)

>8 0.926 (0.029)

>9 0.921 (0.032)

>10 0.923 (0.035)

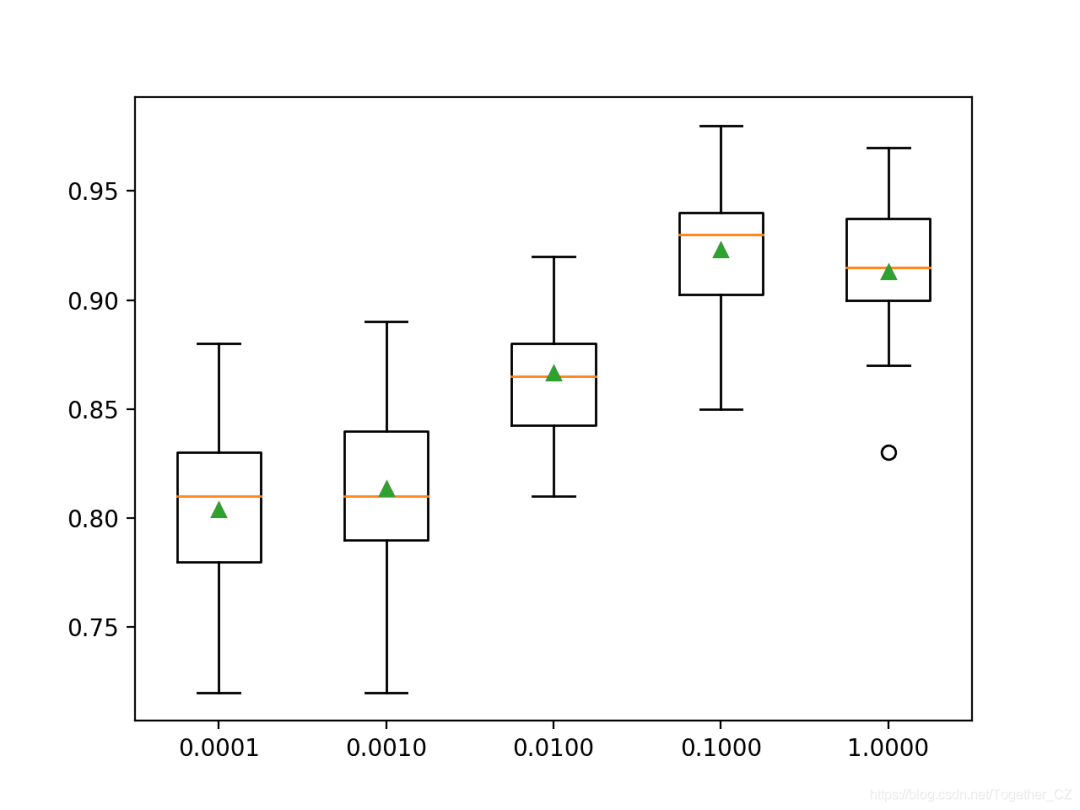

# explore xgboost learning rate effect on performance

from numpy import mean

from numpy import std

from sklearn.datasets import make_classification

from sklearn.model_selection import cross_val_score

from sklearn.model_selection import RepeatedStratifiedKFold

from xgboost import XGBClassifier

from matplotlib import pyplot

# get the dataset

def get_dataset():

X, y = make_classification(n_samples=1000, n_features=20, n_informative=15, n_redundant=5, random_state=7)

return X, y

# get a list of models to evaluate

def get_models():

models = dict()

rates = [0.0001, 0.001, 0.01, 0.1, 1.0]

for r in rates:

key = '%.4f' % r

models[key] = XGBClassifier(eta=r)

return models

# evaluate a give model using cross-validation

def evaluate_model(model):

cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=3, random_state=1)

scores = cross_val_score(model, X, y, scoring='accuracy', cv=cv, n_jobs=-1)

return scores

# define dataset

X, y = get_dataset()

# get the models to evaluate

models = get_models()

# evaluate the models and store results

results, names = list(), list()

for name, model in models.items():

scores = evaluate_model(model)

results.append(scores)

names.append(name)

print('>%s %.3f (%.3f)' % (name, mean(scores), std(scores)))

# plot model performance for comparison

pyplot.boxplot(results, labels=names, showmeans=True)

pyplot.show()

>0.0001 0.804 (0.039)

>0.0010 0.814 (0.037)

>0.0100 0.867 (0.027)

>0.1000 0.923 (0.030)

>1.0000 0.913 (0.030)

# explore xgboost subsample ratio effect on performance

from numpy import arange

from numpy import mean

from numpy import std

from sklearn.datasets import make_classification

from sklearn.model_selection import cross_val_score

from sklearn.model_selection import RepeatedStratifiedKFold

from xgboost import XGBClassifier

from matplotlib import pyplot

# get the dataset

def get_dataset():

X, y = make_classification(n_samples=1000, n_features=20, n_informative=15, n_redundant=5, random_state=7)

return X, y

# get a list of models to evaluate

def get_models():

models = dict()

for i in arange(0.1, 1.1, 0.1):

key = '%.1f' % i

models[key] = XGBClassifier(subsample=i)

return models

# evaluate a give model using cross-validation

def evaluate_model(model):

cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=3, random_state=1)

scores = cross_val_score(model, X, y, scoring='accuracy', cv=cv, n_jobs=-1)

return scores

# define dataset

X, y = get_dataset()

# get the models to evaluate

models = get_models()

# evaluate the models and store results

results, names = list(), list()

for name, model in models.items():

scores = evaluate_model(model)

results.append(scores)

names.append(name)

print('>%s %.3f (%.3f)' % (name, mean(scores), std(scores)))

# plot model performance for comparison

pyplot.boxplot(results, labels=names, showmeans=True)

pyplot.show()

>0.1 0.876 (0.027)

>0.2 0.912 (0.033)

>0.3 0.917 (0.032)

>0.4 0.925 (0.026)

>0.5 0.928 (0.027)

>0.6 0.926 (0.024)

>0.7 0.925 (0.031)

>0.8 0.928 (0.028)

>0.9 0.928 (0.025)

>1.0 0.925 (0.028)

colsample_bytree”参数指定,并且默认为训练数据集中的所有特征,例如 100%或1.0的值。您还可以为每个拆分采样列,这由“ colsample_bylevel”参数控制,但是在此我们将不讨论此超参数。下面的示例探讨了特征数量对模型性能的影响,比率从10%到100%以10%的增量变化。# explore xgboost column ratio per tree effect on performance

from numpy import arange

from numpy import mean

from numpy import std

from sklearn.datasets import make_classification

from sklearn.model_selection import cross_val_score

from sklearn.model_selection import RepeatedStratifiedKFold

from xgboost import XGBClassifier

from matplotlib import pyplot

# get the dataset

def get_dataset():

X, y = make_classification(n_samples=1000, n_features=20, n_informative=15, n_redundant=5, random_state=7)

return X, y

# get a list of models to evaluate

def get_models():

models = dict()

for i in arange(0.1, 1.1, 0.1):

key = '%.1f' % i

models[key] = XGBClassifier(colsample_bytree=i)

return models

# evaluate a give model using cross-validation

def evaluate_model(model):

cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=3, random_state=1)

scores = cross_val_score(model, X, y, scoring='accuracy', cv=cv, n_jobs=-1)

return scores

# define dataset

X, y = get_dataset()

# get the models to evaluate

models = get_models()

# evaluate the models and store results

results, names = list(), list()

for name, model in models.items():

scores = evaluate_model(model)

results.append(scores)

names.append(name)

print('>%s %.3f (%.3f)' % (name, mean(scores), std(scores)))

# plot model performance for comparison

pyplot.boxplot(results, labels=names, showmeans=True)

pyplot.show()

>0.1 0.861 (0.033)

>0.2 0.906 (0.027)

>0.3 0.923 (0.029)

>0.4 0.917 (0.029)

>0.5 0.928 (0.030)

>0.6 0.929 (0.031)

>0.7 0.924 (0.027)

>0.8 0.931 (0.025)

>0.9 0.927 (0.033)

>1.0 0.925 (0.028)

创建箱须图以分配每个配置的列比率的准确性得分。

我们可以看到提高模型性能的总体趋势可能达到60%的峰值并保持一定水平。

作者:沂水寒城,CSDN博客专家,个人研究方向:机器学习、深度学习、NLP、CV

Blog: http://yishuihancheng.blog.csdn.net

赞 赏 作 者

更多阅读

特别推荐

点击下方阅读原文加入社区会员

评论