Monitoring Harbor with Prometheus

Hello! I'm Li Dabai, and today I'm sharing how to monitor the Harbor service with Prometheus.

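Earlier articles covered the design and deployment of a high-availability Harbor architecture based on the offline installer. So if the Harbor host, the Harbor service, or one of its components fails, how do we notice and respond quickly?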

Harbor v2.2 and later can be monitored with Prometheus, so your Harbor version must be 2.2 or later.

This article deploys the Prometheus services from their binary releases in a simple way, to help you get Prometheus monitoring Harbor quickly.

Monitoring Harbor with Prometheus (binary deployment)

1. Deployment overview

The following services are deployed on the Harbor host:

prometheus node-exporter grafana alertmanager

Harbor version: 2.4.2

Host: 192.168.2.22

2. Enable the metrics service in Harbor

2.1 Stop the Harbor service

$ cd /app/harbor

$ docker-compose down

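2.2 Edit harbor.yml

Edit the metric section of the Harbor configuration file to enable the harbor-exporter component.

$ cat harbor.yml

### metric section

metric:
  enabled: true   # must be changed to true to enable metrics
  port: 9099      # the default is 9090, which conflicts with Prometheus, so change it
  path: /metrics

If you are not familiar with Harbor, back up the configuration file before editing it.

2.3 Inject the configuration into the components

$ ./prepare

2.4 Install Harbor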

$ ./install.sh --with-notary --with-trivy --with-chartmuseum

$ docker-compose ps

NAME COMMAND SERVICE STATUS PORTS

chartmuseum "./docker-entrypoint…" chartmuseum running (healthy)

harbor-core "/harbor/entrypoint.…" core running (healthy)

harbor-db "/docker-entrypoint.…" postgresql running (healthy)

harbor-exporter "/harbor/entrypoint.…" exporter running

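You can see that a harbor-exporter component has been added.

3. Harbor metrics

With the harbor-exporter component enabled, you can use curl to see which metrics Harbor exposes.

Harbor exposes metrics for the following four key components.

3.1 harbor-exporter component metrics

The exporter metrics relate to the Harbor instance configuration, and some of the data is collected from the Harbor database. They are available at <harbor_instance>:<metrics_port>/<metrics_path>.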

$ curl http://192.168.2.22:9099/metrics
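The examples in this section pipe the output through grep, which matches the name anywhere in a line, including the HELP and TYPE comments. As a minimal sketch of a stricter filter, the hypothetical helper metric_samples below (not part of Harbor or Prometheus) prints only the sample lines whose metric name matches exactly. It runs on inline sample data rather than a live endpoint.

```shell
#!/usr/bin/env bash
# metric_samples NAME: read Prometheus text-format metrics on stdin and print
# only the sample lines of the metric called NAME (comment lines are dropped).
# Hypothetical helper for illustration; not shipped with Harbor.
metric_samples() {
  awk -v name="$1" '
    /^#/ { next }                  # skip "# HELP" and "# TYPE" comments
    {
      metric = $1
      sub(/[{].*/, "", metric)     # strip the {label="..."} part, if any
      if (metric == name) print
    }'
}

# Inline sample data taken from the outputs shown in this article.
printf '%s\n' \
  '# HELP harbor_health Running status of Harbor' \
  '# TYPE harbor_health gauge' \
  'harbor_health 1' \
  'harbor_up{component="core"} 1' | metric_samples harbor_health
```

Against a running instance the same function could be fed from curl, for example: curl -s http://192.168.2.22:9099/metrics | metric_samples harbor_health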

1) harbor_project_total

harbor_project_total reports the total number of public and private projects.

$ curl http://192.168.2.22:9099/metrics | grep harbor_project_total

# HELP harbor_project_total Total projects number

# TYPE harbor_project_total gauge

harbor_project_total{public="true"} 1 # number of public projects

harbor_project_total{public="false"} 1 # number of private projects

2) harbor_project_repo_total

Total number of repositories in a project.

$ curl http://192.168.2.22:9099/metrics | grep harbor_project_repo_total

# HELP harbor_project_repo_total Total project repos number

# TYPE harbor_project_repo_total gauge

harbor_project_repo_total{project_name="library",public="true"} 0

3) harbor_project_member_total

Total number of members in a project.

$ curl http://192.168.2.22:9099/metrics | grep harbor_project_member_total

# HELP harbor_project_member_total Total members number of a project

# TYPE harbor_project_member_total gauge

harbor_project_member_total{project_name="library"} 1 # the library project has 1 member

4) harbor_project_quota_usage_byte

Total resources used by a project.

$ curl http://192.168.2.22:9099/metrics | grep harbor_project_quota_usage_byte

# HELP harbor_project_quota_usage_byte The used resource of a project

# TYPE harbor_project_quota_usage_byte gauge

harbor_project_quota_usage_byte{project_name="library"} 0

5) harbor_project_quota_byte

The quota configured for a project.

$ curl http://192.168.2.22:9099/metrics | grep harbor_project_quota_byte

# HELP harbor_project_quota_byte The quota of a project

# TYPE harbor_project_quota_byte gauge

harbor_project_quota_byte{project_name="library"} -1 # -1 means unlimited
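The two quota metrics are most useful together. A minimal sketch, assuming you want usage as a percentage of the quota: the hypothetical helper quota_usage_pct divides harbor_project_quota_usage_byte by harbor_project_quota_byte and treats -1 as unlimited. The second call uses made-up numbers, and a non-zero quota is assumed.

```shell
#!/usr/bin/env bash
# quota_usage_pct USED QUOTA: print usage as a percentage of the quota,
# or "unlimited" when QUOTA is -1 (Harbor's value for "no limit").
# Hypothetical helper for illustration; assumes QUOTA is not 0.
quota_usage_pct() {
  if [ "$2" = "-1" ]; then
    echo "unlimited"
  else
    awk -v used="$1" -v quota="$2" 'BEGIN { printf "%.1f\n", used / quota * 100 }'
  fi
}

quota_usage_pct 0 -1                    # library project from the sample output
quota_usage_pct 536870912 1073741824    # made-up values: 512 MiB used of 1 GiB
```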

6) harbor_artifact_pulled

Total number of artifact (image) pulls in a project.

$ curl http://192.168.2.22:9099/metrics | grep harbor_artifact_pulled

# HELP harbor_artifact_pulled The pull number of an artifact

# TYPE harbor_artifact_pulled gauge

harbor_artifact_pulled{project_name="library"} 0

7) harbor_project_artifact_total

Total number of artifacts in a project, by artifact type. Labels: artifact_type, project_name, public (true, false).

$ curl http://192.168.2.22:9099/metrics | grep harbor_project_artifact_total

8) harbor_health

Harbor health status.

$ curl http://192.168.2.22:9099/metrics | grep harbor_health

# HELP harbor_health Running status of Harbor

# TYPE harbor_health gauge

harbor_health 1 # 1 means healthy, 0 means unhealthy

9) harbor_system_info

Information about the Harbor instance. Labels: auth_mode (db_auth, ldap_auth, uaa_auth, http_auth, oidc_auth), harbor_version, self_registration (true, false).

$ curl http://192.168.2.22:9099/metrics | grep harbor_system_info

# HELP harbor_system_info Information of Harbor system

# TYPE harbor_system_info gauge

harbor_system_info{auth_mode="db_auth",harbor_version="v2.4.2-ef2e2e56",self_registration="false"} 1

10) harbor_up

Running status of each Harbor component. Label: component (chartmuseum, core, database, jobservice, portal, redis, registry, registryctl, trivy).

$ curl http://192.168.2.22:9099/metrics | grep harbor_up

# HELP harbor_up Running status of harbor component

# TYPE harbor_up gauge

harbor_up{component="chartmuseum"} 1

harbor_up{component="core"} 1

harbor_up{component="database"} 1

harbor_up{component="jobservice"} 1

harbor_up{component="portal"} 1

harbor_up{component="redis"} 1

harbor_up{component="registry"} 1

harbor_up{component="registryctl"} 1

harbor_up{component="trivy"} 1 # running status of the Trivy scanner
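Since every component reports 1 when it is up, a quick check only needs to list the ones that do not. The sketch below uses a hypothetical helper, harbor_down_components, that reads exporter output on stdin and prints the component label of every harbor_up sample whose value is not 1. The sample input marks trivy as down purely for illustration.

```shell
#!/usr/bin/env bash
# harbor_down_components: read exporter output on stdin and print the name of
# every component whose harbor_up value is not 1.
# Hypothetical helper for illustration; not shipped with Harbor.
harbor_down_components() {
  awk '
    /^harbor_up[{]/ && $NF != 1 {
      name = $1
      sub(/^.*component="/, "", name)   # drop everything up to the label value
      sub(/".*$/, "", name)             # drop the closing quote and the rest
      print name
    }'
}

# Made-up sample: core is up, trivy is down.
printf '%s\n' \
  '# TYPE harbor_up gauge' \
  'harbor_up{component="core"} 1' \
  'harbor_up{component="trivy"} 0' | harbor_down_components
```

With a live exporter the input could come from curl -s http://192.168.2.22:9099/metrics instead of printf.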

11) harbor_task_queue_size

Total number of tasks of each type in the queue.

$ curl http://192.168.2.22:9099/metrics | grep harbor_task_queue_size

# HELP harbor_task_queue_size Total number of tasks

# TYPE harbor_task_queue_size gauge

harbor_task_queue_size{type="DEMO"} 0

harbor_task_queue_size{type="GARBAGE_COLLECTION"} 0

harbor_task_queue_size{type="IMAGE_GC"} 0

harbor_task_queue_size{type="IMAGE_REPLICATE"} 0

harbor_task_queue_size{type="IMAGE_SCAN"} 0

harbor_task_queue_size{type="IMAGE_SCAN_ALL"} 0

harbor_task_queue_size{type="P2P_PREHEAT"} 0

harbor_task_queue_size{type="REPLICATION"} 0

harbor_task_queue_size{type="RETENTION"} 0

harbor_task_queue_size{type="SCHEDULER"} 0

harbor_task_queue_size{type="SLACK"} 0

harbor_task_queue_size{type="WEBHOOK"} 0

12) harbor_task_queue_latency

How long ago the next job to be processed was enqueued, by type.

$ curl http://192.168.2.22:9099/metrics | grep harbor_task_queue_latency

# HELP harbor_task_queue_latency how long ago the next job to be processed was enqueued

# TYPE harbor_task_queue_latency gauge

harbor_task_queue_latency{type="DEMO"} 0

harbor_task_queue_latency{type="GARBAGE_COLLECTION"} 0

harbor_task_queue_latency{type="IMAGE_GC"} 0

harbor_task_queue_latency{type="IMAGE_REPLICATE"} 0

harbor_task_queue_latency{type="IMAGE_SCAN"} 0

harbor_task_queue_latency{type="IMAGE_SCAN_ALL"} 0

harbor_task_queue_latency{type="P2P_PREHEAT"} 0

harbor_task_queue_latency{type="REPLICATION"} 0

harbor_task_queue_latency{type="RETENTION"} 0

harbor_task_queue_latency{type="SCHEDULER"} 0

harbor_task_queue_latency{type="SLACK"} 0

harbor_task_queue_latency{type="WEBHOOK"} 0

13) harbor_task_scheduled_total

Total number of scheduled jobs.

$ curl http://192.168.2.22:9099/metrics | grep harbor_task_scheduled_total

# HELP harbor_task_scheduled_total total number of scheduled job

# TYPE harbor_task_scheduled_total gauge

harbor_task_scheduled_total 0

14) harbor_task_concurrency

Total number of concurrent tasks of each type on a worker pool.

$ curl http://192.168.2.22:9099/metrics | grep harbor_task_concurrency

harbor_task_concurrency{pool="d4053262b74f0a7b83bc6add",type="GARBAGE_COLLECTION"} 0

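3.2 harbor-core component metrics

The following metrics are collected from the Harbor core component and are available at <harbor_instance>:<metrics_port>/<metrics_path>?comp=core.

1) harbor_core_http_inflight_requests

Number of requests currently in flight. Label: operation (the operationId value from the Harbor API; some legacy endpoints have no operationId, so their label value is unknown).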

$ curl http://192.168.2.22:9099/metrics?comp=core | grep harbor_core_http_inflight_requests

# HELP harbor_core_http_inflight_requests The total number of requests

# TYPE harbor_core_http_inflight_requests gauge

harbor_core_http_inflight_requests 0

2) harbor_core_http_request_duration_seconds

Duration of requests. Labels: method (GET, POST, HEAD, PATCH, PUT), operation (the operationId value from the Harbor API; some legacy endpoints have no operationId, so their label value is unknown), quantile.

$ curl http://192.168.2.22:9099/metrics?comp=core | grep harbor_core_http_request_duration_seconds

# HELP harbor_core_http_request_duration_seconds The time duration of the requests

# TYPE harbor_core_http_request_duration_seconds summary

harbor_core_http_request_duration_seconds{method="GET",operation="GetHealth",quantile="0.5"} 0.001797115

harbor_core_http_request_duration_seconds{method="GET",operation="GetHealth",quantile="0.9"} 0.010445204

harbor_core_http_request_duration_seconds{method="GET",operation="GetHealth",quantile="0.99"} 0.010445204

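3) harbor_core_http_request_total

Total number of requests. Labels: method (GET, POST, HEAD, PATCH, PUT), code, operation (the operationId value from the Harbor API; some legacy endpoints have no operationId, so their label value is unknown).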

$ curl http://192.168.2.22:9099/metrics?comp=core | grep harbor_core_http_request_total

# HELP harbor_core_http_request_total The total number of requests

# TYPE harbor_core_http_request_total counter

harbor_core_http_request_total{code="200",method="GET",operation="GetHealth"} 14

harbor_core_http_request_total{code="200",method="GET",operation="GetInternalconfig"} 1

harbor_core_http_request_total{code="200",method="GET",operation="GetPing"} 176

harbor_core_http_request_total{code="200",method="GET",operation="GetSystemInfo"} 14

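3.3 registry component metrics

The following metrics are collected from the registry (Docker Distribution) and are available at <harbor_instance>:<metrics_port>/<metrics_path>?comp=registry.

1) registry_http_in_flight_requests

In-flight HTTP requests. Label: handler.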

$ curl http://192.168.2.22:9099/metrics?comp=registry | grep registry_http_in_flight_requests

# HELP registry_http_in_flight_requests The in-flight HTTP requests

# TYPE registry_http_in_flight_requests gauge

registry_http_in_flight_requests{handler="base"} 0

registry_http_in_flight_requests{handler="blob"} 0

registry_http_in_flight_requests{handler="blob_upload"} 0

registry_http_in_flight_requests{handler="blob_upload_chunk"} 0

registry_http_in_flight_requests{handler="catalog"} 0

registry_http_in_flight_requests{handler="manifest"} 0

registry_http_in_flight_requests{handler="tags"} 0

2) registry_http_request_duration_seconds

HTTP request latency in seconds. Labels: handler, method (GET, POST, HEAD, PATCH, PUT), le.

$ curl http://192.168.2.22:9099/metrics?comp=registry | grep registry_http_request_duration_seconds

3) registry_http_request_size_bytes

HTTP request size in bytes.

$ curl http://192.168.2.22:9099/metrics?comp=registry | grep registry_http_request_size_bytes

3.4 jobservice component metrics

The following metrics are collected from the Harbor jobservice and are available at <harbor_instance>:<metrics_port>/<metrics_path>?comp=jobservice.

1) harbor_jobservice_info

Information about the jobservice.

$ curl http://192.168.2.22:9099/metrics?comp=jobservice | grep harbor_jobservice_info

# HELP harbor_jobservice_info the information of jobservice

# TYPE harbor_jobservice_info gauge

harbor_jobservice_info{node="f47de52e23b7:172.18.0.11",pool="35f1301b0e261d18fac7ba41",workers="10"} 1

2) harbor_jobservice_task_total

Number of tasks processed for each job type.

$ curl http://192.168.2.22:9099/metrics?comp=jobservice | grep harbor_jobservice_task_total

3) harbor_jobservice_task_process_time_seconds

Task processing time, that is, how long a task takes from the start of execution to completion.

$ curl http://192.168.2.22:9099/metrics?comp=jobservice | grep harbor_jobservice_task_process_time_seconds

4. Deploy Prometheus Server (binary)

4.1 Create the installation directory

$ mkdir /etc/prometheus

4.2 Download the package

$ wget https://github.com/prometheus/prometheus/releases/download/v2.36.2/prometheus-2.36.2.linux-amd64.tar.gz -c

$ tar zxvf prometheus-2.36.2.linux-amd64.tar.gz -C /etc/prometheus

$ cp /etc/prometheus/prometheus-2.36.2.linux-amd64/{prometheus,promtool} /usr/local/bin/

$ prometheus --version # check the version

prometheus, version 2.36.2 (branch: HEAD, revision: d7e7b8e04b5ecdc1dd153534ba376a622b72741b)

build user: root@f051ce0d6050

build date: 20220620-13:21:35

go version: go1.18.3

platform: linux/amd64

4.3 Edit the configuration file

Configure Prometheus to scrape the metrics that Harbor exposes.

$ cp /etc/prometheus/prometheus-2.36.2.linux-amd64/prometheus.yml /etc/prometheus/

$ cat <<EOF > /etc/prometheus/prometheus.yml

global:
  scrape_interval: 15s
  evaluation_interval: 15s

## Alertmanager address
alerting:
  alertmanagers:
    - static_configs:
        - targets: ["192.168.2.10:9093"]  # set this to your Alertmanager address

## Alerting rule files
rule_files:
  - /etc/prometheus/rules.yml

scrape_configs:
  - job_name: "prometheus"
    static_configs:
      - targets: ["localhost:9090"]

  - job_name: 'node-exporter'
    static_configs:
      - targets:
          - '192.168.2.22:9100'

  - job_name: "harbor-exporter"
    scrape_interval: 20s
    static_configs:
      - targets: ['192.168.2.22:9099']

  - job_name: 'harbor-core'
    params:
      comp: ['core']
    static_configs:
      - targets: ['192.168.2.22:9099']

  - job_name: 'harbor-registry'
    params:
      comp: ['registry']
    static_configs:
      - targets: ['192.168.2.22:9099']

  - job_name: 'harbor-jobservice'
    params:
      comp: ['jobservice']
    static_configs:
      - targets: ['192.168.2.22:9099']

EOF
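Each Harbor job above points at the same target and differs only in its params entry, which Prometheus sends as a URL query parameter. This sketch prints the URL each job ends up scraping, assuming the default http scheme and /metrics path. The function name harbor_metrics_url is made up for illustration, and the URLs match the curl commands used in section 3.

```shell
#!/usr/bin/env bash
# harbor_metrics_url HOST PORT [COMP]: print the URL that a scrape job with
# "params: comp: [COMP]" requests (default scheme http, default path /metrics).
# Hypothetical helper for illustration only.
harbor_metrics_url() {
  local url="http://$1:$2/metrics"
  if [ -n "${3:-}" ]; then
    url="$url?comp=$3"       # params become the query string
  fi
  echo "$url"
}

# One line per Harbor job in prometheus.yml; "" is the harbor-exporter job.
for comp in "" core registry jobservice; do
  harbor_metrics_url 192.168.2.22 9099 "$comp"
done
```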

4.4 Check the syntax

Check that the configuration file syntax is correct.

$ promtool check config /etc/prometheus/prometheus.yml

Checking /etc/prometheus/prometheus.yml

SUCCESS: /etc/prometheus/prometheus.yml is valid prometheus config file syntax

Checking /etc/prometheus/rules.yml

SUCCESS: 6 rules found

4.5 Create the systemd unit file

$ cat <<EOF > /usr/lib/systemd/system/prometheus.service

[Unit]

Description=Prometheus Service

Documentation=https://prometheus.io/docs/introduction/overview/

Wants=network-online.target

After=network-online.target

[Service]

Type=simple

User=root

Group=root

ExecStart=/usr/local/bin/prometheus --config.file=/etc/prometheus/prometheus.yml

[Install]

WantedBy=multi-user.target

EOF

4.6 Start the service

$ systemctl daemon-reload

$ systemctl enable --now prometheus.service

$ systemctl status prometheus.service

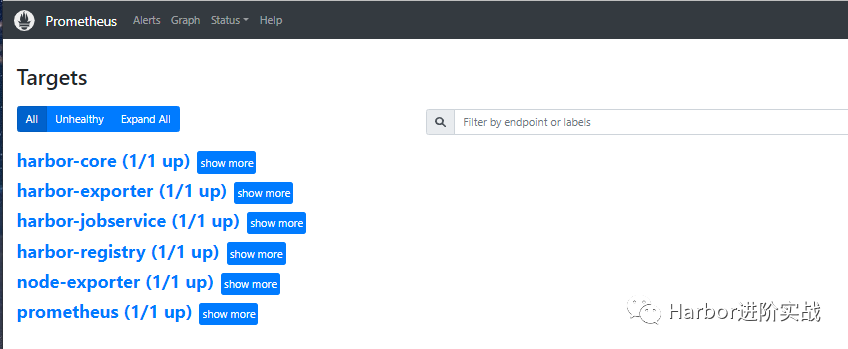

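4.7 Open the Prometheus UI in a browser

Enter the host IP followed by :9090 in the browser address bar to open the Prometheus UI.

5. Deploy node-exporter

The node-exporter service collects host resource metrics such as CPU, memory, and disk.

5.1 Download the package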

$ wget https://github.com/prometheus/node_exporter/releases/download/v1.2.2/node_exporter-1.2.2.linux-amd64.tar.gz

$ tar zxvf node_exporter-1.2.2.linux-amd64.tar.gz

$ cp node_exporter-1.2.2.linux-amd64/node_exporter /usr/local/bin/

$ node_exporter --version

node_exporter, version 1.2.2 (branch: HEAD, revision: 26645363b486e12be40af7ce4fc91e731a33104e)

build user: root@b9cb4aa2eb17

build date: 20210806-13:44:18

go version: go1.16.7

platform: linux/amd64

5.2 Create the systemd unit file

$ cat <<EOF > /usr/lib/systemd/system/node-exporter.service

[Unit]

Description=Prometheus Node Exporter

After=network.target

[Service]

ExecStart=/usr/local/bin/node_exporter

#User=prometheus

[Install]

WantedBy=multi-user.target

EOF

5.3 Start the service

$ systemctl daemon-reload

$ systemctl enable --now node-exporter.service

$ systemctl status node-exporter.service

$ ss -ntulp | grep node_exporter

tcp LISTEN 0 128 :::9100 :::* users:(("node_exporter",pid=36218,fd=3))

5.4 View the node metrics

Use curl to fetch the monitoring data collected by node-exporter.

$ curl http://localhost:9100/metrics

6. Deploy Grafana and set up the dashboard

Deploy Grafana v8.4.4 from the binary release.

6.1 Download the package

$ wget https://dl.grafana.com/enterprise/release/grafana-enterprise-8.4.4.linux-amd64.tar.gz -c

$ tar zxvf grafana-enterprise-8.4.4.linux-amd64.tar.gz -C /etc/

$ mv /etc/grafana-8.4.4 /etc/grafana

$ cp -a /etc/grafana/bin/{grafana-cli,grafana-server} /usr/local/bin/

# Install the dependencies

$ yum install -y fontpackages-filesystem.noarch libXfont libfontenc lyx-fonts.noarch xorg-x11-font-utils

6.2 Install plugins

Install the Grafana clock panel plugin:

$ grafana-cli plugins install grafana-clock-panel

Install the Zabbix plugin:

$ grafana-cli plugins install alexanderzobnin-zabbix-app

Install the packages needed for server-side image rendering:

$ yum install -y fontconfig freetype* urw-fonts

6.3 Create the systemd unit file

$ cat <<EOF >/usr/lib/systemd/system/grafana.service

[Service]

Type=notify

ExecStart=/usr/local/bin/grafana-server -homepath /etc/grafana

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

-homepath: specifies Grafana's home (working) directory

6.4 Start the Grafana service

$ systemctl daemon-reload

$ systemctl enable --now grafana.service

$ systemctl status grafana.service

$ ss -ntulp | grep grafana-server

tcp LISTEN 0 128 :::3000 :::* users:(("grafana-server",pid=120140,fd=9))

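6.5 Configure the data source

Enter the host IP and the Grafana port in the browser address bar to open the Grafana UI, then add a Prometheus data source.

Default username and password: admin/admin

6.6 Import the JSON template

Once Prometheus is collecting your Harbor metrics, you can use Grafana to visualize the data. The Harbor repository provides an example Grafana dashboard as a JSON file to help you get started. Download: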

https://github.com/goharbor/harbor/blob/main/contrib/grafana-dashborad/metrics-example.json

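7. Deploy the Alertmanager service (optional)

Alertmanager is a standalone alerting component. It receives alerts from clients such as Prometheus, groups and deduplicates them, and routes them to the correct receiver.

7.1 Download the package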

$ wget https://github.com/prometheus/alertmanager/releases/download/v0.23.0/alertmanager-0.23.0.linux-amd64.tar.gz

$ tar zxvf alertmanager-0.23.0.linux-amd64.tar.gz

$ cp alertmanager-0.23.0.linux-amd64/{alertmanager,amtool} /usr/local/bin/

7.2 Edit the configuration file

$ mkdir /etc/alertmanager

$ cat /etc/alertmanager/alertmanager.yml

global:
  resolve_timeout: 5m
route:
  group_by: ['alertname']
  group_wait: 10s
  group_interval: 10s
  repeat_interval: 1h
  receiver: 'web.hook'
receivers:
  - name: 'web.hook'
    webhook_configs:
      - url: 'http://127.0.0.1:5001/'
inhibit_rules:
  - source_match:
      severity: 'critical'
    target_match:
      severity: 'warning'
    equal: ['alertname', 'dev', 'instance']

7.3 Create the systemd unit file

$ cat <<'EOF' >/usr/lib/systemd/system/alertmanager.service
[Unit]
Description=alertmanager
After=network.target

[Service]
ExecStart=/usr/local/bin/alertmanager --config.file=/etc/alertmanager/alertmanager.yml
ExecReload=/bin/kill -HUP $MAINPID
KillMode=process
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

7.4 Start the service

$ systemctl daemon-reload

$ systemctl enable --now alertmanager.service

$ systemctl status alertmanager.service

$ ss -ntulp | grep alertmanager

7.5 Configure the alerting rules

Earlier, the Prometheus server configuration file specified /etc/prometheus/rules.yml as the alerting rules file.

$ cat /etc/prometheus/rules.yml

groups:
  - name: Warning
    rules:
      - alert: NodeMemoryUsage
        expr: 100 - (node_memory_MemFree_bytes + node_memory_Cached_bytes + node_memory_Buffers_bytes) / node_memory_MemTotal_bytes * 100 > 80
        for: 1m
        labels:
          status: Warning
        annotations:
          summary: "{{$labels.instance}}: memory usage too high"
          description: "{{$labels.instance}}: memory usage is above 80% (current value: {{ $value }})"

      - alert: NodeCpuUsage
        expr: (1 - ((sum(increase(node_cpu_seconds_total{mode="idle"}[1m])) by (instance)) / (sum(increase(node_cpu_seconds_total[1m])) by (instance)))) * 100 > 70
        for: 1m
        labels:
          status: Warning
        annotations:
          summary: "{{$labels.instance}}: CPU usage too high"
          description: "{{$labels.instance}}: CPU usage is above 70% (current value: {{ $value }})"

      - alert: NodeDiskUsage
        expr: 100 - node_filesystem_free_bytes{fstype=~"xfs|ext4"} / node_filesystem_size_bytes{fstype=~"xfs|ext4"} * 100 > 80
        for: 1m
        labels:
          status: Warning
        annotations:
          summary: "{{$labels.instance}}: partition usage too high"
          description: "{{$labels.instance}}: partition usage is above 80% (current value: {{ $value }})"

      - alert: Node-UP
        expr: up{job='node-exporter'} == 0
        for: 1m
        labels:
          status: Warning
        annotations:
          summary: "{{$labels.instance}}: service down"
          description: "{{$labels.instance}}: service has been down for more than 1 minute"

      - alert: TCP
        expr: node_netstat_Tcp_CurrEstab > 1000
        for: 1m
        labels:
          status: Warning
        annotations:
          summary: "{{$labels.instance}}: too many TCP connections"
          description: "{{$labels.instance}}: more than 1000 established connections (current value: {{$value}})"

      - alert: IO
        expr: avg(irate(node_disk_io_time_seconds_total[1m])) by (instance) * 100 > 60
        for: 1m
        labels:
          status: Warning
        annotations:
          summary: "{{$labels.instance}}: disk I/O utilization too high"
          description: "{{$labels.instance}}: disk I/O utilization is above 60% (current value: {{$value}})"
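To see what the NodeMemoryUsage expression evaluates to, the arithmetic can be reproduced outside Prometheus. The sketch below is only a worked example with made-up numbers: the hypothetical helper mem_usage_pct applies the same formula, 100 - (free + cached + buffers) / total * 100, to four values given in the same unit.

```shell
#!/usr/bin/env bash
# mem_usage_pct FREE CACHED BUFFERS TOTAL: apply the NodeMemoryUsage formula
#   100 - (free + cached + buffers) / total * 100
# All four arguments must use the same unit. Hypothetical helper for illustration.
mem_usage_pct() {
  awk -v f="$1" -v c="$2" -v b="$3" -v t="$4" \
    'BEGIN { printf "%.1f\n", 100 - (f + c + b) / t * 100 }'
}

# Made-up host: 8000 MiB total, 1000 free, 300 cached, 100 buffers.
# (1000 + 300 + 100) / 8000 = 17.5% available, so usage is 82.5%,
# which is above the 80% threshold used by the rule.
mem_usage_pct 1000 300 100 8000
```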