Isn't ES good enough? Why bother with ClickHouse?


Author: Gang Tao. Editor: Tao Jialong (陶家龙)

Source: zhuanlan.zhihu.com/p/353296392

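Elasticsearch is a real-time, distributed search and analytics engine built on top of Lucene. Put simply, it extends Lucene's search capabilities so that they work across a distributed cluster.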

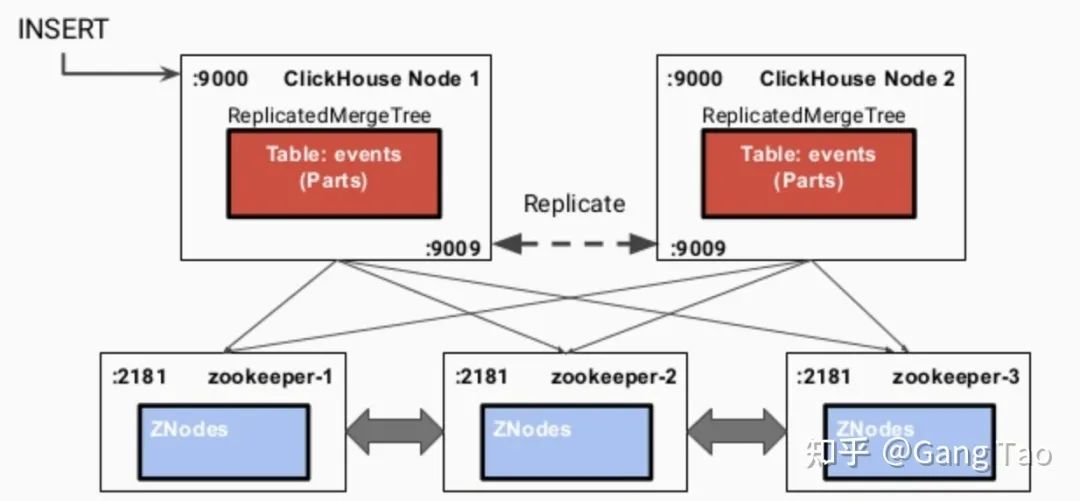

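Architecture and design comparison

Client Node: handles API requests and data access. It does not store or process data.

Data Node: stores and indexes the data.

Master Node: the management node. It coordinates the nodes in the cluster and does not store data.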

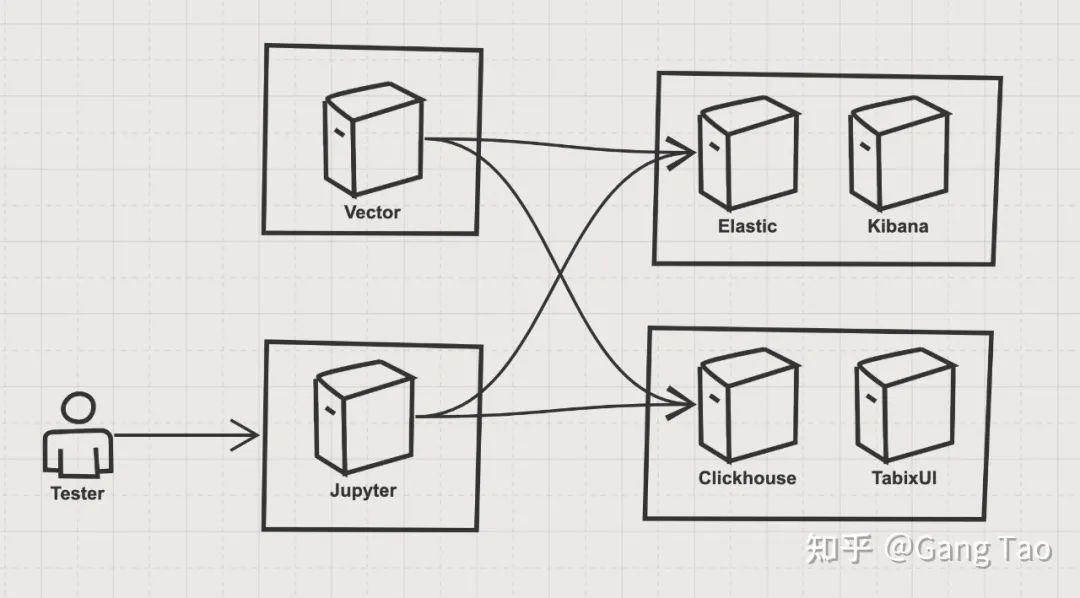

Hands-on query comparison

https://github.com/gangtao/esvsch

# docker-compose file for the Elasticsearch stack (Elasticsearch + Kibana)
version: '3.7'

services:
  elasticsearch:
    image: docker.elastic.co/elasticsearch/elasticsearch:7.4.0
    container_name: elasticsearch
    environment:
      - xpack.security.enabled=false
      - discovery.type=single-node
    ulimits:
      memlock:
        soft: -1
        hard: -1
      nofile:
        soft: 65536
        hard: 65536
    cap_add:
      - IPC_LOCK
    volumes:
      - elasticsearch-data:/usr/share/elasticsearch/data
    ports:
      - 9200:9200
      - 9300:9300
    deploy:
      resources:
        limits:
          cpus: '4'
          memory: 4096M
        reservations:
          memory: 4096M

  kibana:
    container_name: kibana
    image: docker.elastic.co/kibana/kibana:7.4.0
    environment:
      - ELASTICSEARCH_HOSTS=http://elasticsearch:9200
    ports:
      - 5601:5601
    depends_on:
      - elasticsearch

volumes:
  elasticsearch-data:
    driver: local

# docker-compose file for the ClickHouse stack (ClickHouse server + Tabix UI)
version: "3.7"

services:
  clickhouse:
    container_name: clickhouse
    image: yandex/clickhouse-server
    volumes:
      - ./data/config:/var/lib/clickhouse
    ports:
      - "8123:8123"
      - "9000:9000"
      - "9009:9009"
      - "9004:9004"
    ulimits:
      nproc: 65535
      nofile:
        soft: 262144
        hard: 262144
    healthcheck:
      test: ["CMD", "wget", "--spider", "-q", "localhost:8123/ping"]
      interval: 30s
      timeout: 5s
      retries: 3
    deploy:
      resources:
        limits:
          cpus: '4'
          memory: 4096M
        reservations:
          memory: 4096M

  tabixui:
    container_name: tabixui
    image: spoonest/clickhouse-tabix-web-client
    environment:
      - CH_NAME=dev
      - CH_HOST=127.0.0.1:8123
      - CH_LOGIN=default
    ports:
      - "18080:80"
    depends_on:
      - clickhouse
    deploy:
      resources:
        limits:
          cpus: '0.1'
          memory: 128M
        reservations:
          memory: 128M

-- ClickHouse table that receives the syslog events
CREATE TABLE default.syslog(
    application String,
    hostname String,
    message String,
    mid String,
    pid String,
    priority Int16,
    raw String,
    timestamp DateTime('UTC'),
    version Int16
) ENGINE = MergeTree()
    PARTITION BY toYYYYMMDD(timestamp)
    ORDER BY timestamp
    TTL timestamp + toIntervalMonth(1);
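To make the `PARTITION BY` and `TTL` clauses above more concrete, here is a minimal Python sketch of what they compute for a single row. The helper names `partition_key` and `ttl_expiry` are hypothetical and only approximate ClickHouse's `toYYYYMMDD` and `timestamp + toIntervalMonth(1)`; in particular, the end-of-month clamping is an assumption about how the month arithmetic behaves.

```python
import calendar
from datetime import datetime, timezone


def partition_key(ts: datetime) -> int:
    """Approximate toYYYYMMDD(timestamp): one partition per UTC calendar day."""
    ts = ts.astimezone(timezone.utc)
    return ts.year * 10000 + ts.month * 100 + ts.day


def ttl_expiry(ts: datetime, months: int = 1) -> datetime:
    """Approximate timestamp + toIntervalMonth(months).

    The day is clamped to the length of the target month (assumption).
    """
    month_index = ts.month - 1 + months
    year = ts.year + month_index // 12
    month = month_index % 12 + 1
    day = min(ts.day, calendar.monthrange(year, month)[1])
    return ts.replace(year=year, month=month, day=day)


sample = datetime(2021, 1, 31, 12, 0, 0, tzinfo=timezone.utc)
print(partition_key(sample))  # partition this row would land in
print(ttl_expiry(sample))     # moment after which the row becomes eligible for deletion
```

With daily partitions and a one-month TTL, the table holds roughly 30 partitions at any time, and expired rows are removed in the background during merges.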

# vector.toml: generate syslog events, parse them, and ship them to ClickHouse and ES
[sources.in]
type = "generator"
format = "syslog"
interval = 0.01
count = 100000

[transforms.clone_message]
type = "add_fields"
inputs = ["in"]
fields.raw = "{{ message }}"

[transforms.parser]
# General
type = "regex_parser"
inputs = ["clone_message"]
field = "message" # optional, default
patterns = ['^<(?P<priority>\d*)>(?P<version>\d) (?P<timestamp>\d{4}-\d{2}-\d{2}T\d{2}:\d{2}:\d{2}\.\d{3}Z) (?P<hostname>\w+\.\w+) (?P<application>\w+) (?P<pid>\d+) (?P<mid>ID\d+) - (?P<message>.*)$']

[transforms.coercer]
type = "coercer"
inputs = ["parser"]
types.timestamp = "timestamp"
types.version = "int"
types.priority = "int"

[sinks.out_console]
# General
type = "console"
inputs = ["coercer"]
target = "stdout"
# Encoding
encoding.codec = "json"

[sinks.out_clickhouse]
host = "http://host.docker.internal:8123"
inputs = ["coercer"]
table = "syslog"
type = "clickhouse"
encoding.only_fields = ["application", "hostname", "message", "mid", "pid", "priority", "raw", "timestamp", "version"]
encoding.timestamp_format = "unix"

[sinks.out_es]
# General
type = "elasticsearch"
inputs = ["coercer"]
compression = "none"
endpoint = "http://host.docker.internal:9200"
index = "syslog-%F"
# Encoding
# Healthcheck
healthcheck.enabled = true

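sources.in: generates simulated syslog data, 100,000 events in total, at an interval of 0.01 seconds.

transforms.clone_message: copies the original message, so the raw message is preserved alongside the extracted fields.

transforms.parser: uses a regular expression that follows the syslog format to extract the fields application, hostname, message, mid, pid, priority, timestamp, and version.

transforms.coercer: converts the data types.

sinks.out_console: prints the generated data to the console for development and debugging.

sinks.out_clickhouse: sends the generated data to ClickHouse.

sinks.out_es: sends the generated data to ES.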
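The parse and coerce steps described above can be sketched in plain Python. This is only an illustration of the idea, not how Vector implements it: the function name `parse_syslog` and the sample log line are made up, and the regular expression assumes the eight named groups listed for transforms.parser.

```python
import re
from datetime import datetime, timezone

# Named groups follow the fields listed for transforms.parser.
SYSLOG_PATTERN = re.compile(
    r"^<(?P<priority>\d*)>(?P<version>\d) "
    r"(?P<timestamp>\d{4}-\d{2}-\d{2}T\d{2}:\d{2}:\d{2}\.\d{3}Z) "
    r"(?P<hostname>\w+\.\w+) (?P<application>\w+) "
    r"(?P<pid>\d+) (?P<mid>ID\d+) - (?P<message>.*)$"
)


def parse_syslog(line):
    """Return a dict of typed fields, or None if the line does not match."""
    match = SYSLOG_PATTERN.match(line)
    if match is None:
        return None
    event = match.groupdict()
    # clone_message step: keep the untouched line next to the parsed fields.
    event["raw"] = line
    # coercer step: priority and version become ints, timestamp a datetime.
    event["priority"] = int(event["priority"]) if event["priority"] else None
    event["version"] = int(event["version"])
    event["timestamp"] = datetime.strptime(
        event["timestamp"], "%Y-%m-%dT%H:%M:%S.%fZ"
    ).replace(tzinfo=timezone.utc)
    return event


# Hypothetical sample line in the same shape as the generated data.
line = "<13>1 2021-01-15T08:30:00.123Z for.org ahmadajmi 2890 ID416 - Pretty pretty pretty good"
print(parse_syslog(line))
```

Keeping `raw` alongside the parsed fields is what later allows the ClickHouse side to run substring searches over the full original line.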

Run the following Docker command to execute the pipeline:

docker run \
  -v $(mkfile_path)/vector.toml:/etc/vector/vector.toml:ro \
  -p 18383:8383 \
  timberio/vector:nightly-alpine

# ES
{
  "query": {
    "match_all": {}
  }
}

# ClickHouse
"SELECT * FROM syslog"

# ES
{
  "query": {
    "match": {
      "hostname": "for.org"
    }
  }
}

# ClickHouse
"SELECT * FROM syslog WHERE hostname='for.org'"

# ES
{
  "query": {
    "multi_match": {
      "query": "up.com ahmadajmi",
      "fields": [
        "hostname",
        "application"
      ]
    }
  }
}

# ClickHouse
"SELECT * FROM syslog WHERE hostname='up.com' OR application='ahmadajmi'"

# ES
{
  "query": {
    "term": {
      "message": "pretty"
    }
  }
}

# ClickHouse
"SELECT * FROM syslog WHERE lowerUTF8(raw) LIKE '%pretty%'"
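The two queries above are close but not strictly equivalent: a term query looks up an exact token in the inverted index, while the ClickHouse query does a case-insensitive substring scan over `raw`. The sketch below illustrates the difference. The `analyze` function is a rough stand-in for a text analyzer (lowercase, split on non-alphanumerics) and is an assumption, not Elasticsearch's actual analysis chain. All function names are hypothetical.

```python
import re


def analyze(text):
    """Rough analyzer stand-in: lowercase, then split on non-alphanumeric runs."""
    return [token for token in re.split(r"[^0-9a-z]+", text.lower()) if token]


def term_match(text, term):
    """Token-level match, similar in spirit to a term query on an analyzed field."""
    return term in analyze(text)


def like_match(text, needle):
    """Substring match, similar in spirit to lowerUTF8(col) LIKE '%needle%'."""
    return needle in text.lower()


for message in ["Pretty pretty pretty good", "prettyprint enabled"]:
    print(message, term_match(message, "pretty"), like_match(message, "pretty"))
```

The second message shows the gap: the substring scan finds "pretty" inside "prettyprint", while the token lookup does not, so result counts from the two systems can differ slightly.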

# ES
{
  "query": {
    "range": {
      "version": {
        "gte": 2
      }
    }
  }
}

# ClickHouse
"SELECT * FROM syslog WHERE version >= 2"

# ES
{
  "query": {
    "exists": {
      "field": "application"
    }
  }
}

# ClickHouse
"SELECT * FROM syslog WHERE application IS NOT NULL"

# ES
{
  "query": {
    "regexp": {
      "hostname": {
        "value": "up.*",
        "flags": "ALL",
        "max_determinized_states": 10000,
        "rewrite": "constant_score"
      }
    }
  }
}

# ClickHouse
"SELECT * FROM syslog WHERE match(hostname, 'up.*')"
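The ES/ClickHouse pairs so far follow a regular pattern, which can be summarized as a small translator from a subset of the ES query DSL to a SQL `WHERE` clause. This is a sketch for illustration only: `es_to_where` and `quote` are hypothetical helpers, they cover just the query types shown in this article, and field names are interpolated without validation.

```python
RANGE_OPS = {"gt": ">", "gte": ">=", "lt": "<", "lte": "<="}


def quote(value):
    """Render a Python value as a SQL literal (numbers bare, strings quoted)."""
    if isinstance(value, (int, float)):
        return str(value)
    escaped = str(value).replace("\\", "\\\\").replace("'", "\\'")
    return "'" + escaped + "'"


def es_to_where(query):
    """Translate one ES query clause into a ClickHouse WHERE expression."""
    kind, body = next(iter(query.items()))
    if kind == "match_all":
        return "1"
    if kind == "match":
        field, value = next(iter(body.items()))
        return f"{field} = {quote(value)}"
    if kind == "range":
        field, bounds = next(iter(body.items()))
        return " AND ".join(
            f"{field} {RANGE_OPS[op]} {quote(v)}" for op, v in bounds.items()
        )
    if kind == "exists":
        return f"{body['field']} IS NOT NULL"
    if kind == "regexp":
        field, spec = next(iter(body.items()))
        return f"match({field}, {quote(spec['value'])})"
    raise ValueError(f"unsupported query type: {kind}")


print("SELECT * FROM syslog WHERE " + es_to_where({"range": {"version": {"gte": 2}}}))
```

The mapping mirrors the pairs in this article; it does not guarantee that both systems return identical results, since matching semantics (analysis, anchoring of regular expressions) differ between them.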

# ES
{
  "aggs": {
    "version_count": {
      "value_count": {
        "field": "version"
      }
    }
  }
}

# ClickHouse
"SELECT count(version) FROM syslog"

# ES
{
  "aggs": {
    "my-agg-name": {
      "cardinality": {
        "field": "priority"
      }
    }
  }
}

# ClickHouse
"SELECT count(distinct(priority)) FROM syslog"
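To compare the two systems on query pairs like the ones above, each query can be run several times while recording latency. The following is a minimal sketch using only the Python standard library. The function names, the `localhost` endpoints (taken from the port mappings in the compose files), and the `syslog-*` index pattern are assumptions for illustration, not the benchmark code used for the article's results.

```python
import json
import statistics
import time
import urllib.request


def es_search(body, url="http://localhost:9200/syslog-*/_search"):
    """POST a query DSL body to the ES _search endpoint and return the raw response."""
    request = urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return response.read()


def ch_query(sql, url="http://localhost:8123/"):
    """POST a SQL statement to the ClickHouse HTTP interface and return the raw response."""
    request = urllib.request.Request(url, data=sql.encode("utf-8"))
    with urllib.request.urlopen(request) as response:
        return response.read()


def time_query(run_query, runs=10):
    """Call run_query() `runs` times and return the per-call latencies in seconds."""
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        run_query()
        latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies):
    """Reduce a list of latencies to min, mean, and max."""
    return {
        "min": min(latencies),
        "mean": statistics.mean(latencies),
        "max": max(latencies),
    }
```

With both stacks running, a comparison of the first query pair could look like `summarize(time_query(lambda: es_search({"query": {"match_all": {}}})))` against `summarize(time_query(lambda: ch_query("SELECT * FROM syslog")))`. Repeating each query several times helps smooth out cache warm-up and other one-off effects.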

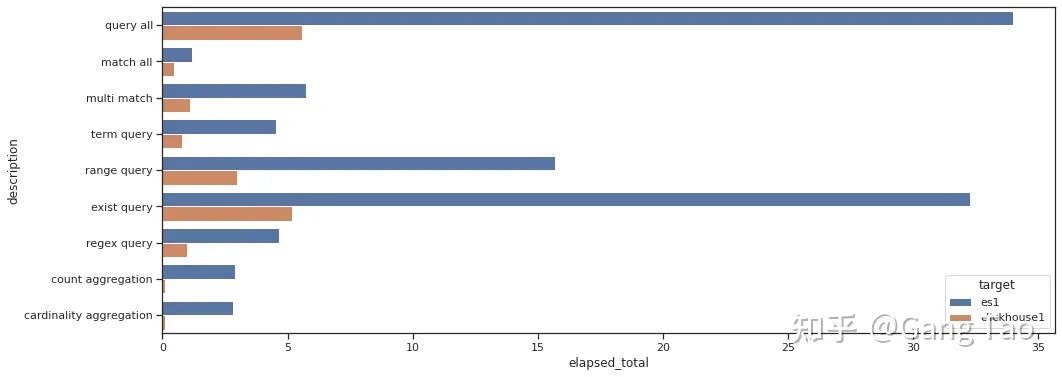

Summary
