Deploying a DeepLabv3+ Model with OpenCV4


Reposted from: opencv学堂

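As mentioned in earlier posts, OpenCV DNN supports more than image classification and object detection models. It can also run many custom models. DeepLabv3 is one of the most widely used models for image semantic segmentation. This article shows how to use OpenCV DNN to load a DeepLabv3 model and segment an image, with either a MobileNet or an Xception backbone. The pretrained models can be downloaded here: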

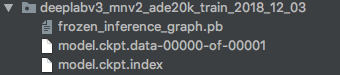

https://github.com/tensorflow/models/blob/master/research/deeplab/g3doc/model_zoo.md

Each downloaded pretrained model contains a .pb file and ckpt files. The .pb file can be used directly.

For the MobileNet version of DeepLabv3, convert the MobileNetV2 ckpt to a pb file with the following script:

python deeplab/export_model.py \

--logtostderr \

--checkpoint_path="/home/lw/data/cityscapes/train/model.ckpt-2000" \

--export_path="/home/lw/data/pb/frozen_inference_graph.pb" \

--model_variant="mobilenet_v2" \

#--atrous_rates=6 \

#--atrous_rates=12 \

#--atrous_rates=18 \

#--output_stride=16 \

--decoder_output_stride=4 \

--num_classes=6 \

--crop_size=513 \

--crop_size=513 \

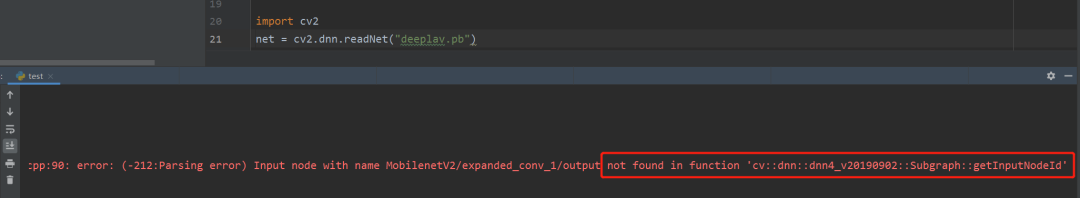

--inference_scales=1.0接下来使用opencv加载mobilenetv2转换好的pb模型会报下面的错误:

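Fix for MobileNetV2: cut the graph down to the part between the network's input node and the logits node. Then sort it in execution order and save it as a new pb file: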

import tensorflow as tf

from tensorflow.tools.graph_transforms import TransformGraph

from tensorflow.python.tools import optimize_for_inference_lib

graph = 'frozen_inference_graph.pb'

with tf.gfile.FastGFile(graph, 'rb') as f:

graph_def = tf.GraphDef()

graph_def.ParseFromString(f.read())

tf.summary.FileWriter('logs', graph_def)

inp_node = 'MobilenetV2/MobilenetV2/input'

out_node = 'logits/semantic/BiasAdd'

graph_def = optimize_for_inference_lib.optimize_for_inference(graph_def, [inp_node], [out_node],

tf.float32.as_datatype_enum)

graph_def = TransformGraph(graph_def, [inp_node], [out_node], ["sort_by_execution_order"])

with tf.gfile.FastGFile('frozen_inference_graph_opt.pb', 'wb') as f:

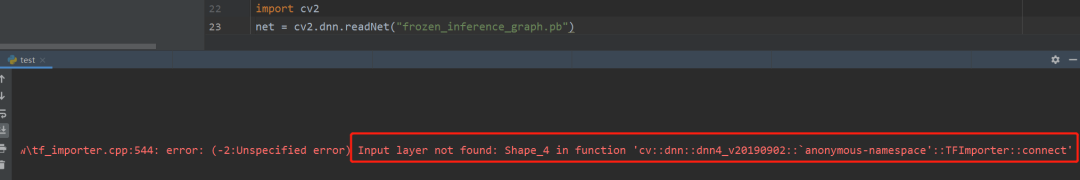

f.write(graph_def.SerializeToString()) 使用xception的解决办法
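The core of this fix is graph pruning. TensorFlow's `optimize_for_inference` replaces the chosen input node with a placeholder and keeps only the nodes the output node still depends on. The preprocessing ops in front of the input are therefore dropped, and they are presumably what OpenCV fails on. The real function also folds batch norms and removes identity ops. The sketch below is a simplified pure-Python model of the pruning step only. It assumes a hypothetical toy graph stored as a dict (node name to input names); it is not TensorFlow's implementation.

```python
from typing import Dict, List, Set

# Hypothetical graph representation: node name -> names of the nodes it consumes
Graph = Dict[str, List[str]]


def strip_unused(graph: Graph, input_nodes: Set[str], output_nodes: List[str]) -> Graph:
    """Keep only the nodes needed for output_nodes, treating input_nodes as placeholders."""
    # 1. A chosen input node becomes a placeholder, so it no longer depends on anything
    rewired = {name: ([] if name in input_nodes else list(deps)) for name, deps in graph.items()}
    # 2. Walk backwards from the outputs and collect every node that is reached
    keep: Set[str] = set()
    stack = list(output_nodes)
    while stack:
        name = stack.pop()
        if name not in keep:
            keep.add(name)
            stack.extend(rewired[name])
    return {name: deps for name, deps in rewired.items() if name in keep}


# Hypothetical toy graph: preprocessing ops before the network input, an argmax after the logits
toy_graph: Graph = {
    "image": [],
    "preprocess/resize": ["image"],
    "preprocess/normalize": ["preprocess/resize"],
    "net/input": ["preprocess/normalize"],
    "net/conv": ["net/input"],
    "logits/BiasAdd": ["net/conv"],
    "postprocess/argmax": ["logits/BiasAdd"],
}

pruned = strip_unused(toy_graph, {"net/input"}, ["logits/BiasAdd"])
print(sorted(pruned))  # ['logits/BiasAdd', 'net/conv', 'net/input']
```

Because everything after the logits node is also dropped, the per-pixel argmax has to be done outside the network. The OpenCV script does exactly that with `np.argmax`.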

Fix for Xception (same procedure, with `sub_2` as the input node):

```python
# Requires TensorFlow 1.x (tf.gfile, tf.GraphDef and graph_transforms are TF1 APIs)
import tensorflow as tf
from tensorflow.tools.graph_transforms import TransformGraph
from tensorflow.python.tools import optimize_for_inference_lib

graph = 'frozen_inference_graph.pb'

# Load the frozen graph exported by export_model.py
with tf.gfile.FastGFile(graph, 'rb') as f:
    graph_def = tf.GraphDef()
    graph_def.ParseFromString(f.read())

# Optional: write the graph to ./logs so node names can be inspected in TensorBoard
tf.summary.FileWriter('logs', graph_def).close()

inp_node = 'sub_2'  # start node
out_node = 'logits/semantic/BiasAdd'  # end node
graph_def = optimize_for_inference_lib.optimize_for_inference(
    graph_def, [inp_node], [out_node], tf.float32.as_datatype_enum)
graph_def = TransformGraph(graph_def, [inp_node], [out_node], ["sort_by_execution_order"])

# Save the optimized graph for OpenCV
with tf.gfile.FastGFile('frozen_inference_graph_opt.pb', 'wb') as f:
    f.write(graph_def.SerializeToString())
```

Finally, load the optimized pb with OpenCV DNN and run semantic segmentation on an image:

```python
import cv2
import numpy as np

# One random color per class index (uint8 so that cv2.resize accepts the color map)
np.random.seed(0)
color = np.random.randint(0, 255, size=[150, 3], dtype=np.uint8)
print(color)

# Optional legend: uncomment if a labels.names file (one class name per line) is available
# classes = None
# with open("labels.names", 'rt') as f:
#     classes = f.read().rstrip('\n').split('\n')
#
# legend = None
# def showLegend(classes):
#     global legend
#     if classes is not None and legend is None:
#         blockHeight = 30
#         assert len(classes) <= len(color)
#         legend = np.zeros((blockHeight * len(classes), 200, 3), np.uint8)
#         for i in range(len(classes)):
#             block = legend[i * blockHeight:(i + 1) * blockHeight]
#             block[:, :] = color[i]
#             cv2.putText(block, classes[i], (0, blockHeight // 2), cv2.FONT_HERSHEY_SIMPLEX, 0.5, (255, 255, 255))
#         cv2.namedWindow('Legend', cv2.WINDOW_NORMAL)
#         cv2.imshow('Legend', legend)
#         cv2.waitKey()

# Read the image
frame = cv2.imread("1.jpg")
if frame is None:
    raise FileNotFoundError("1.jpg")
frameHeight, frameWidth = frame.shape[:2]

# Load the optimized model
net = cv2.dnn.readNet("frozen_inference_graph_opt.pb")
# Resize to 513x513, swap BGR->RGB, map pixels to [-1, 1]: (x - 127.5) * 0.007843
blob = cv2.dnn.blobFromImage(frame, 0.007843, (513, 513), (127.5, 127.5, 127.5), swapRB=True)
net.setInput(blob)
score = net.forward()  # shape (1, numClasses, height, width)

numClasses = score.shape[1]
height = score.shape[2]
width = score.shape[3]

classIds = np.argmax(score[0], axis=0)  # per-pixel index of the highest class score
segm = color[classIds]  # color lookup per pixel, shape (height, width, 3)
segm = cv2.resize(segm, (frameWidth, frameHeight), interpolation=cv2.INTER_NEAREST)
# Blend the image and the color map; clip so sums above 255 saturate instead of wrapping
frame = np.clip(0.3 * frame + 0.8 * segm, 0, 255).astype(np.uint8)

# showLegend(classes)
cv2.imshow("img", frame)
cv2.waitKey()
```
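The sketch below spells out the tensor shapes and arithmetic around `net.forward()` using NumPy only. It covers:

- the normalization `cv2.dnn.blobFromImage` applies here: mean subtraction, then scaling, BGR to RGB, HWC to NCHW;
- the per-pixel argmax over the class axis;
- the palette lookup;
- nearest-neighbour upsampling of the label map;
- a saturating blend.

It makes several assumptions. Resizing in the blob step is omitted, so the image is taken to be network-sized already. A random `score` array stands in for the network output, and all sizes are arbitrary. The helpers `blob_from_image` and `upsample_nearest` are hypothetical. The upsampling mirrors the idea of `INTER_NEAREST` but is not guaranteed to match OpenCV bit for bit.

```python
import numpy as np


def blob_from_image(img_bgr: np.ndarray, scale: float, mean: float, swap_rb: bool = True) -> np.ndarray:
    """Sketch of blobFromImage without resizing: (img - mean) * scale, BGR->RGB, HWC->NCHW."""
    x = img_bgr.astype(np.float32)
    if swap_rb:
        x = x[:, :, ::-1]  # BGR -> RGB
    x = (x - mean) * scale  # subtract the mean first, then scale
    return x.transpose(2, 0, 1)[np.newaxis]  # shape (1, 3, H, W)


def upsample_nearest(labels: np.ndarray, out_h: int, out_w: int) -> np.ndarray:
    """Nearest-neighbour resize of a label map; it never creates labels that were not there."""
    in_h, in_w = labels.shape
    rows = np.arange(out_h) * in_h // out_h
    cols = np.arange(out_w) * in_w // out_w
    return labels[rows[:, None], cols[None, :]]


rng = np.random.default_rng(0)
frame = rng.integers(0, 256, size=(40, 60, 3), dtype=np.uint8)  # stand-in BGR image
blob = blob_from_image(frame, 1 / 127.5, 127.5)  # values now lie in [-1, 1]

# Stand-in for net.forward(): class scores of shape (1, num_classes, height, width)
num_classes, height, width = 6, 10, 15
score = rng.standard_normal((1, num_classes, height, width)).astype(np.float32)

class_ids = np.argmax(score[0], axis=0)  # per-pixel class index, shape (height, width)
palette = rng.integers(0, 256, size=(num_classes, 3), dtype=np.uint8)
segm = palette[upsample_nearest(class_ids, 40, 60)]  # color map at image size, (40, 60, 3)

# Blend in float, then clip so values above 255 saturate instead of overflowing uint8
overlay = np.clip(0.3 * frame + 0.8 * segm, 0, 255).astype(np.uint8)
print(blob.shape, overlay.shape, overlay.dtype)  # (1, 3, 40, 60) (40, 60, 3) uint8
```

Upsampling the label map (or its color map) with nearest-neighbour interpolation keeps every output pixel equal to one of the predicted classes. Bilinear interpolation would mix neighbouring class colors along object boundaries.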
