MyBatis批量插入几千条数据,请慎用foreach

近日,项目中有一个耗时较长的Job存在CPU占用过高的问题,经排查发现,主要时间消耗在往MyBatis中批量插入数据。mapper configuration是用foreach循环做的,差不多是这样。(由于项目保密,以下代码均为自己手写的demo代码)

<insert id="batchInsert" parameterType="java.util.List">

insert into USER (id, name) values

<foreach collection="list" item="model" index="index" separator=",">

(#{model.id}, #{model.name})

foreach>

insert>

这个方法提升批量插入速度的原理是,将传统的:

INSERT INTO `table1` (`field1`, `field2`) VALUES ("data1", "data2");

INSERT INTO `table1` (`field1`, `field2`) VALUES ("data1", "data2");

INSERT INTO `table1` (`field1`, `field2`) VALUES ("data1", "data2");

INSERT INTO `table1` (`field1`, `field2`) VALUES ("data1", "data2");

INSERT INTO `table1` (`field1`, `field2`) VALUES ("data1", "data2");

转化为:

INSERT INTO `table1` (`field1`, `field2`)

VALUES ("data1", "data2"),

("data1", "data2"),

("data1", "data2"),

("data1", "data2"),

("data1", "data2");

Of course don't combine ALL of them, if the amount is HUGE. Say you have 1000 rows you need to insert, then don't do it one at a time. You shouldn't equally try to have all 1000 rows in a single query. Instead break it into smaller sizes.

Insert inside Mybatis foreach is not batch, this is a single (could become giant) SQL statement and that brings drawbacks:

some database such as Oracle here does not support. in relevant cases: there will be a large number of records to insert and the database configured limit (by default around 2000 parameters per statement) will be hit, and eventually possibly DB stack error if the statement itself become too large.

Iteration over the collection must not be done in the mybatis XML. Just execute a simple Insertstatement in a Java Foreach loop. The most important thing is the session Executor type.

SqlSession session = sessionFactory.openSession(ExecutorType.BATCH);

for (Model model : list) {

session.insert("insertStatement", model);

}

session.flushStatements();

Unlike default ExecutorType.SIMPLE, the statement will be prepared once and executed for each record to insert.

从资料中可知,默认执行器类型为Simple,会为每个语句创建一个新的预处理语句,也就是创建一个PreparedStatement对象。在我们的项目中,会不停地使用批量插入这个方法,而因为MyBatis对于含有

Internally, it still generates the same single insert statement with many placeholders as the JDBC code above.

MyBatis has an ability to cache PreparedStatement, but this statement cannot be cached because it contains

element and the statement varies depending on the parameters. As a result, MyBatis has to 1) evaluate the foreach part and 2) parse the statement string to build parameter mapping [1] on every execution of this statement.And these steps are relatively costly process when the statement string is big and contains many placeholders.

[1] simply put, it is a mapping between placeholders and the parameters.

从上述资料可知,耗时就耗在,由于我foreach后有5000+个values,所以这个PreparedStatement特别长,包含了很多占位符,对于占位符和参数的映射尤其耗时。并且,查阅相关资料可知,values的增长与所需的解析时间,是呈指数型增长的。

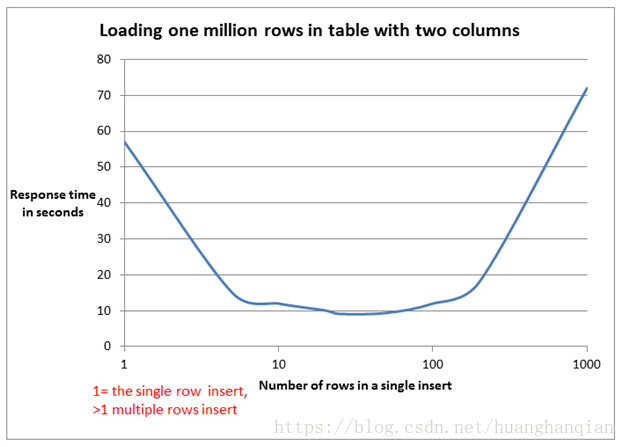

所以,如果非要使用 foreach 的方式来进行批量插入的话,可以考虑减少一条 insert 语句中 values 的个数,最好能达到上面曲线的最底部的值,使速度最快。一般按经验来说,一次性插20~50行数量是比较合适的,时间消耗也能接受。

重点来了。上面讲的是,如果非要用

SqlSession session = sqlSessionFactory.openSession(ExecutorType.BATCH);

try {

SimpleTableMapper mapper = session.getMapper(SimpleTableMapper.class);

List records = getRecordsToInsert(); // not shown

BatchInsert batchInsert = insert(records)

.into(simpleTable)

.map(id).toProperty("id")

.map(firstName).toProperty("firstName")

.map(lastName).toProperty("lastName")

.map(birthDate).toProperty("birthDate")

.map(employed).toProperty("employed")

.map(occupation).toProperty("occupation")

.build()

.render(RenderingStrategy.MYBATIS3);

batchInsert.insertStatements().stream().forEach(mapper::insert);

session.commit();

} finally {

session.close();

}

即基本思想是将 MyBatis session 的 executor type 设为 Batch ,然后多次执行插入语句。就类似于JDBC的下面语句一样。

Connection connection = DriverManager.getConnection("jdbc:mysql://127.0.0.1:3306/mydb?useUnicode=true&characterEncoding=UTF-8&useServerPrepStmts=false&rewriteBatchedStatements=true","root","root");

connection.setAutoCommit(false);

PreparedStatement ps = connection.prepareStatement(

"insert into tb_user (name) values(?)");

for (int i = 0; i < stuNum; i++) {

ps.setString(1,name);

ps.addBatch();

}

ps.executeBatch();

connection.commit();

connection.close();

经过试验,使用了 ExecutorType.BATCH 的插入方式,性能显著提升,不到 2s 便能全部插入完成。

总结一下,如果MyBatis需要进行批量插入,推荐使用 ExecutorType.BATCH 的插入方式,如果非要使用

https://dev.mysql.com/doc/refman/5.6/en/insert-optimization.html https://stackoverflow.com/questions/19682414/how-can-mysql-insert-millions-records-fast https://stackoverflow.com/questions/32649759/using-foreach-to-do-batch-insert-with-mybatis/40608353 https://blog.csdn.net/wlwlwlwl015/article/details/50246717 http://blog.harawata.net/2016/04/bulk-insert-multi-row-vs-batch-using.html https://www.red-gate.com/simple-talk/sql/performance/comparing-multiple-rows-insert-vs-single-row-insert-with-three-data-load-methods/ https://stackoverflow.com/questions/7004390/java-batch-insert-into-mysql-very-slow http://www.mybatis.org/mybatis-dynamic-sql/docs/insert.html

END

推荐阅读 一键生成Springboot & Vue项目!【毕设神器】

Java可视化编程工具系列(一)

Java可视化编程工具系列(二)

顺便给大家推荐一个GitHub项目,这个 GitHub 整理了上千本常用技术PDF,绝大部分核心的技术书籍都可以在这里找到,

GitHub地址:https://github.com/javadevbooks/books

Gitee地址:https://gitee.com/javadevbooks/books

电子书已经更新好了,你们需要的可以自行下载了,记得点一个star,持续更新中..